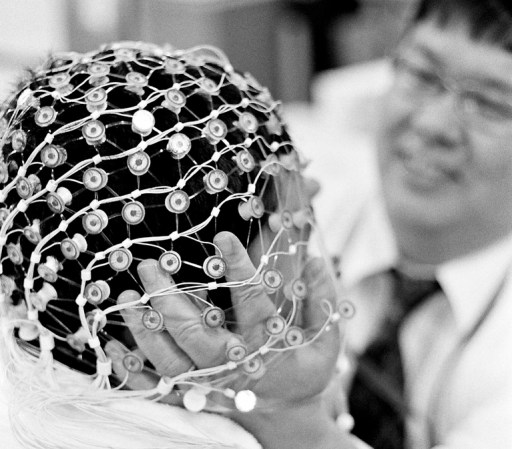

We recently gave the Parrot AR.Drone 2.0 a pretty solid review here on PopSci for improvements made to the recreational quadcopter’s smartphone- or tablet-based control interface, which we found to be very intuitive. But a team of researchers at Zhejiang University in Hangzhou, China, has gone a long step further. Using an off-the-shelf Emotiv EEG headset, they’ve devised a brain-machine interface that lets users control an AR.Drone with their thoughts alone.

As you will see in the video below, the interface isn’t exactly seamless mind control, and its range of motions is somewhat limited. The user thinks “right” to fly forward, “push” to increase altitude, and “left” to turn clockwise. “Hard left” initiates takeoff, while clenching teeth causes the drone to descend. The drone’s onboard camera is controlled via blinking: four rapid blinks snap an image of whatever the drone’s video feed is displaying.
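For the programmers in the audience, the command scheme described above boils down to a lookup table plus a blink counter. The following is only a rough sketch of that idea, not the researchers’ software: the event labels, the action names, and the 1.5-second blink window are all assumptions made for illustration, and nothing here calls the real Emotiv or AR.Drone software.

```python
# Hypothetical labels and action names, chosen for illustration only.
# This does not use the Emotiv SDK or the AR.Drone API.
COMMAND_MAP = {
    "right": "fly_forward",
    "push": "ascend",
    "left": "turn_clockwise",
    "hard_left": "take_off",
    "teeth_clench": "descend",
}

BLINKS_FOR_PHOTO = 4
BLINK_WINDOW_S = 1.5  # assumed value; the article only says "rapidly"


def translate(events):
    """Turn (timestamp_seconds, label) events into a list of drone action names."""
    actions = []
    blink_times = []
    for timestamp, label in events:
        if label == "blink":
            # Keep only blinks recent enough to count as one rapid burst.
            blink_times = [t for t in blink_times if timestamp - t <= BLINK_WINDOW_S]
            blink_times.append(timestamp)
            if len(blink_times) == BLINKS_FOR_PHOTO:
                actions.append("take_photo")
                blink_times = []
        elif label in COMMAND_MAP:
            actions.append(COMMAND_MAP[label])
        # Any other label is ignored.
    return actions
```

Feeding `translate` a “hard_left” event followed by four blinks within the window would yield a takeoff and then a photo, while four blinks spaced a second apart would yield nothing.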

So maybe it’s still not quite as intuitive as tilting an iPad in the direction you want to fly. But for disabled people the ability to use brain signals to control such a platform is pretty huge. Not only can it allow a person a form of mobility through the extension of the drone and its camera, but it lays the groundwork for other brain-machine interfaces that could extend more mobility to those who currently lack it. Moreover, this is no piece of expensive lab equipment. It requires only a commercially available EEG device, a laptop, and an AR.Drone, none of which is seriously bank-breaking.
