Robots that read and respond to brain waves could eventually help stroke patients regain movement, using new neural interfaces designed to retrain damaged motor pathways. Neuroscientists have made great strides in brain-machine interfaces that respond to a person’s thoughts. A new generation will drive a non-invasive robotic orthotic that retrains the patient’s own body.

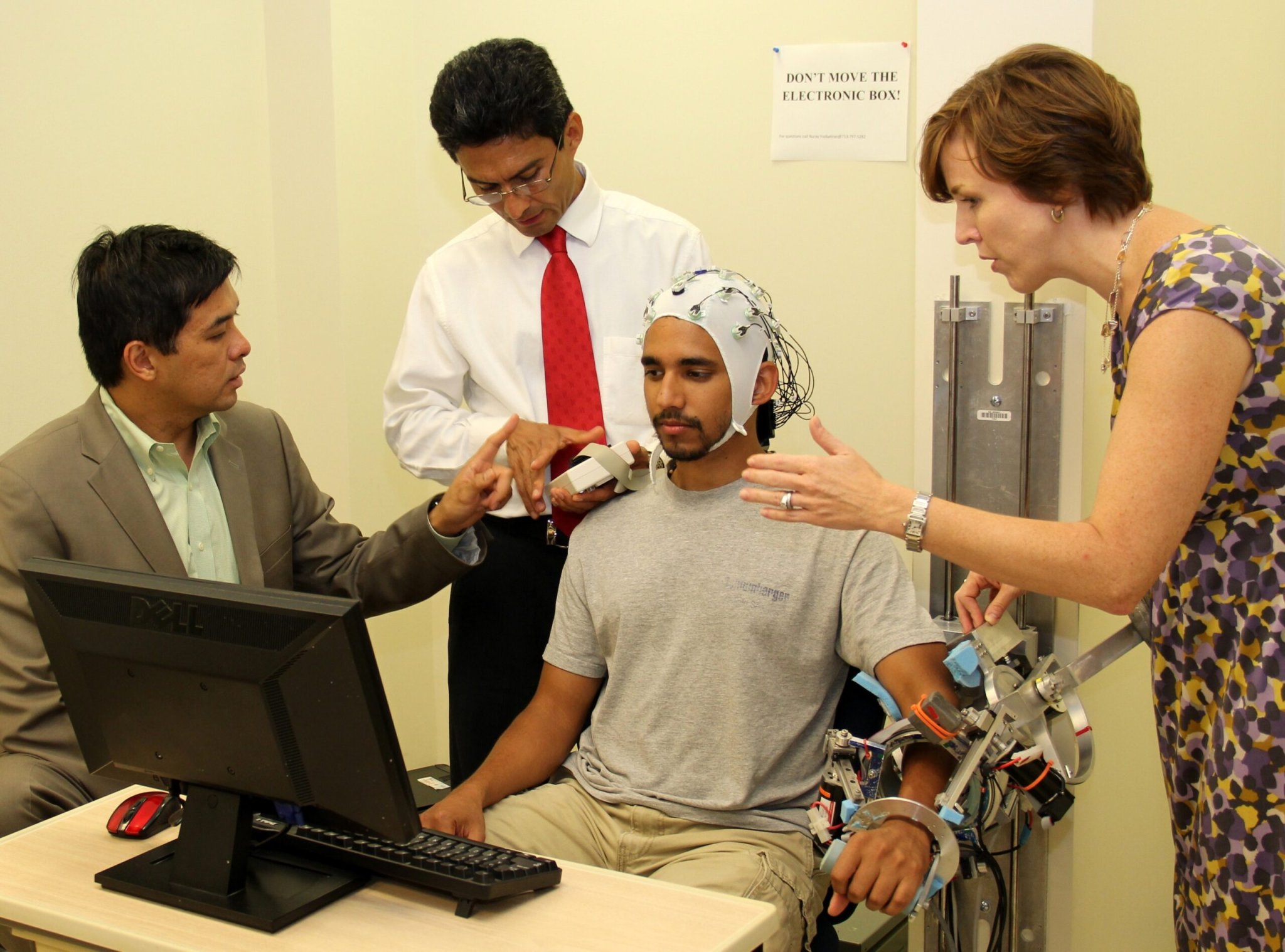

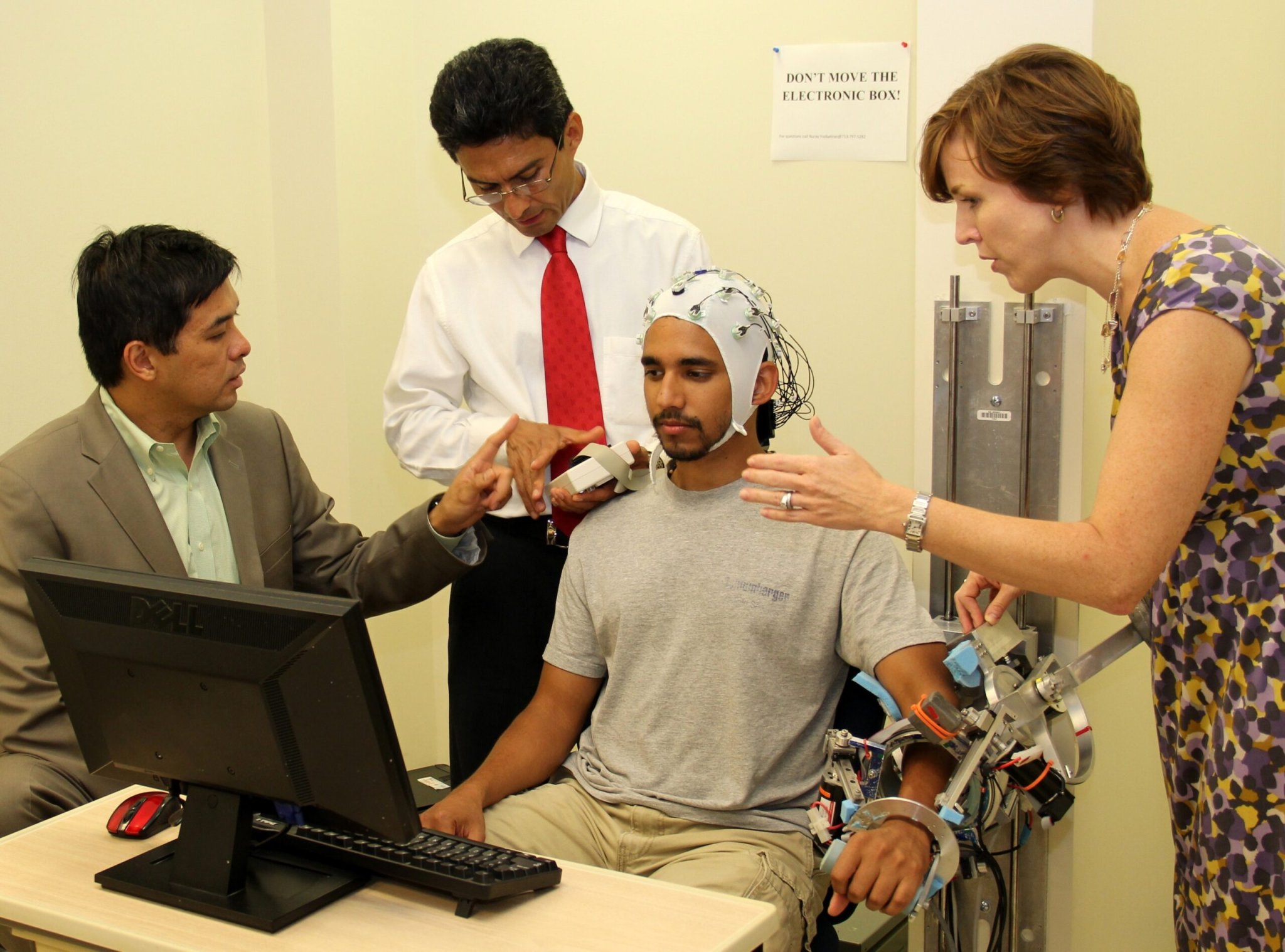

Patients who have suffered a stroke or other injury can lose the active use of their limbs, leaving them unable to simply think about moving an arm or hand and then do it. With time and repetitive physical therapy, it is sometimes possible to re-establish the lost connection. Researchers at Rice University are using a robotic exoskeleton and a neural interface to help that process along.

Unlike neural interfaces that are implanted in the brain, this system uses an electroencephalograph (EEG), which monitors waves of brain activity through electrodes on the scalp. It will work by first recording the brain activity of healthy subjects and turning those signals into a control output that an exoskeleton can understand. Then the system will be further trained with stroke patients who have some ability to initiate movements. The goal is to develop control patterns that can work on any patient, even those with no ability to initiate willful movements. The robot would interpret a person’s brain activity and translate it into the right motion, performing the movements over and over again to retrain the patient’s motor pathways.
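To make the idea of turning brain signals into a control output concrete, here is a minimal sketch in Python. It is not the Rice team's method: it assumes each EEG recording has already been reduced to a short list of numeric features, and it uses a simple nearest-centroid classifier as a stand-in for whatever decoder the researchers actually use. The movement labels, feature values, and function names are all hypothetical.

```python
from math import dist
from statistics import fmean


def train_centroids(recordings):
    """Average the example feature vectors for each movement label.

    recordings: dict mapping a movement label to a list of feature vectors
    (hypothetical summaries of EEG activity, all the same length).
    """
    centroids = {}
    for label, vectors in recordings.items():
        # zip(*vectors) groups the values feature by feature.
        centroids[label] = [fmean(column) for column in zip(*vectors)]
    return centroids


def decode(centroids, features):
    """Return the movement label whose centroid is closest to the features."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


# Made-up two-feature examples standing in for recordings from healthy subjects.
healthy_recordings = {
    "rest": [[0.1, 0.2], [0.2, 0.1]],
    "flex_elbow": [[0.9, 0.2], [1.0, 0.3]],
    "extend_elbow": [[0.2, 0.9], [0.3, 1.0]],
}

centroids = train_centroids(healthy_recordings)

# A new, unlabeled reading is mapped to the command the exoskeleton would run.
command = decode(centroids, [0.8, 0.3])
print(command)
```

In this toy version, the "further training" step described above would amount to adding stroke patients' recordings to the training data and recomputing the centroids. A real system would need far richer features and a decoder validated on actual EEG data.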

“With a lot of robotics, if you want to engage the patient, the robot has to know what the patient is doing,” said principal investigator Marcia O’Malley, an associate professor of mechanical engineering and materials science at Rice. “If the patient tries to move, the robot has to anticipate that and help. But without sophisticated sensing, the patient has to physically move – or initiate some movement.”

The researchers have already reconstructed three-dimensional hand and walking movements from brain signals, according to a Rice news release. Now a $1.17 million grant from the National Institutes of Health and the president’s National Robotics Initiative will fund tests of the system on 40 patients over the next two years.

[via Neurogadget]