Micro air vehicles, or MAVs, are a tantalizing option for intelligence and surveillance agencies looking to surreptitiously gather information or deliver surveillance devices. But MAVs, usually modeled after small birds or insects, are notoriously unstable in flight and difficult to maneuver in cluttered environments. So the Pentagon is handing out research contracts to make the DoD’s little robotic bugs more stable by making them more bug-like. Specifically, the DoD wants big bulging bug eyes and hairy wings for its MAVs.

The main problem with MAVs has to do with the way they respond (or don’t respond) to dynamic environments: things like shifting or gusting winds, moving bodies, and other variables that have to be accounted for in real time. MAVs are tiny, so there’s not a lot of space for computing assets or sensor payloads, and that leads to a seemingly intractable problem: how can engineers make these things smaller and more capable while also adding situational awareness and better in-flight processing?

When facing a tough problem like this, a little biomimicry never hurts, and that’s exactly where the Pentagon is looking with its recent contracts. If two research stipends recently handed down are any indication, the micro-drones of the future may have tiny hair-like sensors all over their bodies and big, compound eyes.

The cilia-like hairs will serve to keep the drones stable in hover and in flight by sensing changes in air flow at the smallest scales. That means a drone could sense a wind gust shortly before it arrives, allowing it to compensate for the change. The hairs would also aid in maintaining overall stability during flight, as the MAV’s central processor would possess a constant awareness of, and the ability to manipulate, the boundary layer of air surrounding the drone as it hovers and flies.
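To make the idea concrete, here is a minimal sketch of why sensing a gust directly helps. It is not the contractors' design: the one-dimensional hover model, the 10-gram mass, the controller gains, the gust size, and the assumption that the hairs capture 90 percent of the gust force are all hypothetical. It also simplifies by sensing the gust as it hits rather than slightly ahead of time. The comparison is between a drone that only reacts once it has already drifted and one that also pushes back against the airflow it feels.

```python
def simulate(use_hair_sensors, steps=400, dt=0.005):
    """Simulate a 1-D hover through a gust; return the peak drift in meters."""
    mass = 0.01            # kg, hypothetical 10 g vehicle
    kp, kd = 2.0, 0.1      # hypothetical feedback gains on position and velocity
    pos, vel, peak = 0.0, 0.0, 0.0
    for i in range(steps):
        t = i * dt
        # Hypothetical gust: a constant 0.02 N push lasting half a second.
        gust = 0.02 if 0.5 <= t < 1.0 else 0.0
        # Feedback alone reacts only after the drone has moved.
        thrust = -kp * pos - kd * vel
        if use_hair_sensors:
            # Feedforward: counter the airflow the hairs report.
            sensed = 0.9 * gust   # assumption: sensors capture 90% of the gust
            thrust -= sensed
        acc = (thrust + gust) / mass
        vel += acc * dt
        pos += vel * dt
        peak = max(peak, abs(pos))
    return peak


if __name__ == "__main__":
    print("peak drift, feedback only:   ", simulate(False))
    print("peak drift, with hair sensors:", simulate(True))
```

In this toy model the drone with flow sensing drifts far less, because most of the gust is cancelled before it can move the vehicle. A real MAV would have to cope with sensor noise, delays, and three-dimensional flow.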

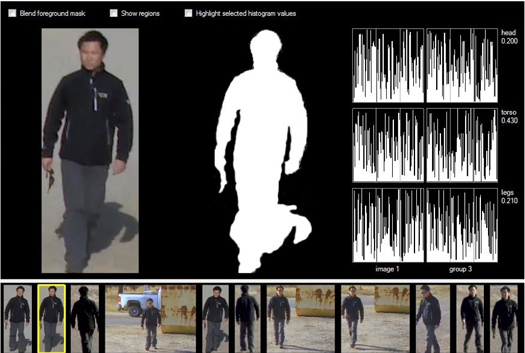

The bug-like compound eyes would similarly help MAVs navigate in cluttered spaces by increasing the amount of visual data available to the drones’ processors. An on-board minicomputer would process images in real time, using those visual cues to automatically avoid obstacles and navigate cleanly and efficiently.