Things from space can have quite the impact on the lifeforms trying to chill here on Earth. The most obvious example of this is the meteor impact that casually wiped out three-fourths of the planet’s plant and animal life. Grim stuff. But sometimes, all that space gobbledygook does something much weirder. In a new paper published in the Journal of Geology, scientists suggest a series of distant supernovae (stars that exploded millions of years ago) may have helped encourage early human ancestors to learn how to walk upright.

You can read that part again if you’d like, but it’s not going to get less bizarre. How is it that a bunch of stars catastrophically exploding somewhere else could possibly lead to bipedalism in human ancestors? Let’s take this step by step.

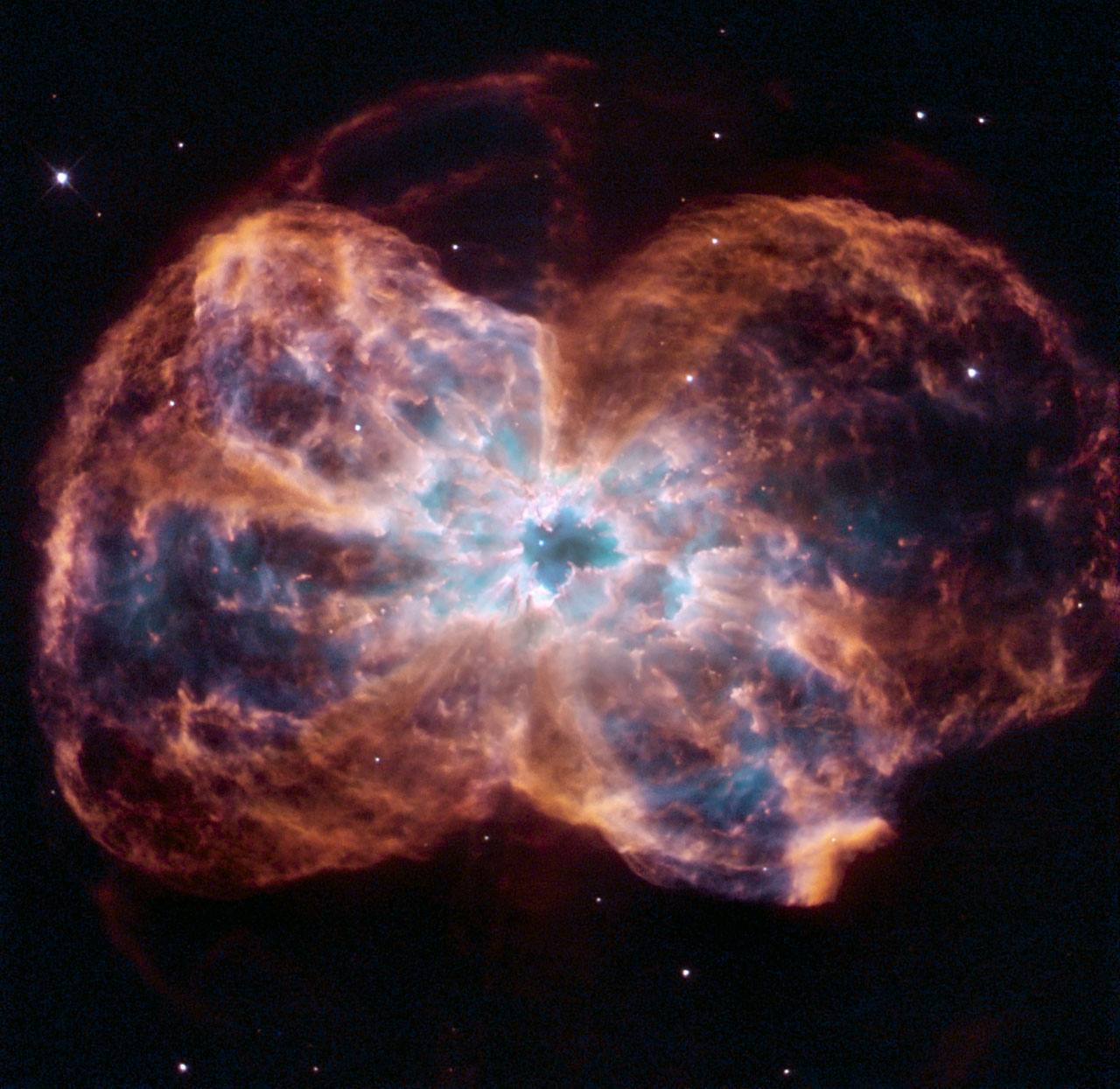

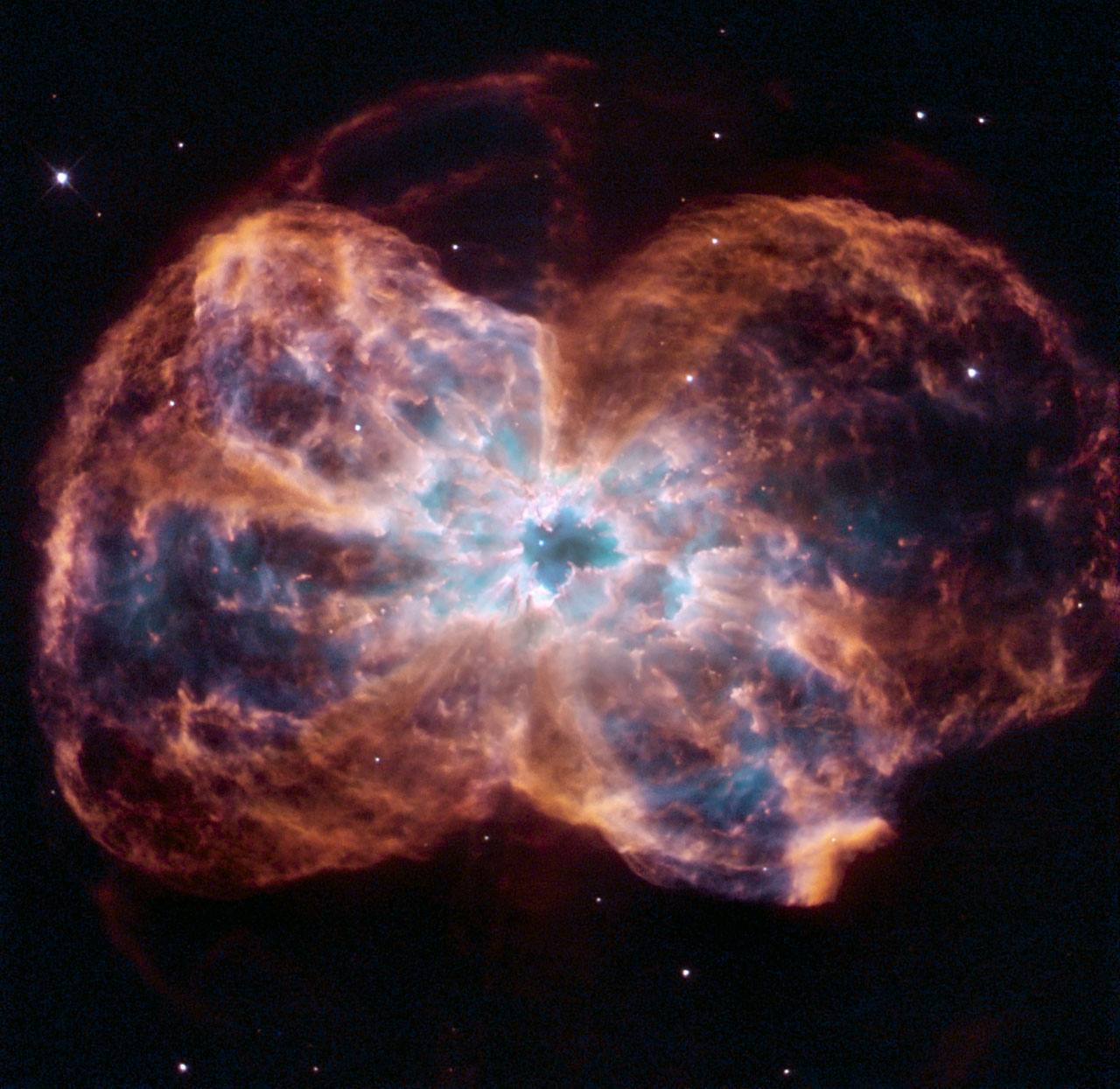

Adrian Melott, an astrophysicist from the University of Kansas and lead author of the new paper, has been studying supernovae for much of his career. But just a few years ago, researchers found iron-60 deposits around the globe hinting that a particular group of supernovae had exploded from a shared distance, during a shared timeframe. We can use the deposits to determine that the rays from these supernovae began hitting Earth as long as 8 million years ago, and that energy peaked as recently as 2.6 million years ago. For Melott and his colleagues, this timeframe matches up with activity originating about 123 light-years away, in a region called the Local Bubble, which contains hot gas that looks like it was blown out by a series of supernovae. The original stars that blew up were probably nine times the mass of the sun.

“We decided to ask the question: what are the results or likely consequences of these supernovae?” says Melott.

The effects of supernova explosions on the Earth itself haven’t been very extensively studied. The first papers looking into this question were published in the 1950s, focusing on whether nearby explosions could have caused or encouraged mass extinction events on the planet. What’s new in Melott’s paper, however, is a focus on whether moderately distant supernovae may also have had environmental impacts that affected life on the planet.

And there should be some effect, right? A supernova emits cosmic rays, pretty much like every other celestial explosion does, and this energy can have a very physical impact, like inducing temperature fluctuations. What’s more, scientists calculate that supernovae discharge cosmic rays boasting energies of up to a quadrillion electron volts. That’s 15 zeros! Garden-variety cosmic rays don’t really exhibit energies past a billion electron volts (nine zeros). “These are a million times more energetic than the sort of cosmic rays we’re usually bombarded by,” says Melott.

Melott and his coauthor Brian Thomas from Washburn University in Topeka, Kansas, began to suspect these cosmic rays might have been powerful enough to ionize (electrically charge) the particles in Earth’s atmosphere all the way down to the surface. “That’s not normal,” says Melott. “Things normally peter out by stratospheric altitudes. But since these rays go right to the ground, they ionize particles throughout, which means more cloud-to-ground lightning.” Ionizing the atmosphere provides more free electrons and ions, which create a conductive pathway for lightning to start. As a result, you would expect as much as 50 times more lightning to strike as a direct consequence of these supernovae.

The pair ran some computational models that bore out this behavior, which leads us back to the paper’s bizarre theory. Melott and Thomas believe a surge in lightning strikes caused by these supernovae may have caused a spate of wildfires (evidenced by an increase in soot and carbon deposits corresponding with this time period). These wildfires may have been responsible for burning down many forests in Northeast Africa, converting them into grassier savannas. And in recent years, it’s been hypothesized that the conversion of these areas into savannas may have encouraged bipedalism in ancestral hominid species. With trees sparsely dotting the landscape, bipedalism would have been a more energy-efficient way of navigating from place to place. With no trees to perch from, standing up would have at least allowed hominins to get a glimpse of any predators lurking in the tall grass.

Unfortunately, it’s not a theory that fits too neatly into what we already know about human evolution. “It’s clear to me this was written by people who are not working on the fossil record directly,” says Ashley Hammond, an assistant curator of biological anthropology at the American Museum of Natural History. “Those that have worked in the fossil record will tell you that 2.6 million years ago, when these supernovae peaked, bipedalism had been well-established.” The origins of bipedalism stretch back closer to 4.4 million years ago, about two million years prior to the time period when Melott and Thomas say this series of supernovae reached its peak.

“Bipedal origins are actually very old,” says Hammond. “They’re the first real hallmark of a human lineage, a hominid lineage. Trying to link something happening at 2.6 million years ago is a little bit of a stretch.” Hammond thinks if one wanted to connect the supernovae to evolution, it would have made more sense to link them not to hominid bipedalism, but instead to the appearance of the genus Homo. Our genus’s earliest appearance in the fossil record clocks in at 2.8 million years ago, and it can be found in greater abundance from about 2 million years ago.

Moreover, we still don’t really know what precise factors precipitated the rise of bipedalism in human ancestors. “It’s still pretty heavily debated,” says Hammond. The savanna hypothesis is one of several explanations scientists discuss, and it’s likely a contributing factor. But there are also questions surrounding when exactly African landscapes began to lose their trees and morph into tall grasslands, and to what extent wildfires actually spurred that process.

Melott is the first to emphasize that he and Thomas are not paleontologists. He calls the paper’s ideas a “theoretical expectation,” and says he sees the findings more as a contribution to a larger effort to come up with a stronger theory for the formation of lightning, which he says is “not well understood.” Ironically, that also raises one of the paper’s primary limitations: if we don’t understand the initiation of lightning very well, it’s not quite clear whether these supernovae really did create a spike in lightning strikes capable of scorching the Earth and burning down all these forests. “Our whole idea is strongly dependent on the idea that cosmic rays will seed lightning,” says Melott.

There’s no question the authors have drawn a pretty interesting connection here. But the consensus is clear: if we’re going to really investigate whether supernovae in the Local Bubble are the reason we walk upright, we’re going to need some more tangible evidence.