There are plenty of ways to improve the quality of your video calls, but fancy microphones and elaborate lighting setups can only help so much. Nvidia, which makes graphics processing units (GPUs), recently released a platform called Maxine that offers AI-powered upgrades to video calls, some of which straddle the line between creepy and amazing.

Maxine processes data in the cloud rather than on consumer devices, so if a streaming platform has it enabled, users get the benefit of the advanced features without needing a computer or smartphone powerful enough to do the processing. At a very basic level, this kind of off-device computing is the same idea that allows services like Google Stadia to stream high-end PC gameplay in real time to smartphones.

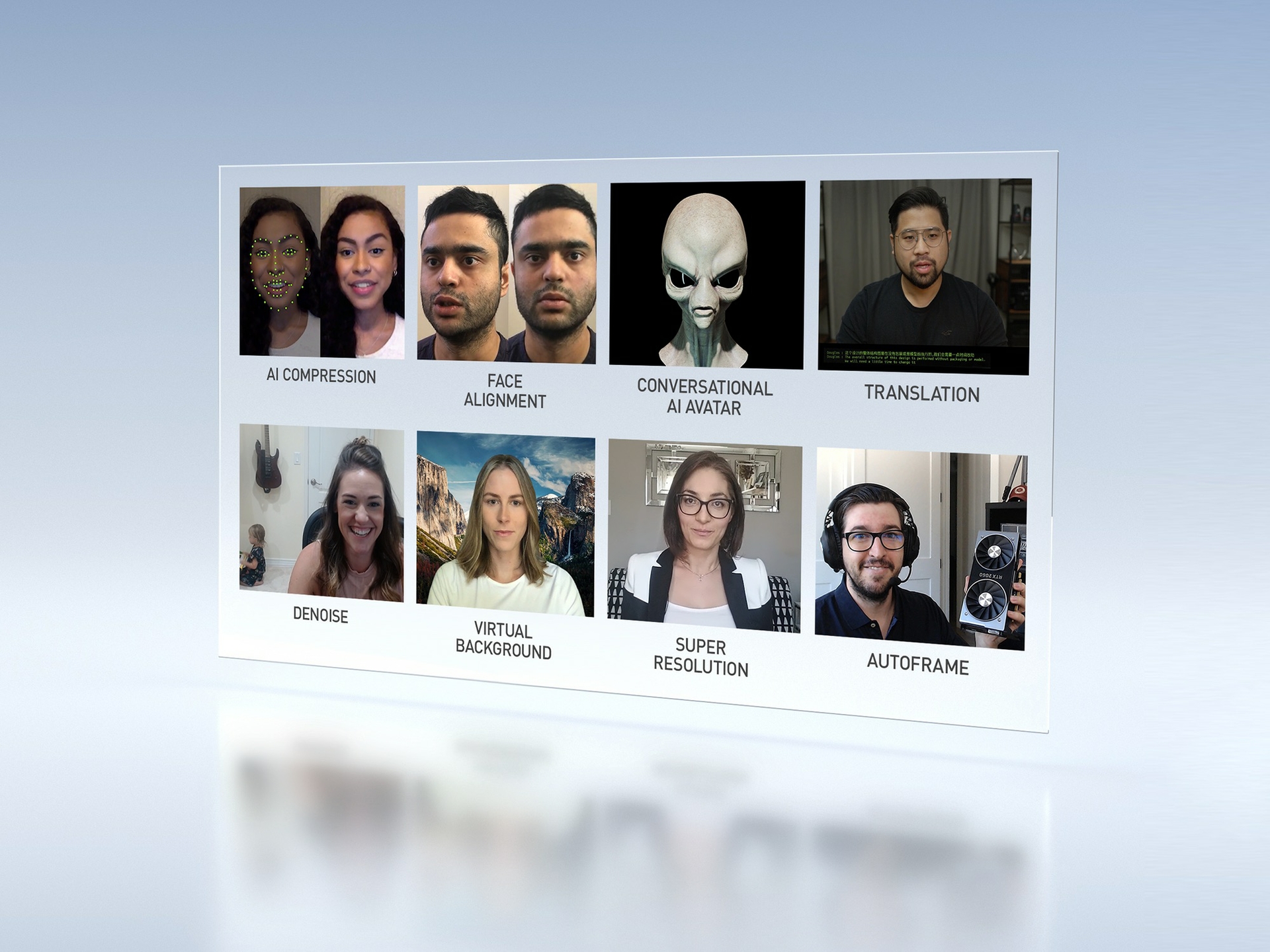

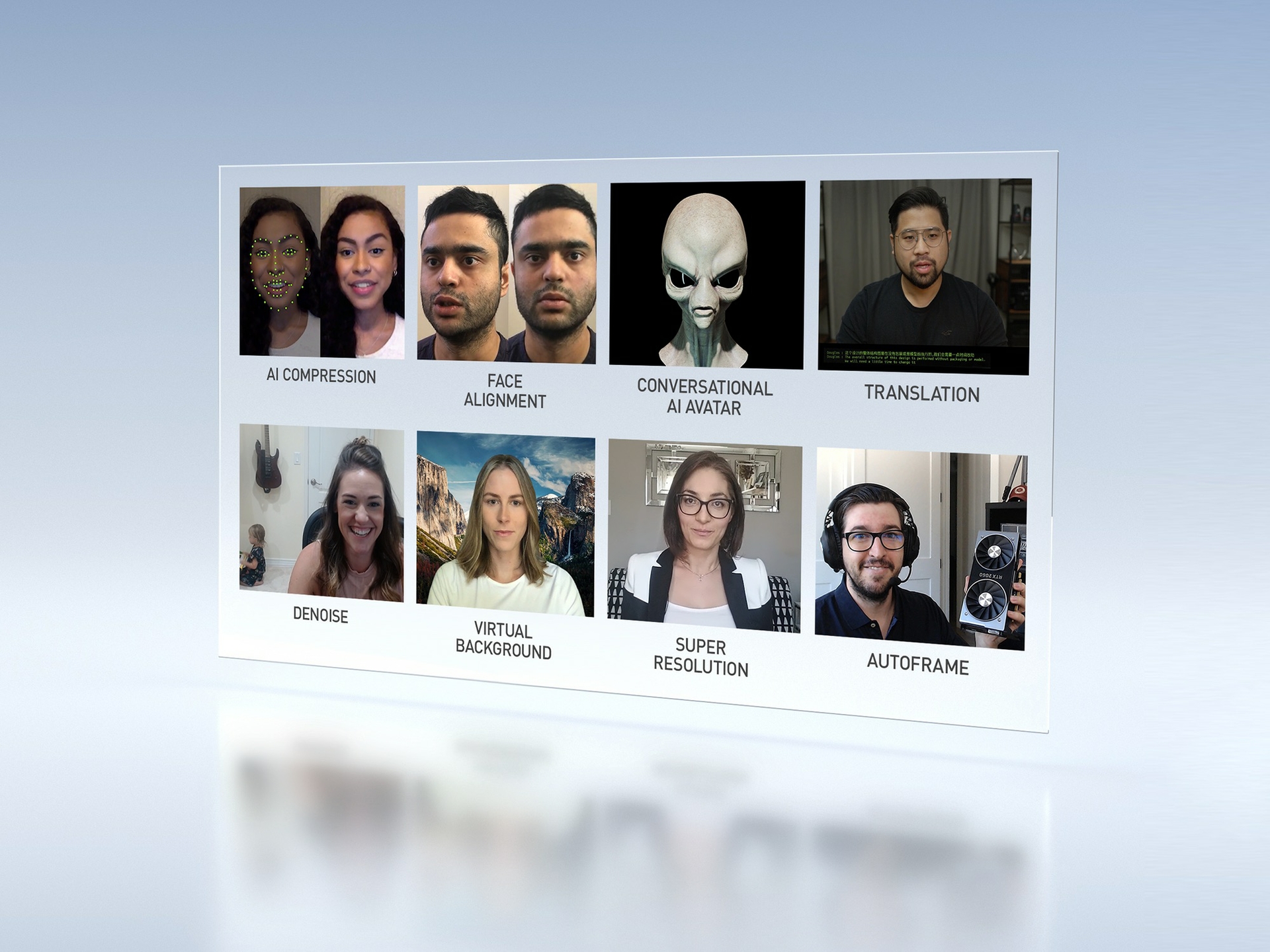

Nvidia’s platform has a variety of useful or fun applications built into it, but the key element is its ability to reduce the bandwidth required by the estimated 30 million or so video calls that happen every day. Typically, web meetings involve moving a continuous stream of video. Maxine, however, recognizes key points on your face and recreates them on a viewer’s screen, using AI-driven animation techniques to fill in the missing pieces. Because the platform doesn’t have to stream every pixel of every frame, Nvidia claims Maxine can cut the bandwidth a video call requires to about one-tenth of the usual amount.
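To get a rough feel for why sending key points is so much lighter than sending pixels, here is a back-of-the-envelope sketch in Python. Every number in it is an assumption chosen for illustration (a 1280×720 frame, 68 facial key points, two 32-bit coordinates per point); none of them come from Nvidia, and the function names are hypothetical.

```python
# Illustrative arithmetic only. These values are assumptions, not Nvidia's figures.
FRAME_WIDTH = 1280       # assumed frame width in pixels
FRAME_HEIGHT = 720       # assumed frame height in pixels
BYTES_PER_PIXEL = 3      # uncompressed 8-bit red, green, and blue values
NUM_KEYPOINTS = 68       # hypothetical number of facial key points
BYTES_PER_COORD = 4      # one 32-bit float for each x or y value


def raw_frame_bytes(width, height, bytes_per_pixel=BYTES_PER_PIXEL):
    """Size of one uncompressed frame in bytes."""
    return width * height * bytes_per_pixel


def keypoint_frame_bytes(num_keypoints=NUM_KEYPOINTS,
                         bytes_per_coord=BYTES_PER_COORD):
    """Size of one frame's worth of (x, y) key points in bytes."""
    return num_keypoints * 2 * bytes_per_coord


frame_size = raw_frame_bytes(FRAME_WIDTH, FRAME_HEIGHT)
points_size = keypoint_frame_bytes()

print(f"Uncompressed frame: {frame_size:,} bytes")
print(f"Key points only:    {points_size:,} bytes")
```

Real video calls send compressed video rather than raw frames, so the gap in practice is far smaller than these raw numbers suggest, and they should not be read as a check on Nvidia’s tenfold claim. The sketch only shows the general idea: a handful of coordinates is a much smaller package than a full grid of pixels.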

This animation process is similar to what you’ll find powering deepfake apps, like those that can stick your mug onto an actor in a clip from a movie. Using this tech, Maxine could create a smoother viewing experience for whoever is on the receiving end of the call. Typically, when connection speeds choke during a regular video call, the video drops frames and the person appears frozen. Because Maxine relies only on small amounts of transmitted facial data, the animated image could still move smoothly during the brief interruption.

The AI can take that facial data beyond simple streaming, too. The Face Alignment tool can make it appear as though the speaker is looking straight into the camera, even if they’re looking in a slightly different direction. The demo is slightly unnerving because you can see the transformation happening in real time, but if you joined a call and the other person already had the technology enabled, you may not notice, especially if you’re trying to look into the camera yourself.

Maxine also provides other AI-based tech, like real-time translations and realistic Memoji-style on-screen avatars, but they don’t have the same potential impact as the bandwidth reduction features.

Maxine won’t be an app you can download yourself. It’s a platform meant for developers and manufacturers to build into their products. Right now, companies can apply for early access to the tech, and it’s likely we’ll see others attempting similar feats to reduce bandwidth usage. After all, it looks like we’re going to be having a lot more video meetings for the foreseeable future.