Chips are in everything: smartphones, supercomputers, remote-sensing robots. Now, MIT engineers have created an electronics chip design that allows sensors and processors to be easily swapped out or added on, like LEGO bricks. A reconfigurable, modular chip like this could be useful for upgrading smartphones, computers, or other devices without producing as much waste. It could also be useful for artificial intelligence applications. Their paper describing the tech was published this week in the journal Nature Electronics.

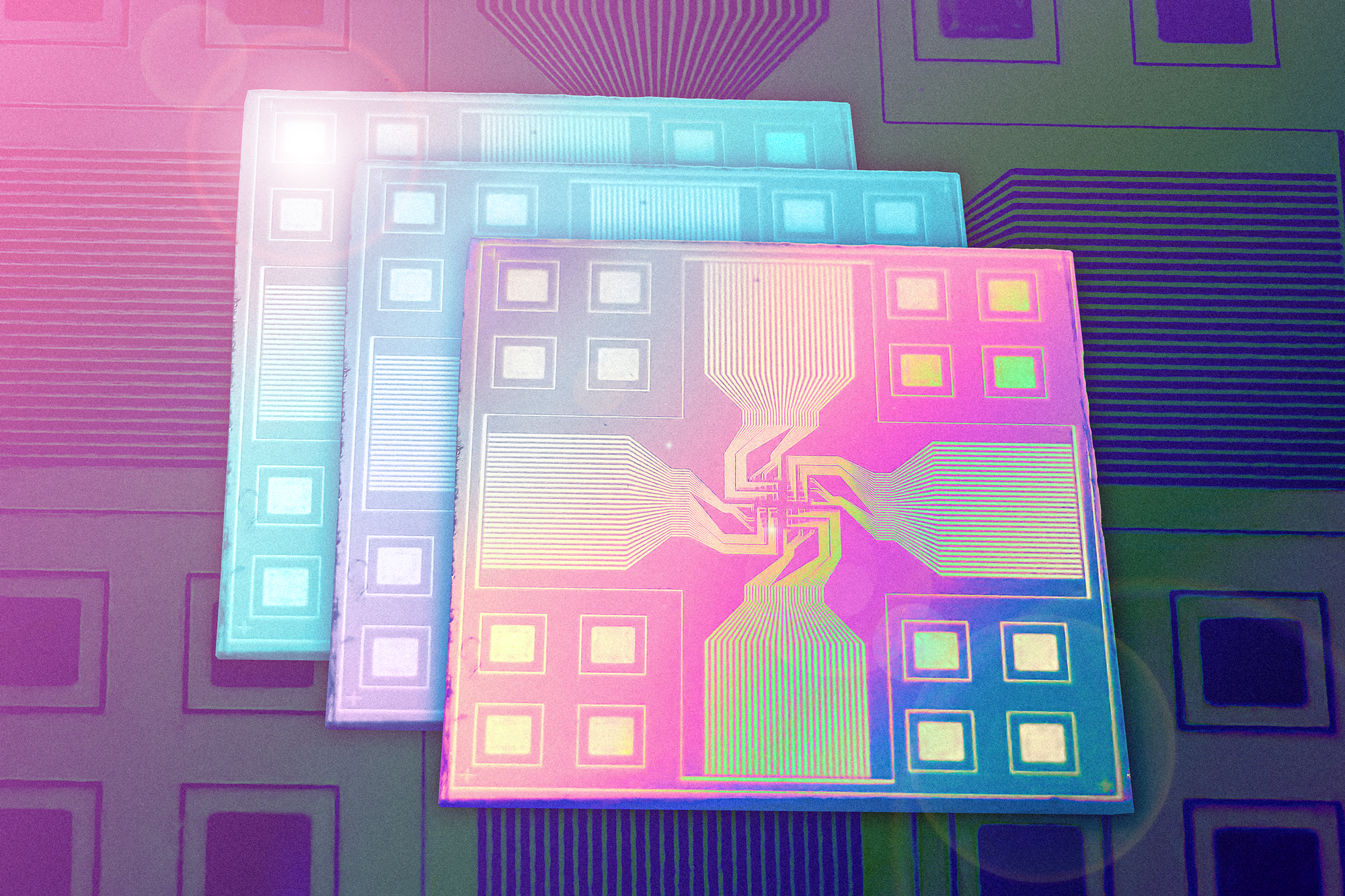

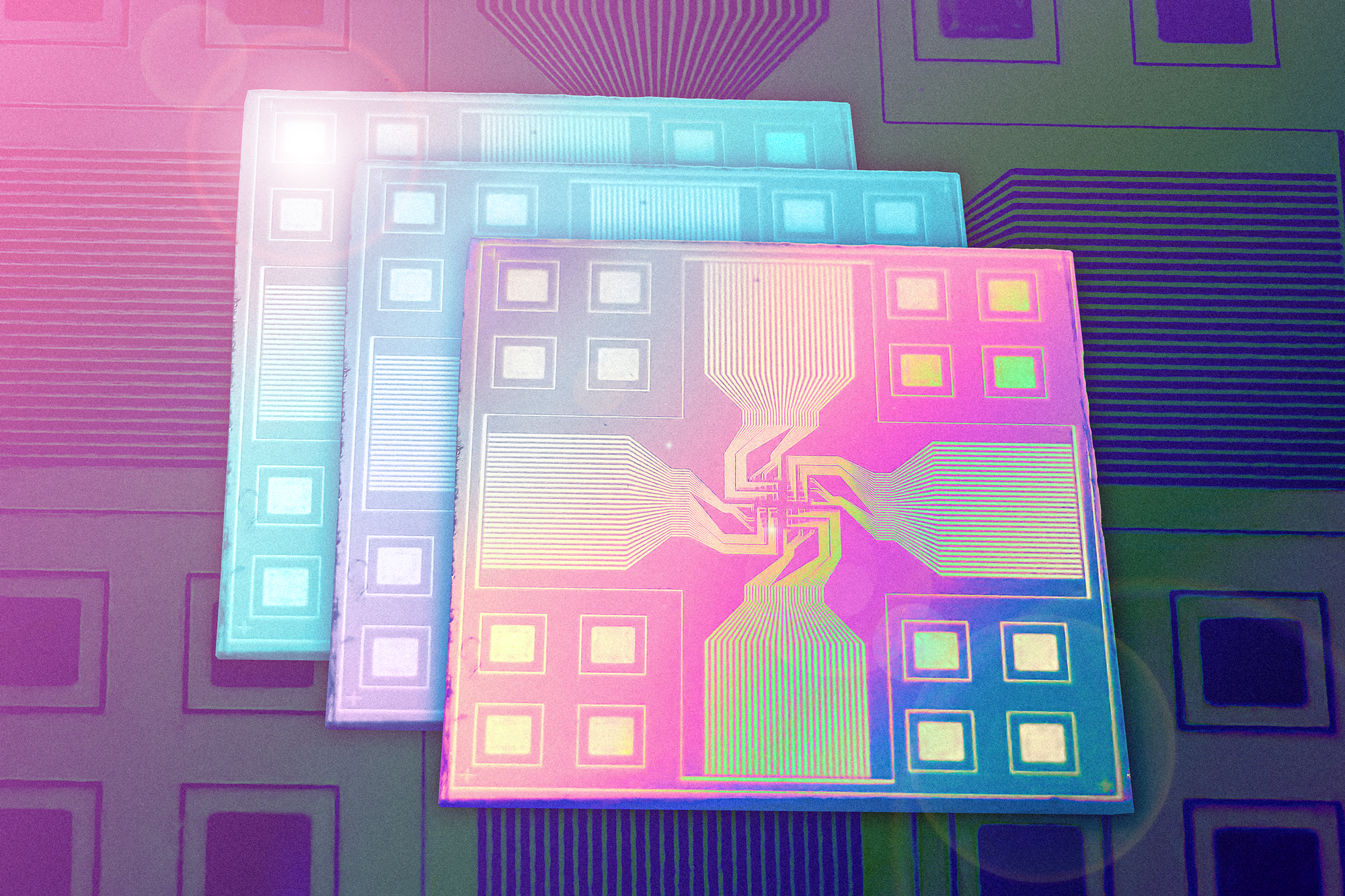

Here’s how the chip is configured. It has alternating layers for sensing and processing. Instead of relying on copper wires, the layers of the chip communicate with one another through optical signals, specifically light from light-emitting diodes (LEDs). These two features allow various elements on individual layers to be easily interchanged with other elements.

“As we enter the era of the internet of things based on sensor networks, demand for multifunctioning edge-computing devices will expand dramatically,” Jeehwan Kim, associate professor of mechanical engineering at MIT, said in a press release. “Our proposed hardware architecture will provide high versatility of edge computing in the future.” (Edge computing refers to electronics that can process data independently without having to connect to a central server.)

To test how the chip performs on simple tasks, the team made a prototype with image sensors, LEDs, and a processor containing “artificial brain synapses,” components made of silicon, silver, and copper that mimic how the brain transmits information (the team also calls these memristors). Instead of just transmitting information in binary (as 0 or 1), a memristor produces an output current whose strength depends on the strength of the incoming current. This allows it to take on a range of values based on signal strength. It also consistently remembers which value is associated with which signal strength, so calculations stay consistent. A connected circuit, or array, of these artificial synapses could directly process and classify signals on-chip.
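
To make the idea of a memristor array concrete, here is a minimal Python sketch. It is a textbook-style idealization, not the MIT team's design: it assumes each memristor behaves like a stored conductance, that inputs arrive as voltages on the rows, and that each column simply adds up the resulting currents. The function name `column_currents` and all of the numbers are made up for illustration.

```python
# Toy model of a memristor array. All values are illustrative assumptions,
# not measurements from the prototype described in the article.

def column_currents(voltages, conductances):
    """Return the summed output current of each column.

    voltages: one input value per row of the array.
    conductances: conductances[row][col], the analog value each
        memristor "remembers".
    Each column's output is the sum of voltage * conductance over all rows.
    """
    n_cols = len(conductances[0])
    return [
        sum(v * row[col] for v, row in zip(voltages, conductances))
        for col in range(n_cols)
    ]


# Three input rows feeding two output columns.
conductances = [
    [0.5, 0.25],
    [0.0, 1.0],
    [0.25, 0.25],
]

weak = column_currents([1.0, 0.0, 1.0], conductances)
strong = column_currents([2.0, 0.0, 2.0], conductances)

print(weak)    # [0.75, 0.5]
print(strong)  # [1.5, 1.0]
```

Doubling the input doubles the output rather than flipping a bit from 0 to 1, which is the graded, non-binary behavior the paragraph above describes. Because the stored conductances do not change between runs, the same input always gives the same result.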

Researchers trained a version of the stacked chip to recognize the letters M, I, and T. (For MIT.) That chip had photodetectors that received the visual signal and passed it down to other layers, which encoded the image as a sequence of LED pixels and classified the signal based on the strength of incoming light. The researchers used laser light to shine different letters onto the chip, and it was usually able to recognize which letter it was given, although it did better with clearer and brighter images. The researchers later added a “denoising” processor that helped the chip recognize more of the blurry images.
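
A rough software analogy for this kind of brightness-based letter recognition is sketched below. It is only a toy: the 3-by-3 letter patterns, the scoring rule, the `threshold` value, and the helper names `render`, `score`, and `classify` are all assumptions made for illustration, and none of them come from the researchers' chip or paper. It also does not attempt to model the denoising processor.

```python
# Toy letter classifier. Patterns, scoring rule, and threshold are
# hypothetical and chosen only to illustrate the idea.

TEMPLATES = {
    "M": ["#.#", "###", "#.#"],
    "I": [".#.", ".#.", ".#."],
    "T": ["###", ".#.", ".#."],
}


def render(letter, brightness):
    """Build a 3x3 image of a letter, lit at the given brightness (0 to 1)."""
    return [
        [brightness if cell == "#" else 0.0 for cell in row]
        for row in TEMPLATES[letter]
    ]


def score(image, template):
    """Reward light where the template expects it, penalize light elsewhere."""
    on_pixels = sum(row.count("#") for row in template)
    total = 0.0
    for img_row, tpl_row in zip(image, template):
        for brightness, cell in zip(img_row, tpl_row):
            total += brightness if cell == "#" else -brightness
    return total / on_pixels


def classify(image, threshold=0.5):
    """Return the best-matching letter, or None if the signal is too weak."""
    scores = {letter: score(image, tpl) for letter, tpl in TEMPLATES.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] >= threshold else None


print(classify(render("T", 1.0)))   # T
print(classify(render("T", 0.25)))  # None
```

In this sketch a brightly lit letter clears the threshold and is recognized, while the same letter at a quarter of the brightness is not, loosely mirroring the observation that the chip did better with clearer and brighter images.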

The team imagines that this modular capability will allow them to add features like image recognition to smartphone cameras, or health monitoring sensors to electronic skins.

“We can make a general chip platform, and each layer could be sold separately like a video game,” Jeehwan Kim said. “We could make different types of neural networks, like for image or voice recognition, and let the customer choose what they want, and add to an existing chip like a LEGO.”