Researchers at the National University of Singapore are enhancing robots’ sense of touch by mimicking the ridged and contoured surfaces of human fingertips. Fingerprints, it turns out, don’t just give humans better grip but also carry out a sensitive type of signal processing. Imparting that same kind of signal processing to robots could reduce the processing load on their CPUs and help them better identify objects by their shapes.

Fingerprints provide a unique identifier and a better means to hold on to objects, but they also shape the ways we sense and perceive the world around us. When we touch something, the ridges alter the vibrations moving through our skin such that nerve endings can better receive them. This serves as a kind of signal processing that allows the skin in our fingertips to provide richer information to our central nervous system than skin on other parts of the body.

For robots, which generally do most of their processing in a single central processing unit, this distributed signal processing represents a distinct advantage. If robotic sensing surfaces could do more signal processing in the surfaces themselves, it would reduce the amount of processing taking place in the CPU, leaving more room there for other kinds of computing.

So the National University of Singapore team created a robotic touch sensor out of four force sensors on a single four-millimeter square plate. With a thin, flat plastic sheet covering the sensors, they recorded a series of force/touch measurements. They then repeated the measurements, this time covering the touch sensors with a ridged plastic sheet. They found that the ridged measurements were far richer in touch data, even helping the sensor identify the shape of the object being touched.

That all happens right at the “fingertips,” saving the central processor the trouble. It is a distributed solution that could greatly enhance robotic object recognition, and a nice piece of biomimicry as well. Read the paper at arXiv.

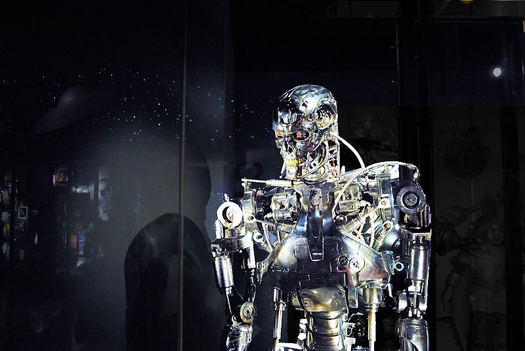
