
Smartphone cameras aren’t very good at taking flattering selfies. The wide-angle lenses introduce unpleasant distortion, and the small camera sensors can’t produce the blurry backgrounds we see in higher-end portraits. Of course, that doesn’t stop people from shooting tons of them. Roughly 24 billion selfies were added to the Google Photos service in 2016, according to the company. Most of them, we’re not ashamed to say, were garbage.

So, when Adobe showed off a “sneak peek” video in which a man makes a run-of-the-mill selfie look like a pro-grade (or at least enthusiast-level) portrait, it seemed like magic. In fact, most of the retouching technology on display already exists in Adobe’s arsenal. What’s new is that the company is leveraging artificial intelligence to bring those advanced capabilities into the one-tap world of smartphone photo editing.

The video shows an app similar to Adobe Fix, a retouching app currently available for both Android and iOS. While apps like Lightroom Mobile offer more advanced editing capabilities, Fix features more options for making spot-specific adjustments to an image. You can take out blemishes, adjust exposure, and crop, but Fix also offers some more advanced retouching features, such as a healing brush, most commonly associated with the desktop version of Photoshop. And best of all, you can use these tools without really knowing what you’re doing.

“All of these effects can be achieved with existing tools,” a spokesperson for Adobe Research said via email, “but by leveraging AI to automate the process, we’re expanding this functionality to a broader audience of photography enthusiasts.”

Adobe first announced its artificial intelligence initiative, called Sensei, at the Adobe MAX conference in 2016. The program promised a wide variety of new functions, developed with the help of the company’s massive stockpile of photos and usage information shared by users. The selfie “sneak peek” video illustrates a few of these AI-driven technologies and their possible real-world uses. Ultimately, the goal is to make a photo look like it was taken by someone with a high-end camera and some photographic knowledge, even if it’s just a quick snap.

Lens trickery

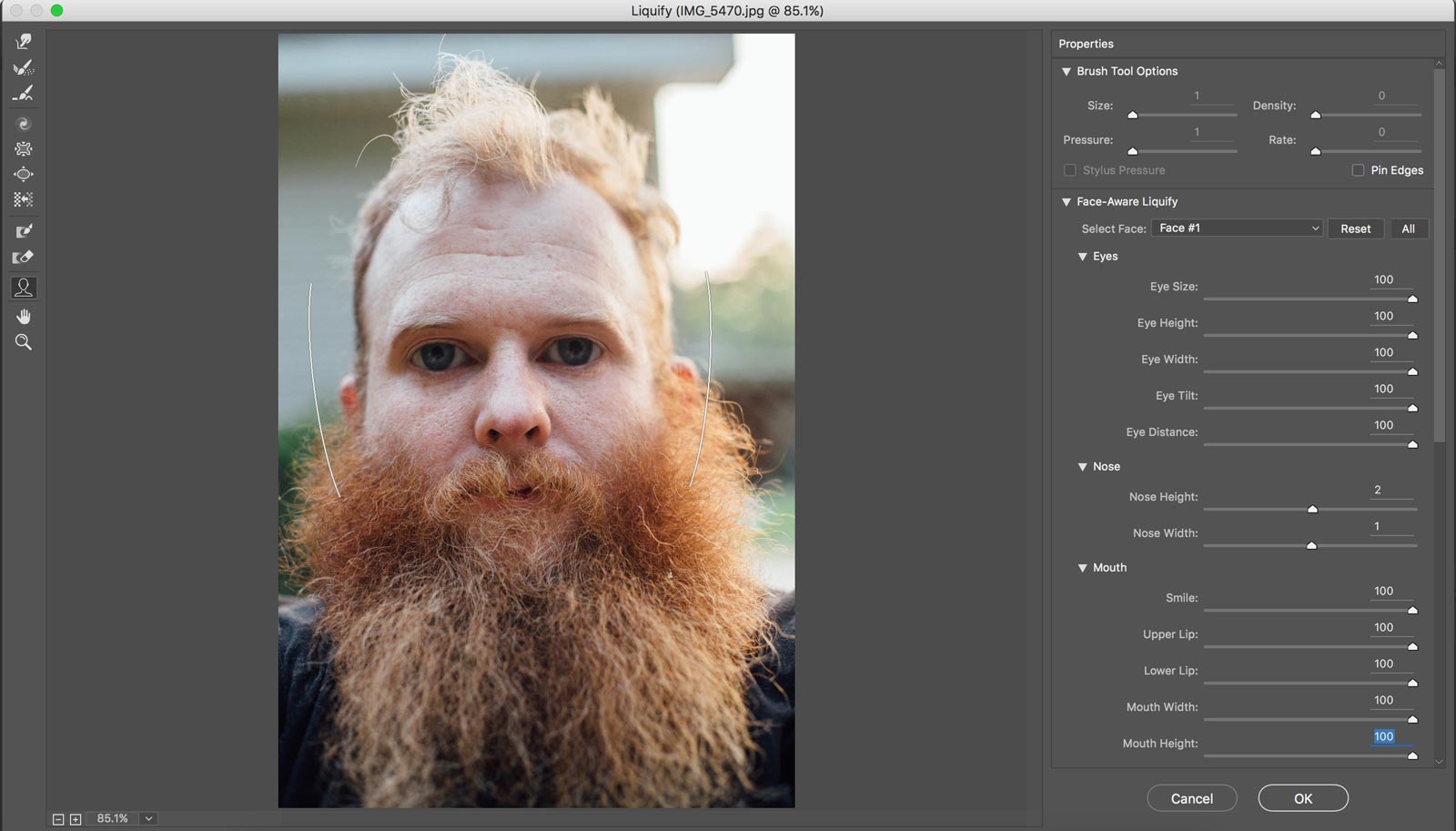

One of the biggest obstacles to good smartphone portraits is the phone’s wide-angle lens, which is designed to be flexible enough for a variety of situations, including lots of wide landscapes. For a headshot, a photographer would typically opt for a short telephoto lens because it introduces less distortion, but that’s not possible with a smartphone, even though Apple’s Portrait Mode on the iPhone 7 Plus is giving it a shot. To remedy this, Adobe uses an extension of its Liquify tool, which learned to recognize faces in Photoshop back in 2016. The mechanism can automatically detect facial features so they can be adjusted. Want bigger eyes or a shorter chin? This is how it’s done.

The video goes beyond editing individual facial features and actually tweaks them in unison to give the illusion that a telephoto lens was used. The whole face gets a more compressed look, which deemphasizes the nose and creates an appearance generally perceived as more flattering. The software has learned what the human face looks like and also understands the properties of different camera lenses.

“AI enables our algorithms to reason about things,” continued the Adobe Research spokesperson. In this case it’s asking: “What is the shape of this person’s head in three dimensions?”
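The "compressed" look described above comes down to simple perspective geometry: in an idealized pinhole camera, an object's rendered size is proportional to one over its distance from the lens. The short Python sketch below illustrates that idea only. The function name and the numbers (a nose roughly 10 cm in front of the ears, a 30 cm selfie distance, a 150 cm portrait distance) are assumptions chosen for illustration, not figures from Adobe.

```python
def relative_magnification(camera_to_nose_cm: float,
                           nose_to_ear_depth_cm: float = 10.0) -> float:
    """Return how much larger the nose renders relative to the ears.

    Under a pinhole model, projected size scales with 1 / distance, so the
    ratio is (distance to ears) / (distance to nose). The 10 cm face depth
    is an assumed, illustrative value.
    """
    if camera_to_nose_cm <= 0:
        raise ValueError("camera distance must be positive")
    return (camera_to_nose_cm + nose_to_ear_depth_cm) / camera_to_nose_cm


# Assumed shooting distances: arm's length selfie vs. a typical portrait setup.
selfie_ratio = relative_magnification(30.0)
portrait_ratio = relative_magnification(150.0)

print(f"selfie:   nose rendered {selfie_ratio:.2f}x larger than ears")
print(f"portrait: nose rendered {portrait_ratio:.2f}x larger than ears")
```

The closer the camera, the more the nose is exaggerated relative to the rest of the head. A telephoto portrait looks flatter mainly because the photographer stands farther back, and that is the effect the software would need to imitate once it has estimated the head's 3D shape.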

Blurring the background

Depth of field—the effect responsible for sharp subjects and blurry backgrounds—is another challenge for smartphone cameras, thanks in large part to their very small imaging sensors. In the current version of Fix, you can paint on the background blur using your finger like a brush. Using AI, however, the app tries to automatically recognize the subject of the photo, then adds blur in other areas. Adobe recently published some related research specifically about making complex selections with a single click. This differentiation between subject and background is something that several smartphone manufacturers like Apple and Samsung have been actively trying to build into their devices.
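Once the subject has been identified, the blurring step itself is conceptually simple: blur the whole image, then keep the original pixels wherever the subject is. The Python sketch below shows that compositing step on a tiny grayscale grid. It is not Adobe's implementation; the function names are hypothetical, the box blur stands in for a real lens-style blur, and the subject mask is supplied by hand where the app would get it from its recognition model.

```python
def box_blur(image, radius=1):
    """Blur a 2D grid of brightness values by averaging each pixel's neighbors."""
    height, width = len(image), len(image[0])
    blurred = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            total, count = 0.0, 0
            # Average over the square window, clipped at the image edges.
            for yy in range(max(0, y - radius), min(height, y + radius + 1)):
                for xx in range(max(0, x - radius), min(width, x + radius + 1)):
                    total += image[yy][xx]
                    count += 1
            blurred[y][x] = total / count
    return blurred


def blur_background(image, subject_mask, radius=1):
    """Keep pixels where subject_mask is True; use blurred pixels elsewhere."""
    blurred = box_blur(image, radius)
    return [
        [image[y][x] if subject_mask[y][x] else blurred[y][x]
         for x in range(len(image[0]))]
        for y in range(len(image))
    ]


# Toy example: one bright "subject" pixel in the middle of a dark frame.
image = [[0, 0, 0],
         [0, 90, 0],
         [0, 0, 0]]
subject_mask = [[False, False, False],
                [False, True, False],
                [False, False, False]]

result = blur_background(image, subject_mask)
for row in result:
    print(row)
```

The hard part, and the part the AI is doing, is producing an accurate mask. A sloppy mask leaves halos around hair and shoulders, which is why painting the blur on with a finger rarely looks convincing.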

Copying the colors

The last piece of the video shows our selfie shooter choosing an unrelated source photo and copying its style—its color, its brightness, the qualities of the lens used to shoot it—over to the selfie. The primary goal is to match the color scheme and tone. Adobe recently published a study with researchers from Cornell about this process, which is an extension of the Color Match utility that already exists in Photoshop. In this instance, the AI is analyzing the colors of the image—as well as the overall style—so it knows where to put each hue.
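For a rough sense of what matching the color scheme and tone can mean, one long-standing and much simpler approach is to shift and scale each color channel of the selfie so its average and spread match those of the reference photo. The Python sketch below does exactly that and nothing more. It is an illustration of basic statistics matching, not the method from the Adobe and Cornell research, and the function names and pixel values are made up for the example.

```python
from statistics import fmean, pstdev


def match_channel(target, reference):
    """Shift and scale one channel so its mean and spread match the reference."""
    t_mean, t_std = fmean(target), pstdev(target)
    r_mean, r_std = fmean(reference), pstdev(reference)
    # Avoid dividing by zero when the target channel is perfectly flat.
    scale = r_std / t_std if t_std > 0 else 1.0
    # Clamp to the usual 0-255 range for 8-bit color.
    return [min(255.0, max(0.0, (v - t_mean) * scale + r_mean)) for v in target]


def transfer_color(target_pixels, reference_pixels):
    """Apply match_channel to the R, G, and B channels of (r, g, b) pixels."""
    target_channels = zip(*target_pixels)
    reference_channels = zip(*reference_pixels)
    matched = [match_channel(t, r)
               for t, r in zip(target_channels, reference_channels)]
    return list(zip(*matched))


# Made-up pixels: a grayish selfie and a warm, orange-tinted reference photo.
selfie = [(100, 100, 100), (120, 110, 90), (140, 120, 80)]
reference = [(200, 150, 50), (220, 160, 60), (240, 170, 70)]

result = transfer_color(selfie, reference)
for pixel in result:
    print(tuple(round(c) for c in pixel))
```

A global adjustment like this treats every pixel the same way. The approach described in the video goes further by analyzing the image's content, so that skin, sky, and background can each receive the appropriate hue.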

Why do we need software to do this for us?

All of this is happening in a time when smartphone camera hardware has hit a bit of a plateau. Recently, Samsung updated the Galaxy series to the S8, which brought no real hardware updates to the main camera (even though the front-facing camera, which is typically used for selfies, did get a bump in image quality).

Apple’s dual-camera design helped enable its new portrait-shooting mode, but it suffers from some image quality challenges, including increased noise and some problems with camera shake due to the lens’s longer focal length.

The natural conclusion, then, is to focus more on software.

When you take a picture with a modern smartphone camera, an impressive amount of work already goes on behind the scenes. Before a photo shows up on the screen, it’s subjected to noise reduction, compression, and distortion correction to compensate for the very wide-angle lens. At one point, Apple claimed there were roughly 800 people dedicated to working on the iPhone’s camera.

Still, many photographers have been resistant to the idea of one-click or automatic fixes. But AI projects like this may help to change that.

“There will always be a tension between how automatic you can make an image editing task, and how much control you give to a photographer,” said Adobe’s representative, but the company still looks at these apps as tools photographers can use to make aesthetic decisions. “Ultimately, our algorithms don’t decide what looks good, it’s up to the individual to make that decision.”

AI can give photographers access to more tools used by professionals, but it still can’t replace a good eye—or, more importantly, good taste.