Each day, as we talk to friends, family, and coworkers, and consume podcasts, movies, and other media, we are ceaselessly bombarded by the spoken word. Yet somehow our brains piece together the meaning of these words, allowing us, in most cases, to go about our days seamlessly: understanding, remembering, and responding when necessary.

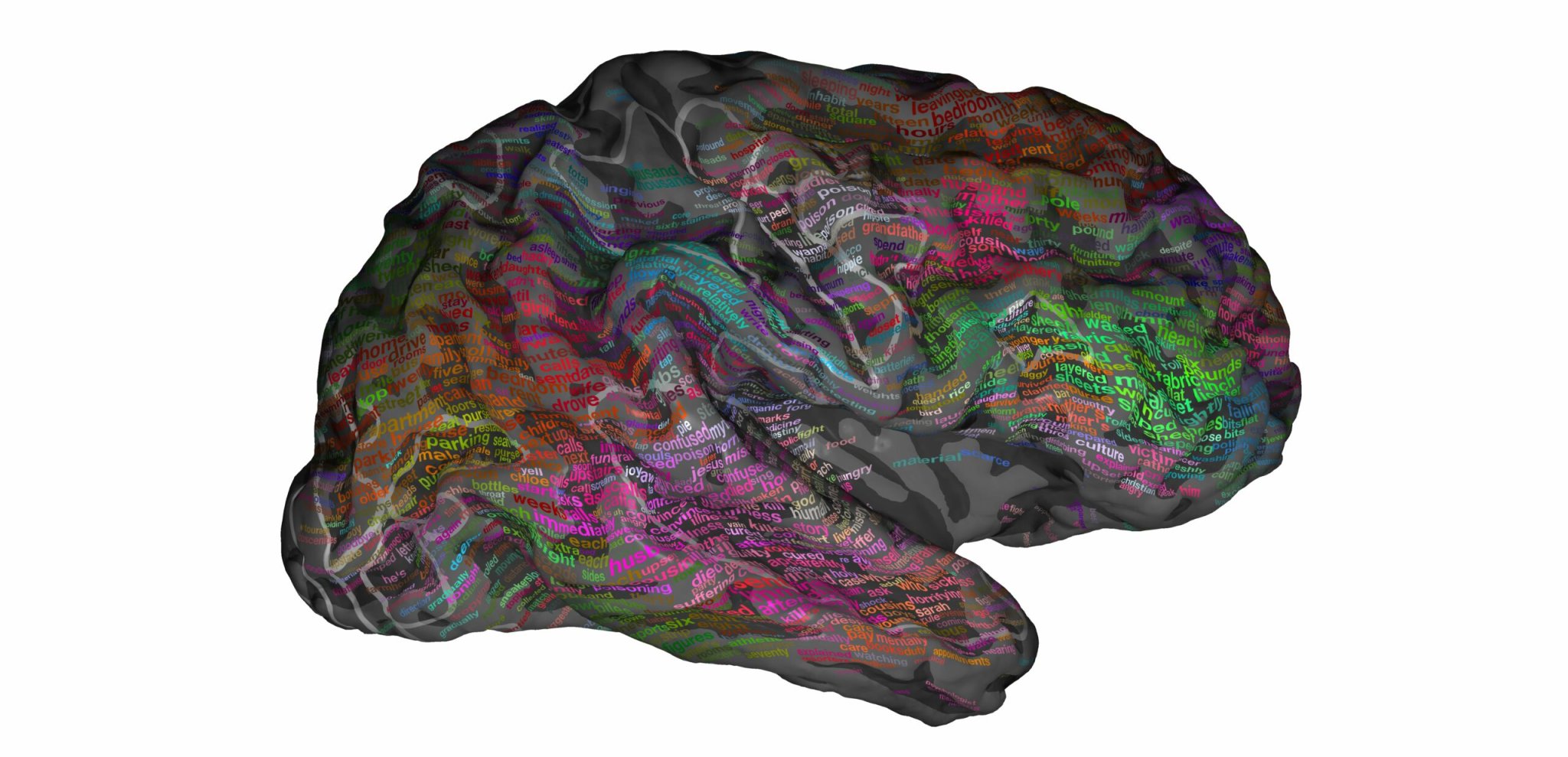

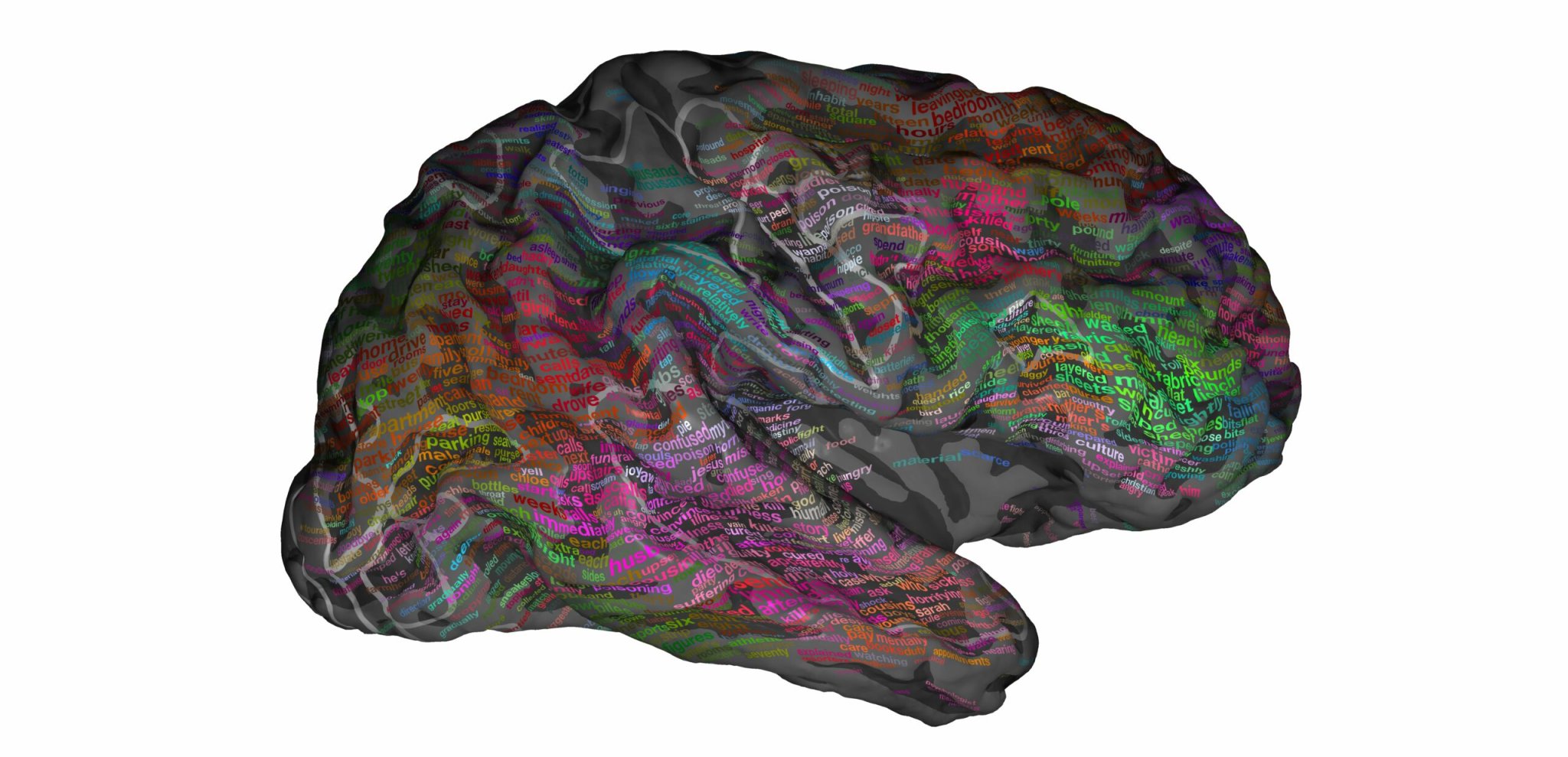

But what’s going on in our brains that allows us to understand this endless stream? A group of scientists set out to map how the brain represents the meaning of spoken language, word by word. Their results, published today in the journal Nature, not only provide a first-of-its-kind directory, or ‘semantic atlas’, showing how the meaning of language is grouped in the brain, but also show that we use a broad range of brain regions, challenging the belief that language is limited to a few brain areas and involves only the left hemisphere.

Semantics, the study of word meaning, focuses on the relationship between words and phrases and what they mean, including their connotations. Words like purchase, sale, item, store, card, and package, for example, all have something in common: you go to a store to purchase an item using your debit card. To understand semantics in the brain, previous studies presented single words or phrases and recorded how the brain responded.

But Jack Gallant, a neuroscientist at UC Berkeley, and his colleagues, including lead author Alex Huth, wanted to know how our brains map out a more natural, narrative story. They asked seven participants to listen to a few hours of The Moth Radio Hour, a storytelling program, while being scanned in an fMRI machine, which measures changes in blood flow and volume caused by the activity of neurons in the brain. This let the researchers see which areas of the brain lit up and when.

Afterwards, they used transcripts of the narratives along with the fMRI scans to do two things: first, to work out which words corresponded to the lit-up areas of the brain, and second, to create a model that predicts brain activity based on the words a person heard.
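To make the idea of a model that predicts brain activity from words more concrete, here is a minimal sketch in Python. It is not the researchers' actual code or method: it assumes a simple regularized linear (ridge) regression, and every name and number in it is hypothetical. The data are synthetic. Each row of `X` stands in for a moment in a story, each column for a made-up word feature (say, how strongly the words at that moment relate to people, places, or numbers), and each column of `Y` for the response of one small patch of brain. The sketch also ignores the delay between neural activity and the blood-flow signal that fMRI measures.

```python
import numpy as np

def fit_ridge(X, Y, alpha=1.0):
    """Closed-form ridge regression: W = (X^T X + alpha * I)^-1 X^T Y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ Y)

def voxel_correlations(Y_true, Y_pred):
    """Pearson correlation between measured and predicted responses, per column."""
    yt = Y_true - Y_true.mean(axis=0)
    yp = Y_pred - Y_pred.mean(axis=0)
    return (yt * yp).sum(axis=0) / (
        np.linalg.norm(yt, axis=0) * np.linalg.norm(yp, axis=0)
    )

# Synthetic stand-in data (assumption: nothing here comes from the study).
rng = np.random.default_rng(0)
n_train, n_test, n_features, n_voxels = 400, 100, 5, 3
true_W = rng.normal(size=(n_features, n_voxels))  # hidden word-to-brain weights

X_train = rng.normal(size=(n_train, n_features))  # word features, training stories
X_test = rng.normal(size=(n_test, n_features))    # word features, held-out story
Y_train = X_train @ true_W + 0.1 * rng.normal(size=(n_train, n_voxels))
Y_test = X_test @ true_W + 0.1 * rng.normal(size=(n_test, n_voxels))

# Learn weights from the training stories, then predict the held-out story.
W = fit_ridge(X_train, Y_train, alpha=1.0)
scores = voxel_correlations(Y_test, X_test @ W)
print(scores)  # one correlation per simulated brain location
```

In a sketch like this, the learned weights `W` describe which kinds of words drive each simulated location, and the correlation on a story the model never saw is one way to check whether its predictions hold up.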

An interactive model of how we understand language

By combining information from all seven participants with the help of a statistical model, the researchers created a brain atlas: a 3D model of the brain that shows which areas lit up at the same time across all the participants. They also published an interactive version of the atlas online.

What does the model tell us about language?

The researchers found that word meaning is distributed across the cerebral cortex, in 100 different areas that span both hemispheres. Looking at all the participants’ scans as a whole, they found that certain regions of the brain are associated with certain word meanings. For example, language about people tends to activate one area of the brain, language about places another, and language about numbers a third, demonstrating a basic organizing principle for how the brain handles different components of language.

While the results were quite similar across individuals, this doesn’t mean that the researchers have created a definitive atlas for language. First, the study looked at only seven participants, all from the same area of the world and all speakers of English. It also used just one source of input: a series of spoken, engaging narrative stories. The researchers are eager to learn how things like experience, native language, and culture will alter the map.

Further, Gallant says, the map could also change with the setting or with a person’s mental state: for example, if the person read the story instead of hearing it, or if, instead of listening to an engaging story, the person were tediously cramming for an exam. He plans to study how these factors alter the map in future research.

How will this map help?

Many brain disorders and injuries affect language areas in the brain, says Gallant. This atlas could serve as a basis for understanding how brains and their maps change after an injury that affects language, like a stroke, or how they differ in language disorders like dyslexia or social language disorders like autism.

For now, Gallant says, this study helps to show the importance of not just brain anatomy but the physiology or function behind that anatomy. “In neuroscience we know a lot about the anatomy of the brain, down to single synapses, but what we really want to know about is the function.” The combination, he says, is the key to really understanding the brain.

With much more research, this idea could be used as a sort of decoding mechanism: a map of fMRI data could be used to “read” what words a person is reading, hearing, or thinking, which could help people with conditions that impair communication, like ALS, to communicate better.