We’ve seen some pretty talented robot bartenders, but we still have to go to them for a drink. What kind of a future is this? Can’t the robot come and pour us a beer by now?

Sure! But that’s not quite as easy as just having a robot walk (or roll) over and pour a beer into a glass. If you, say, move the glass at the last minute (not cool, dude, but okay), the robot could keep right on pouring. No, a great robot server needs to be able to look slightly into the future.

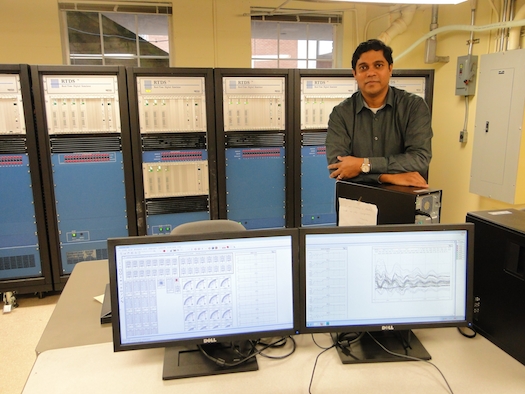

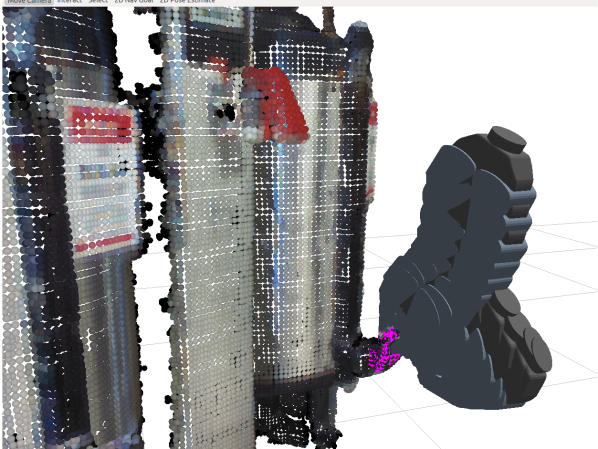

Using the PR2 robot, which seems to be a quick learner and has already done some beer-retrieving work in the past (sidenote: we want one), a team at Cornell’s Personal Robotics Lab has taught the machine to predict human interactions and then base its own actions on what people are most likely to do. Armed with a Microsoft Kinect, the Cornell ’bot identifies what’s in its frame, then draws on a video archive showing how humans usually interact with certain objects.

Say, for example, the robot is planning to pour you a beer. It sees you, a book, and a glass, and then it sees you reach out your hand. Based on a set of algorithms, the robot can pause to see whether you go for the book or the glass, and if you do grab the glass, wait until you put it back down before filling it up.
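To make the book-or-glass scenario concrete, here is a toy decision rule in Python. It is not the Cornell team's method (the article only says "a set of algorithms"). It is a minimal sketch assuming the robot already has some per-object score for where your hand is heading. The function names, the scores, and the 0.8 confidence threshold are all hypothetical.

```python
from __future__ import annotations


def predict_target(scores: dict[str, float]) -> dict[str, float]:
    """Normalize hypothetical per-object scores into probabilities."""
    total = sum(scores.values())
    if total <= 0:
        return {}  # no usable evidence about where the hand is going
    return {obj: s / total for obj, s in scores.items()}


def choose_action(target_probs: dict[str, float],
                  glass_in_place: bool,
                  threshold: float = 0.8) -> str:
    """Toy rule: only pour when the glass is down and nobody is about to grab it.

    threshold is a hypothetical confidence level, not a published value.
    """
    if not glass_in_place:
        return "wait"  # the glass was picked up; wait for it to come back
    if not target_probs:
        return "pour"  # no reach in progress, so go ahead
    target, p = max(target_probs.items(), key=lambda kv: kv[1])
    if p < threshold:
        return "wait"  # still unsure whether the hand is after the book or the glass
    if target == "glass":
        return "wait"  # the person is about to take the glass; don't pour on it
    return "pour"  # confident they want something else, e.g. the book


if __name__ == "__main__":
    # An ambiguous reach (equal scores) keeps the robot waiting.
    print(choose_action(predict_target({"book": 1.0, "glass": 1.0}), glass_in_place=True))
    # A reach clearly aimed at the book lets it pour.
    print(choose_action(predict_target({"book": 9.0, "glass": 1.0}), glass_in_place=True))
```

The point of the threshold is the "pause and wait" behavior: while the prediction is uncertain, holding off costs a second or two, whereas pouring at the wrong moment costs a spilled beer.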

Pouring beer is clearly its most important function, but the robot can do a few other things too. In the video here, you can see it predict when someone is heading to the fridge, then eagerly head them off and open the door for them.

So far, the researchers report, the robot has a pretty decent success rate: its predictions are correct 82 percent of the time when looking one second ahead, 71 percent at three seconds, and 57 percent at 10 seconds. Not too bad, though that’s a bummer for the unlucky ones whose beer got spilled because of a wrong prediction.