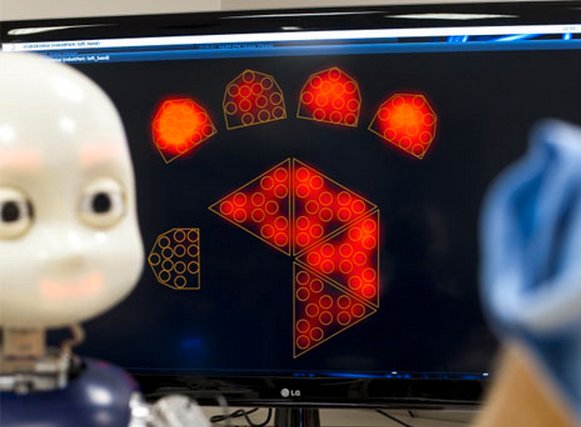

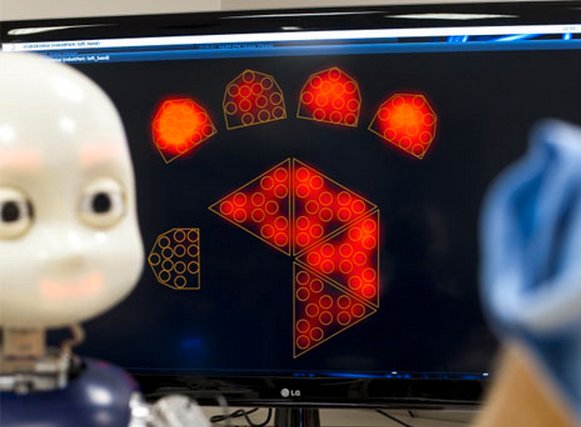

A robot with an artificial brain is learning languages, even heavily accented ones, by stringing together words and sentences. Peter Ford Dominey and his colleagues at the French National Institute of Health and Medical Research taught an iCub toddler-bot to learn speech patterns, and it can think before it speaks.

Our brains process spoken words in real time and anticipate what comes next, which lets us hold meaningful conversations (mostly) without awkward pauses to stop and think. This is possible because of connections between the frontal cortex and a brain region called the striatum. Dominey and colleagues built an artificial version: a neural network with a series of recurrent loops that can transmit information. They incorporated it into an iCub, an open-source robot designed to look like a 3-year-old child.

As you can see below, a researcher asks the iCub to point to a "guitar," depicted in blue, and then move a "violin," depicted in red. Like a weird E-Trade ad from the future, the iCub's babylike head speaks in an adult man's voice to confirm that it has understood the instructions and is doing the task correctly. Then it checks again after it's finished, just to be sure.

This could help researchers who study the brain by validating pathways thought to be important in processing language. More importantly, according to Dominey, it could help robots learn more efficiently. "At present, engineers are simply unable to program all of the knowledge required in a robot," he said in a statement. "We now know that the way in which robots acquire their knowledge of the world could be partially achieved through a learning process – in the same way as children." Creepy, creepy robot children.

[via ScienceDaily]