When social distancing started, video calling quickly stumbled into its role as a fundamental method of interpersonal communication. The tool is not the same as meeting in person, but simply seeing other faces made typical audio-only conference calls feel hopelessly antiquated. Now, however, after months of distance, video calling’s novelty has faded and the annoying quirks have become more apparent. A barking dog in the background isn’t as cute as it once was, and anyone who eats from a crinkly bag during a brainstorm without muting their mic should go into one of those mega-jails where the Avengers house supervillains.

Companies such as Microsoft and Google are now ramping up AI-powered efforts to cancel out annoying background noise during video calls. In fact, Google just started rolling out its noise-canceling feature to some G Suite corporate customers, and it will make its way to more users in the coming months.

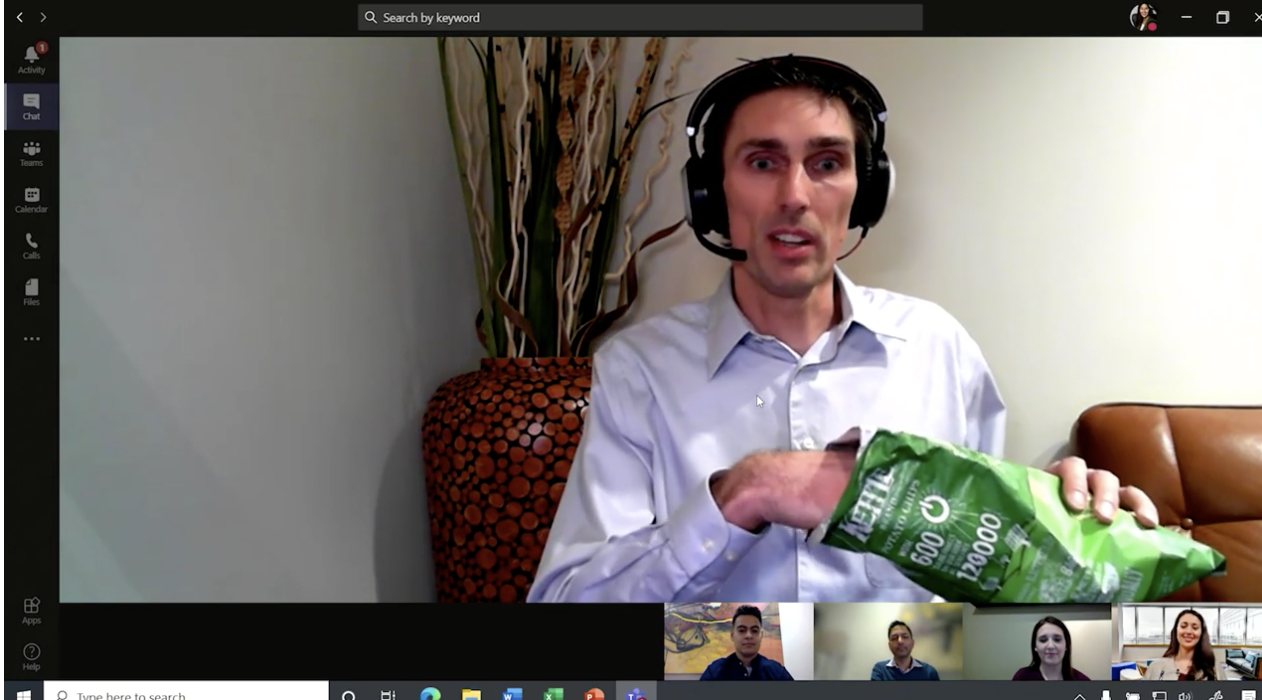

This week, Google gave VentureBeat a demo of the noise-canceling tech it’s starting to implement. The demonstration is impressive. The presenter, G Suite director of product management Serge Lachapelle, runs through a variety of sounds, including hand-clapping, bag crinkling, and even hitting a glass cup with a metal hex key. When he turns the noise cancellation on, his voice sounds slightly muffled at first, but it clears up after a few moments. More importantly, however, the distracting sounds almost completely disappear.

Lachapelle is using a Blue Yeti microphone, which is a fairly common piece of kit for podcasters and streamers, but it’s not the hardware that’s pulling off the magic. Instead, Google relies on a cloud-based AI algorithm that analyzes the audio and pries out the unpleasant distractions while leaving your words.

That’s different from what the phrase “noise-canceling” typically means for headphones. In that case, the headphones create sound waves that physically cancel out noise as it tries to get to your ear. In Google’s case, as with other companies trying the same thing, a bot analyzes the audio and strips the noise from the signal before transmitting it to the listener’s headphones or speakers.
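For the curious, the headphone version of the trick is simple enough to sketch in a few lines of Python. This is only a toy illustration, not how any real headphone or Google's system works: the sample rate, the pure sine-wave "hum" and "music," and the helper names `tone`, `anti_noise`, and `mix` are all assumptions made up for this example. It shows the basic idea of playing an inverted copy of the noise so the two waves cancel at the ear.

```python
import math

SAMPLE_RATE = 8000  # samples per second; an arbitrary value for this sketch


def tone(freq_hz, n_samples, amplitude=1.0):
    """Generate a pure sine wave as a list of samples."""
    return [
        amplitude * math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE)
        for i in range(n_samples)
    ]


def anti_noise(noise):
    """Invert the noise waveform (a 180-degree phase flip)."""
    return [-sample for sample in noise]


def mix(*signals):
    """Add signals sample by sample, as sound waves combine in the air."""
    return [sum(samples) for samples in zip(*signals)]


hum = tone(120, 800, amplitude=0.5)    # stand-in for a low background hum
music = tone(440, 800, amplitude=0.3)  # stand-in for what you want to hear

# Without cancellation, the ear receives the music plus the hum.
unfiltered = mix(music, hum)

# With cancellation, the headphones also play the inverted hum,
# so the hum and its mirror image sum to (nearly) nothing.
at_ear = mix(music, hum, anti_noise(hum))

leftover = max(abs(a - m) for a, m in zip(at_ear, music))
print(f"Largest leftover hum after cancellation: {leftover:.2e}")
```

Real headphones have to measure the noise with a microphone and generate the inverted wave with almost no delay, which is far harder than flipping a sign on a list of numbers. The cloud-based approach skips the physics entirely and edits the recorded signal instead.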

Google is no stranger to speech recognition. The Google Assistant has been listening to and parsing words for years now, and just last year, the company introduced its surprisingly accurate Live Transcription function, which reproduces conversations in plain text in real time. Google leveraged that technology with its new AI. With noise-cancellation, the computing takes place in the cloud rather than on the user’s device so it doesn’t tax the local processor even more than a resource-intensive video call would.

The feature will be on by default when it goes out to users, which means you may one day notice that background sounds are gone. You’ll be able to go into the settings and switch it off if you prefer the unfiltered audio. There may be some instances where you’d want to. For instance, singing will likely make it through the filter, while background music may not.

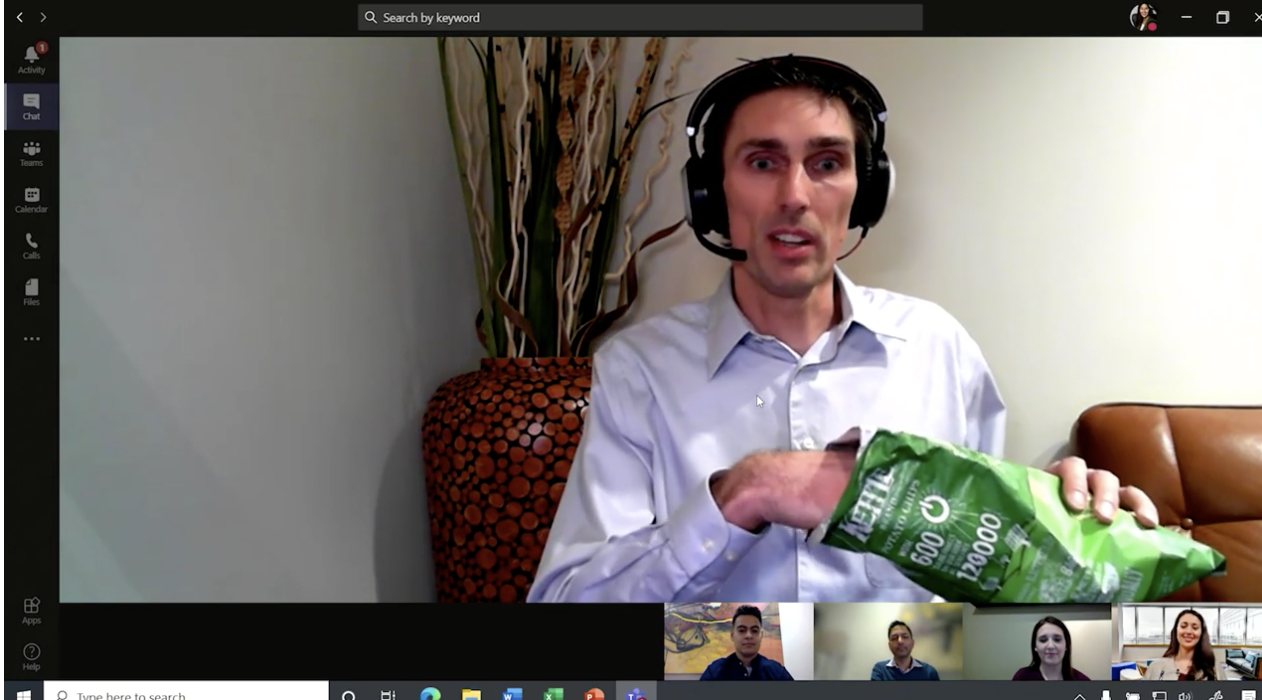

Google isn’t the only company working on trying to clean up video calling audio. Back in April, Microsoft demonstrated a similar technology intended for its Teams video chat functionality. It uses a similar concept: analyzing audio and filtering out sounds that it doesn’t recognize as talking.

Like Google’s feature, Microsoft’s noise suppression will roll out in the coming months.

As with most AI-powered technology, both companies expect that their systems will get better at identifying unwanted noise over time. Wider data sets and more time to learn what they should and shouldn’t filter out will ultimately make them more effective. That’s good, because your chip-snarfing co-workers probably don’t plan on switching to quieter snacks any time soon.