Last August, U.S. Navy operators on the ground lost all contact with a Fire Scout helicopter flying over Maryland. They had programmed the unmanned aerial vehicle to return to its launch point if ground communications failed, but instead the machine took off on a north-by-northwest route toward the nation’s capital. Over the next 30 minutes, military officials alerted the Federal Aviation Administration and North American Aerospace Defense Command and readied F-16 fighters to intercept the pilotless craft. Finally, with the Fire Scout just miles shy of the White House, the Navy regained control and commanded it to come home. “Renegade Unmanned Drone Wandered Skies Near Nation’s Capital,” warned one news headline in the following days. “UAV Resists Its Human Oppressors, Joyrides over Washington, D.C.,” declared another.

The Fire Scout was unarmed, and in any case hardly a machine with the degree of intelligence or autonomy necessary to wise up and rise up, as science fiction tells us the robots inevitably will do. But the world’s biggest military is rapidly remaking itself into a fighting force consisting largely of machines, and it is working hard to make those machines much smarter and much more independent. In March, noting that “unprecedented, perhaps unimagined, degrees of autonomy can be introduced into current and future military systems,” Ashton Carter, the U.S. undersecretary of defense for Acquisition, Technology and Logistics, called for the formation of a task force on autonomy to ensure that the service branches take “maximum practical advantage of advances in this area.”

In Iraq and Afghanistan, U.S. troops have been joined on the ground and in the air by some 20,000 robots and remotely operated vehicles. The CIA regularly slips drones into Pakistan to blast suspected Al Qaeda operatives and other targets. Congress has called for at least a third of all military ground vehicles to be unmanned by 2015, and the Air Force is already training more UAV operators every year than fighter and bomber pilots combined. According to “Technology Horizons,” a recent Air Force report detailing the branch’s science aims, military machines will attain “levels of autonomous functionality far greater than is possible today” and “reliably make wide-ranging autonomous decisions at cyber speeds.” One senior Air Force engineer told me, “You can envision unmanned systems doing just about any mission we do today.” Or as Colonel Christopher Carlile, the former director of the Army’s Unmanned Aircraft Systems Center of Excellence, has said, “The difference between science fiction and science is timing.”

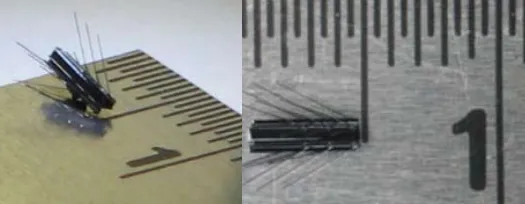

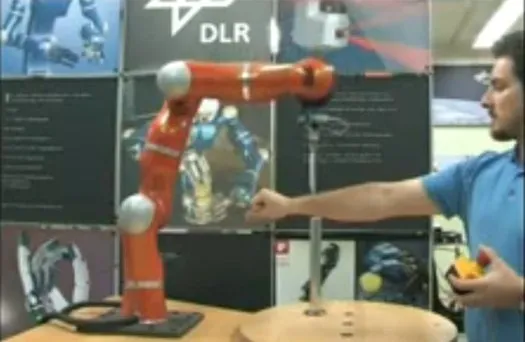


We are surprisingly far along in this radical reordering of the military’s ranks, yet neither the U.S. nor any other country has fashioned anything like a robot doctrine or even a clear policy on military machines. As quickly as countries build these systems, they want to deploy them, says Noel Sharkey, a professor of artificial intelligence and robotics at the University of Sheffield in England: “There’s been absolutely no international discussion. It’s all going forward without anyone talking to one another.” In his recent book Wired for War: The Robotics Revolution and Conflict in the 21st Century, Brookings Institution fellow P.W. Singer argues that robots and remotely operated weapons are transforming wars and the wider world in much the way gunpowder, mechanization and the atomic bomb did in previous generations. But Singer sees significant differences as well. “We’re experiencing Moore’s Law,” he told me, citing the rule of thumb that computer processing power doubles roughly every two years, “but we haven’t got past Murphy’s Law.” Robots will come to possess far greater intelligence, with more ability to reason and self-adapt, and they will also of course acquire ever greater destructive power. So what does it mean when whatever can go wrong with these military machines just might?

I asked that question of Werner Dahm, the chief scientist of the Air Force and the lead author on “Technology Horizons.” He dismissed as fanciful the kind of Hollywood-bred fears that informed news stories about the Navy Fire Scout incident. “The biggest danger is not the Terminator scenario everyone imagines, the machines taking over—that’s not how things fail,” Dahm said. His real fear was that we would build powerful military systems that would “take over the large key functions that are done exclusively by humans” and then discover too late that the machines simply aren’t up to the task. “We blink,” he said, “and 10 years later we find out the technology wasn’t far enough along.”

Dahm’s vision, however, suggests another “Terminator scenario,” one more plausible and not without menace. Over the course of dozens of interviews with military officials, robot designers and technology ethicists, I came to understand that we are at work on not one but two major projects, the first to give machines ever greater intelligence and autonomy, and the second to maintain control of those machines. Dahm was worried about the success of the former, but we should be at least as concerned about the failure of the latter. If we make smart machines without equally smart control systems, we face a scenario in which someday, by way of a thousand well-intentioned decisions, each one seemingly sound, the machines do in fact take over all the “key functions” that once were our domain. Then “we blink” and find that the world is one we no longer are able to comprehend or control.

Low-Hanging Fruit

Today soldiers and airmen can see that the machines are becoming their equals or betters, at least in some situations. Last summer, when I visited the Air Force Research Laboratory at Wright-Patterson Air Force Base near Dayton, Ohio, scientists there showed me a video demonstration of a system under development, called Sense and Avoid, that they expect to be operational by 2015. Using a suite of onboard sensors, unmanned aircraft equipped with this technology can detect when another aircraft is close by and quickly maneuver to avoid it. Sense and Avoid could be used in combat situations, and it has been tested in computer simulations with multiple aircraft coming at the UAV from all angles. Its most immediate benefit, however, might be to offer proof that UAVs can be trusted to fly safely in U.S. skies. The Federal Aviation Administration does not yet allow the same UAVs that move freely in war zones to come anywhere near commercial flights back home and only very rarely allows them to fly even in our unrestricted airspace. But Sense and Avoid algorithms follow the same predictable FAA right-of-way rules required of all planes. At one point in the video, which depicted a successful test of the system over Lake Ontario, a quote flashed on the screen from a pilot who had operated one of the oncoming aircraft: “Now that was as a pilot would have done it.”
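
To make the idea of “predictable” concrete, here is a minimal sketch of how right-of-way logic of this kind might look in code. It is not the Sense and Avoid software. It loosely encodes the FAA’s rules in 14 CFR 91.113 (aircraft approaching head-on each turn right; an aircraft being overtaken has the right-of-way; when aircraft converge, the one on the other’s right has the right-of-way), and the function names and angle tolerances are hypothetical.

```python
# Hypothetical sketch of right-of-way logic modeled on 14 CFR 91.113.
# Angles are in degrees; the tolerances below are illustrative assumptions.

HEAD_ON_TOL = 15.0         # how close to "nose to nose" counts as head-on
SAME_DIRECTION_TOL = 45.0  # how close headings must be to count as overtaking


def within(angle: float, center: float, tol: float) -> bool:
    """True if `angle` is within `tol` degrees of `center`, wrapping at 360."""
    return abs((angle - center + 180.0) % 360.0 - 180.0) <= tol


def right_of_way_action(bearing: float, rel_heading: float,
                        own_speed: float, intruder_speed: float) -> str:
    """Decide what the unmanned aircraft should do about one intruder.

    bearing: intruder's bearing clockwise from our nose (0 = dead ahead, 90 = right)
    rel_heading: intruder's heading minus our heading
    """
    # Head-on: intruder ahead and flying toward us; each aircraft turns right.
    if within(bearing, 0.0, HEAD_ON_TOL) and within(rel_heading, 180.0, HEAD_ON_TOL):
        return "alter course right"
    # Roughly the same direction of flight: check for overtaking.
    if within(rel_heading, 0.0, SAME_DIRECTION_TOL):
        if within(bearing, 0.0, 90.0) and own_speed > intruder_speed:
            return "alter course right"  # we are overtaking; pass well clear to the right
        if within(bearing, 180.0, 90.0) and intruder_speed > own_speed:
            return "stand on"            # we are being overtaken and have right-of-way
    # Converging: the aircraft to the other's right has the right-of-way.
    if 0.0 < bearing % 360.0 < 180.0:
        return "give way"
    return "stand on"


print(right_of_way_action(60, 270, 100, 100))  # intruder converging from our right
```

Because every encounter maps to one of a handful of fixed responses, a human pilot in the other cockpit can anticipate what the drone will do, which is the kind of predictability regulators want to see.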

Machines already possess some obvious advantages over us mere mortals. UAVs can accelerate beyond the rate at which pilots normally black out, and they can remain airborne for days, if not weeks. Some military robots can also quickly aim and fire high-energy lasers, and (in controlled situations) they can hit targets far more consistently than people do. The Army currently uses a squat, R2-D2–like robot called Counter Rocket, Artillery and Mortar, or C-RAM, that employs radar to detect incoming rounds over the Green Zone or Bagram Airfield and then shoot them down with a remarkable success rate of 70 percent. The Air Force scientists also spoke of an “autopilot on steroids” that could maximize fuel efficiency by drawing on data from weather satellites to quickly modify a plane’s course. And a computer program that automatically steers aircraft away from the ground when pilots become disoriented is going live on F-16s later this year.

For the moment, the increase in machine capability is being met with an increase in human labor. The Air Force told Singer that an average of 68 people work with every Predator drone, most of them to analyze the massive amount of data that each flight generates. And as the Pentagon deploys ever more advanced imaging systems, from the nine-sensor Gorgon Stare to the planned 368-sensor ARGUS, the demand for data miners will continue to grow. Because people are the greatest financial cost in maintaining a military, though, the Pentagon is beginning to explore the use of “smart” sensors. Drawing on motion-sensing algorithms, these devices could decide for themselves what data is important, transmitting only the few minutes when a target appears, not the 19 hours of empty desert.
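
One simple way a “smart” sensor could decide what is worth sending is frame differencing: compare each video frame with the one before it and transmit only when enough of the image changes. The sketch below assumes tiny grayscale frames and an arbitrary threshold; the function names are hypothetical, and the motion-sensing algorithms the Pentagon is exploring would likely be far more sophisticated.

```python
from typing import List, Sequence

Frame = Sequence[Sequence[int]]  # grayscale pixel intensities, 0-255


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average per-pixel change between two equally sized frames."""
    diffs = [abs(pa - pb) for row_a, row_b in zip(a, b) for pa, pb in zip(row_a, row_b)]
    return sum(diffs) / len(diffs) if diffs else 0.0


def frames_to_transmit(frames: List[Frame], threshold: float = 10.0) -> List[int]:
    """Indices of frames that changed enough from the previous frame to be worth sending.

    Frame 0 is treated as the reference image and is never flagged.
    """
    return [i for i in range(1, len(frames))
            if mean_abs_diff(frames[i - 1], frames[i]) > threshold]


# Hypothetical 4x4 "empty desert" frame, and the same scene with a bright vehicle in it.
desert = [[50] * 4 for _ in range(4)]
truck = [row[:] for row in desert]
for r, c in [(1, 1), (1, 2), (2, 1), (2, 2)]:
    truck[r][c] = 250

print(frames_to_transmit([desert, desert, truck, desert]))  # [2, 3]
```

Long stretches of unchanging desert produce nothing to send; only the frames where the vehicle appears and disappears are flagged.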

Ronald Arkin, the director of the Mobile Robot Laboratory at the Georgia Institute of Technology, hypothesizes that, within certain bounded contexts, armed robots could even execute military operations more ethically than humans. A machine equipped with Arkin’s prototype “ethical governor” would be unable to execute lethal actions that did not adhere to the rules of engagement, minimize collateral damage, or take place within legitimate “kill zones.” Moreover, machines don’t seek vengeance or experience the overwhelming desire to protect themselves, and they are never swayed by feelings of fear or hysteria. I spoke to Arkin in September, just a few days after news broke that the Pentagon had filed charges against five U.S. soldiers for murdering Afghan civilians and mutilating their corpses. “Robots are already stronger, faster and smarter,” he said. “Why wouldn’t they be more humane? In warfare where humans commit atrocities, this is relatively low-hanging fruit.”
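
Arkin’s governor is described here only at a high level, so what follows is a hypothetical sketch of the basic idea rather than his design: a proposed lethal action is checked against a list of constraints, and if any one of them fails, the action is suppressed. The field names and the zero-tolerance harm threshold are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class ProposedEngagement:
    lawful_target: bool             # target satisfies the rules of engagement
    inside_kill_zone: bool          # target lies within an authorized engagement area
    expected_civilian_harm: float   # estimated collateral damage, arbitrary units


MAX_CIVILIAN_HARM = 0.0  # hypothetical threshold; a real system would weigh far more


def governor_permits(e: ProposedEngagement) -> Tuple[bool, List[str]]:
    """Permit lethal action only if every constraint holds; otherwise list the violations."""
    violations = []
    if not e.lawful_target:
        violations.append("rules of engagement not satisfied")
    if not e.inside_kill_zone:
        violations.append("target outside authorized kill zone")
    if e.expected_civilian_harm > MAX_CIVILIAN_HARM:
        violations.append("expected collateral damage too high")
    return (len(violations) == 0, violations)


print(governor_permits(ProposedEngagement(True, False, 0.0)))
# (False, ['target outside authorized kill zone'])
```

The important design choice is the default: the governor forbids unless every check passes, so a single failed check blocks the strike rather than being outweighed by the others.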

A Tricky Thing

In a secure area within the Air Force Research Lab, I watched computer simulations of an automated aerial refueling system that allows UAVs to sidle up to a flying Air Force tanker, to move into the appropriate fueling position, and to hold that position, even amid turbulence, for the nearly 30 minutes it takes to fill up. The Air Force has been fueling piloted aircraft in midair for some 60 years, initially using the delicate procedure on fighter jets in the Korean War and on nuclear-armed bombers that stayed in the air for days at a time. Aerial refueling reduces the number of planes and bases needed by extending the range of missions and by allowing aircraft to take off with less fuel and greater payload on shorter runways. Because the process requires the two planes to fly very close to each other, it has always been left to airmen in each craft to coordinate these interactions.

As the Air Force makes its transition to an unmanned fleet, though, it is trying to ensure that the procedure can be accomplished free of human control. The automated aerial refueling system, developed jointly with Northrop Grumman and others, has undergone “dry” flight tests in which a Learjet stood in for a UAV. But most of the testing has been conducted using computer simulations, running through as many scenarios as possible, identifying potential problems, and adjusting inputs accordingly. The transfer of control from human to robot has been gradual. “The more you can demonstrate trust, the more you can convince everyone that the UAVs are safe,” Bob Smith, one of the senior engineers in the lab’s Control Sciences Division, says. “But trust is a greater entity than just reliability or safety. It has to be built up over time. Trust is a tricky thing.”
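
The simulation-heavy approach can be illustrated with a toy Monte Carlo test harness. The model below is a hypothetical one-dimensional stand-in for holding refueling position: at each step a random gust pushes the aircraft off station and a simple proportional controller pulls it back. Running many seeded scenarios flags any whose worst deviation exceeds a tolerance, so that failures can be replayed and the inputs adjusted. The 1,800 steps (one per second over roughly 30 minutes), the gain and the gust size are all assumptions.

```python
import random
from typing import List


def worst_deviation(seed: int, steps: int = 1800, gain: float = 0.5, gust: float = 0.3) -> float:
    """Toy 1-D station-keeping run: largest distance from the hold position.

    Each step a random gust pushes the receiver aircraft, then a proportional
    controller removes a fraction `gain` of the resulting error.
    """
    rng = random.Random(seed)  # seeded so any failing scenario can be replayed exactly
    x = 0.0                    # deviation from the desired position, arbitrary units
    worst = 0.0
    for _ in range(steps):
        x += rng.uniform(-gust, gust)  # disturbance
        x -= gain * x                  # correction
        worst = max(worst, abs(x))
    return worst


def failing_seeds(n_runs: int, tolerance: float) -> List[int]:
    """Run many randomized scenarios; return the seeds whose deviation exceeded tolerance."""
    return [s for s in range(n_runs) if worst_deviation(s) > tolerance]


print(failing_seeds(100, 0.3))  # []
```

With these parameters the controller halves the combined error every step, so the deviation stays below the gust size of 0.3 and no scenario fails. That clean result shows only that none of the scenarios tried went wrong, not that none ever could, which is the gap between testing and proof that makes trust so hard to establish.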

The lab also contained a simulator in which airmen trained on the refueling system. I was allowed to slide face-down onto a boom-operator’s couch pulled from a retired Boeing KC-135 Air Force tanker to try my hand at the maneuver. My head rested mostly inside a dome of video screens that were made to look like a tanker’s windows, with rolling hills and snow-capped peaks on the virtual ground far below. A UAV looking like a stingray glided into view what seemed to be 50 feet beneath me, wobbling a bit from what must have been wind gusts. GPS enables unmanned planes to follow a tanker’s exact circling pattern at the identical speed. It was my job to position a rigid fuel tube directly above the UAV’s tank by manipulating a controller in my right hand and, with a controller in my left, telescope the tube downward until it mated with the unmanned plane. As videogames go, it was low stakes—no civilization created or lost, no kill or be killed. But inside the simulator, it did seem fairly real.

I moved the boom into place, lowered it, missed long. I retracted, started over, sent it wide. After I failed on several more attempts, the scientists looking at my prone backside began mentioning the next presentation, how we shouldn’t keep the busy engineers waiting. Clearly I was the weakest link in the system. A machine would have done it much better.

Oops Moments

Placing such faith in our military machines may be tempting, but it is not always wise. In 1988 a Navy cruiser patrolling the Persian Gulf shot down an Iranian passenger plane, killing all 290 aboard, when its automated radar system mistook the aircraft for a much smaller fighter jet, and the ship’s crew trusted the computer more than other conflicting data. Several scientists at Wright-Patterson mentioned learning from such classic examples of over-reliance on machines, which even in civilian aviation has led to fatal accidents. As often as not, such military machine mishaps are the result of what a vice president at a robotics company described to P.W. Singer as “oops moments,” the kinds of not-uncommon mistakes that occur with the technology at such an early stage of development. In 2007, when the first batch of the armed tank-like robots called SWORDS was deployed to Iraq—and then quickly pulled from action—a story spread about one aiming its guns on friendly forces.

The robot’s manufacturer later confirmed that there were several malfunctions but insisted that no personnel were ever endangered. A C-RAM in Iraq did target a U.S. helicopter, identifying it incorrectly as incoming rocket fire; why it held its own fire remains unclear. A soldier back from duty in 2006 told Singer that a ground robot he operated in Iraq would sometimes “drive off the road, come back at you, spin around, stuff like that.” That same year, Singer says, a SWORDS inexplicably began whirling around during a demonstration for executives; a scene out of the movie Robocop was avoided because the robot’s machine gun wasn’t loaded. But at a crowded South African army training exercise in 2007, an automated anti-aircraft cannon seemed to jam and then began to swivel wildly, firing all of its 500 auto-loading rounds. Nine soldiers were killed and 14 seriously wounded.

It turns out that it’s easier to design intelligent robots with greater independence than it is to prove that they will always operate safely. The “Technology Horizons” report emphasizes “the relative ease with which autonomous systems can be developed, in contrast to the burden of developing V&V [verification and validation] measures,” and the document affirms that “developing methods for establishing ‘certifiable trust in autonomous systems’ is the single greatest technical barrier that must be overcome to obtain the capability advantages that are achievable by increasing use of autonomous systems.” Ground and flight tests are one method of showing that machines work correctly, but they are expensive and extremely limited in the variables they can check. Software simulations can run through a vast number of scenarios cheaply, but there is no way to know for sure how the literal-minded machine will react when on missions in the messy real world. Daniel Thompson, the technical adviser to the Control Sciences Division at the Air Force research lab, told me that as machine autonomy evolves from autopilot to adaptive flight control and all the way to advanced learning systems, certifying that machines are doing what they’re supposed to becomes much more difficult. “We still need to develop the tools that would allow us to handle this exponential growth,” he says. “What we’re talking about here are things that are very complex.”

This technical problem has political ramifications as well. Other countries, hoping to reap the tactical advantages of superior robot intelligence and power, might be willing to field untested autonomous systems that could go dangerously out of control. Forty-three other countries, including China and Iran, currently have or are developing their own military robots. And the Association for Unmanned Vehicle Systems International boasts 6,000 members. Air Force scientists say they can stay ahead of countries that cut corners simply by stepping up their own verification and validation efforts. But such efforts will do little to prevent the proliferation of unreliable robots elsewhere, and may simply inspire some countries, in their attempts to win the robot arms race, to cut even more corners. “What’s easy to do is create a dumb autonomous robot that kills everything,” says Patrick Lin, the head of the Ethics and Emerging Sciences Group at California Polytechnic State University at San Luis Obispo. It’s not hard to imagine an ambitious, militant nation manufacturing a dumb robot that kills the wrong thing and triggers a much larger conflict.

Wendell Wallach, the chairman of the Technology and Ethics study group at Yale University, says machines often are credited with powers of discrimination, resilience and adaptability that they don’t actually possess, frequently by contractors eagerly hyping their products. “A real danger with autonomous systems,” he says, “is that they end up being used in situations where they simply don’t belong.”

The precursors to such systems—the remote-control machines that perform many operations the military calls too “dull, dirty, and dangerous” for humans—are in wide use, and not without consequence. CIA drone crews unable to adequately discriminate between combatants and noncombatants have so far killed as many as 1,000 Pakistani civilians. And truly autonomous systems are taking on increasingly sensitive tasks. “I don’t know that we can ignore the Terminator risk,” Lin says, and as an example suggests not some killer drone but rather the computers that even now control many of our business operations. Last spring, for instance, one trading firm’s “sell algorithm” managed to trigger a sudden 1,000-point “flash crash” in the stock market: “It’s not that big of a stretch to think that much of our lives, from business systems to military systems, are going to be run by computers that will process information faster than we can and are going to do things like crash the stock market or potentially launch wars. The Terminator scenario is not entirely ridiculous in the long term.”

Out of the Loop

As we quicken the pace and complexity of warfare (and stock trading), it will become difficult to avoid surrendering control to machines. A soldier technically can override the firing of a C-RAM, but he must reach that decision and carry out the action in as few as 0.5 seconds. Critical seconds are also lost as a machine relays information about an identified target to a remote human operator and awaits confirmation to fire. With autonomous systems executing superhuman tasks at superhuman speeds, humans themselves are increasingly unable to make decisions quickly enough. They are becoming supervisors and “wingmen” to the machines—no longer deciders who are in the loop but observers who are, as the military puts it, “on the loop.”
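
The difference between being in the loop and on the loop comes down to what happens when the human does not answer in time. A minimal sketch, with hypothetical function names and an assumed operator reaction time of 0.8 seconds set against the 0.5-second window described above:

```python
from typing import Optional


def in_the_loop_fires(approval_at: Optional[float], deadline: float) -> bool:
    """Human in the loop: the machine fires only if a person approves before the deadline."""
    return approval_at is not None and approval_at <= deadline


def on_the_loop_fires(veto_at: Optional[float], deadline: float) -> bool:
    """Human on the loop: the machine fires unless a person vetoes before the deadline."""
    vetoed_in_time = veto_at is not None and veto_at <= deadline
    return not vetoed_in_time


# Times in seconds after the machine identifies a target (assumed values).
print(in_the_loop_fires(approval_at=0.8, deadline=0.5))  # False: approval came too late
print(on_the_loop_fires(veto_at=0.8, deadline=0.5))      # True: veto came too late
print(on_the_loop_fires(veto_at=0.3, deadline=0.5))      # False: veto arrived in time
```

In the first arrangement, human silence means the weapon holds; in the second, silence means it fires. Once decision windows shrink below human reaction times, only the second arrangement keeps up, which is the shift from deciders to observers.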

The officials at the Air Force lab were quick to point out that autonomy should not be thought of as an all-or-nothing proposition. Ideally there are “degrees of flexible autonomy” that can constantly scale up and down, with people playing more supervisory roles in some situations and machines operating independently in others. “Our focus is using automation to help the human make the decision, not to make decisions for the human,” Major General Ellen Pawlikowski, the commander of the lab, told me. “If it gets to the point where a bad decision can be made because a human is out of the loop, that’s when automation has to stop.”

But to maintain even this level of human involvement requires extraordinary levels of coordination and communication between people and machines. Isaac Asimov addressed this very issue with his oft-cited Three Laws of Robotics, a fictional device that nonetheless has become the default starting point in discussions about robot control: A robot may not harm a human or, through inaction, allow a human to come to harm; a robot must obey any orders given to it by a human, unless the orders conflict with the first law; and a robot must protect itself, so long as the actions don’t conflict with the previous two laws.

David Woods, a professor at Ohio State University who specializes in human-robot coordination and who works closely with military researchers on automated systems, says that a simple rules-based approach will never be enough to anticipate the myriad physical and ethical challenges that robots will confront on the battlefield. There needs to be instead a system whereby, when a decision becomes too complex, control is quickly sent back either to a human or to another robot on a different loop. “Robots are resources for responsible people. They extend human reach,” he says. “When things break, disturbances cascade, humans need to be able to coordinate and interact with multiple loops.” According to Woods, the laws of robotics could be distilled into a single tenet: “the smooth transfer of control.” We may relinquish control in specific instances, but we must at all times maintain systems that allow us to reclaim it. The more we let go, the more difficult that becomes.

At Wright-Patterson, I observed a simulation in which a single person simultaneously controlled four unmanned aircraft using a unique interface called the Vigilant Spirit Control Station. On two large monitors, each of the four planes was depicted in a different color, its important flight data and tactical information pertaining to mission, sensor footprint and target location all displayed in the corresponding color along the edge of one screen. Mark Draper, the lab’s technical adviser for supervisory-control interfaces, explained that a single operator could handle a dozen UAVs if the planes were merely watching fixed targets on the ground, with the sensor video being relayed to other people who needed it. The real test is whether humans and machines can detect some sort of disruption or anomaly and react to it by swiftly reassigning control. “What if one of the 12 UAVs suddenly finds a high-value target who gets in a truck and drives away?” Draper proposed. “That’s a very dynamic event, and this one person can’t manage 12 planes while tracking him. So boom, boom, that task immediately transfers over to a dedicated unit at some other remote site. Then when the job is done, the plane transfers back over to the initial resource manager still tracking the 11 planes burning holes in the sky.”
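
Woods’s principle of “the smooth transfer of control” and Draper’s scenario can be sketched as a control registry in which every UAV has exactly one controller at a time and only the current controller can hand it off. This is a hypothetical illustration: the class, the operator names and the UAV identifiers are invented, and it makes no claim about how Vigilant Spirit actually works.

```python
from typing import Dict, List


class ControlRegistry:
    """Tracks which operator currently controls each UAV; one controller per UAV."""

    def __init__(self) -> None:
        self._controller: Dict[str, str] = {}

    def assign(self, uav: str, operator: str) -> None:
        """Initial assignment of a UAV to an operator."""
        self._controller[uav] = operator

    def transfer(self, uav: str, from_op: str, to_op: str) -> None:
        """Hand a UAV to another operator; only its current controller may do so."""
        current = self._controller.get(uav)
        if current != from_op:
            raise ValueError(f"{uav} is controlled by {current!r}, not {from_op!r}")
        self._controller[uav] = to_op

    def fleet(self, operator: str) -> List[str]:
        """UAVs currently under this operator's control, in sorted order."""
        return sorted(u for u, op in self._controller.items() if op == operator)


registry = ControlRegistry()
for n in range(1, 13):
    registry.assign(f"UAV-{n:02d}", "resource manager")

# A high-value target drives away: UAV-07 goes to a dedicated tracking cell.
registry.transfer("UAV-07", "resource manager", "tracking cell")
print(len(registry.fleet("resource manager")))  # 11
# Job done: control comes back to the resource manager.
registry.transfer("UAV-07", "tracking cell", "resource manager")
print(len(registry.fleet("resource manager")))  # 12
```

Refusing a handoff from anyone but the current controller is one simple way to ensure that no aircraft is claimed by two people at once or handed off by someone who no longer holds it.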

The Air Force is also looking into how humans can become more machinelike, through the use of drugs and various devices, in order to interact more smoothly with machines. The job of a UAV manager often entails eight hours of utter tedium broken up at some unknown time by a couple of minutes of pandemonium. In flight tests, UAV operators tend to “tunnelize,” fixating on one UAV to the exclusion of others, and a NATO study showed that performance levels dropped by half when a person went from monitoring one UAV to just two. Military research into pharmaceuticals that act as calmatives or enhance alertness and acuity is well known. But in the research lab’s Human Effectiveness Directorate, I saw a prototype of a kind of crown rigged with electrode fingers that rested on the scalp and picked up electric signals generated by the brain. Plans are for operators overseeing several UAVs to wear a future version of one of these contraptions and to undergo continuous heart-rate and eye-movement monitoring. Together, these devices would determine when a person is fatigued, angry, excited or overwhelmed. If a UAV operator’s attention waned, he could be cued visually, or magnetic stimulation could be applied to his frontal lobe. And when a person displayed the telltale signs of panic or stress, a human (or machine) supervisor could simply shift responsibilities away from that person.

Just how difficult it is to keep humans on the loop has surprised even the researchers at the Air Force lab. At the end of the Vigilant Spirit presentation, Bob Smith, one of a dozen engineers in the room, said, “We thought the hard part would be making a vehicle do something on its own. The hard part is making it do that thing well with a human involved.”

The Strategy

In September, a group of 40 scientists, government officials, human-rights lawyers and military personnel gathered in Berlin for the first conference of the International Committee for Robot Arms Control. Noel Sharkey co-founded the organization in 2009 in the hope of stimulating debate about the ways that military robots have already altered the nature of warfare and subverted many of the existing rules of engagement regarding lawful targets and legal combatants, legitimate strikes and assassinations, spying and aggressive military actions. David Woods said that it is very likely we will soon hear about counter-drone battles, in which drones surveil, follow, feint, jam, and steal information from one another, everything short of outright aerial dogfights.

With humans taking on less of the risk of combat, Sharkey and many others fear that the barriers to armed conflict will be lowered, as engagement seems (at least from the perspective of those with the robots) to be less costly. The U.S. is currently involved in an undefined military action in Pakistan that’s being carried out by a civilian intelligence agency. The country has launched 196 unmanned airstrikes in northwest Pakistan since 2004, with the rate of these attacks increasing dramatically during the Obama administration. “If it weren’t for drones,” Patrick Lin says, “it’s likely we wouldn’t be there at all. These robots are enabling us to do things we wouldn’t otherwise do.”

A majority of the people at the conference signed a statement calling for the regulation of unmanned systems and a ban on the future development and deployment of armed autonomous robot weapons. But Sharkey says attendees argued even over how to define basic terms, and the experience further demonstrated to him just how difficult it would be to bring state actors into the discussion. Governments that build this technology do so to advance their military aims, but soon the aims become shaped to suit the technology, and the machines themselves prove indispensable. The newness of the committee and its size (just 10 people are full-fledged members) reveal how astonishingly rare such dialogue on this topic remains.

Wendell Wallach emphasizes the “tremendous confusion out there about how autonomous robots will get. We have everything from ‘We’re only two decades away from human-level AI’ to people who think we’re 100 years away or may never get there.” Even at the Air Force lab, where I was shown the cutting-edge work in the field, I left without a clear sense of what we might expect in the years ahead. Siva Banda, the head of control theory there, told me the Air Force understood well the standards and specs required to build a manned aircraft. “But our knowledge when it comes to UAVs—we’re like infants. We’re babies.” Indeed, it’s not clear that anyone is taking the lead on the matter of military machines. After P.W. Singer recently briefed Pentagon officials on his Wired for War, a senior defense-department strategy expert said he found the talk fascinating and had a question: “Who’s developing and wrestling with the strategy for all of this?” Singer, who has now given many such talks, explained to the official: “Everyone else thinks it’s you.”