The human hand is amazingly complex, so much so that most modern robots and artificial intelligence systems have a difficult time understanding how it truly works. Although machines are now pretty decent at grasping and replacing objects, actual manipulation of their targets (e.g., assembly, reorienting, and packaging) remains largely elusive. Recently, however, researchers created an impressively dexterous robot after realizing it needed fewer, not more, sensory inputs.

A team at Columbia Engineering has just unveiled a five-digit robotic “hand” that relies solely on its advanced sense of touch, alongside motor learning algorithms, to handle difficult objects—no visual data required. Because of this, the new proof-of-concept is completely immune to common optical issues like dim lighting, occlusion, and even complete darkness.

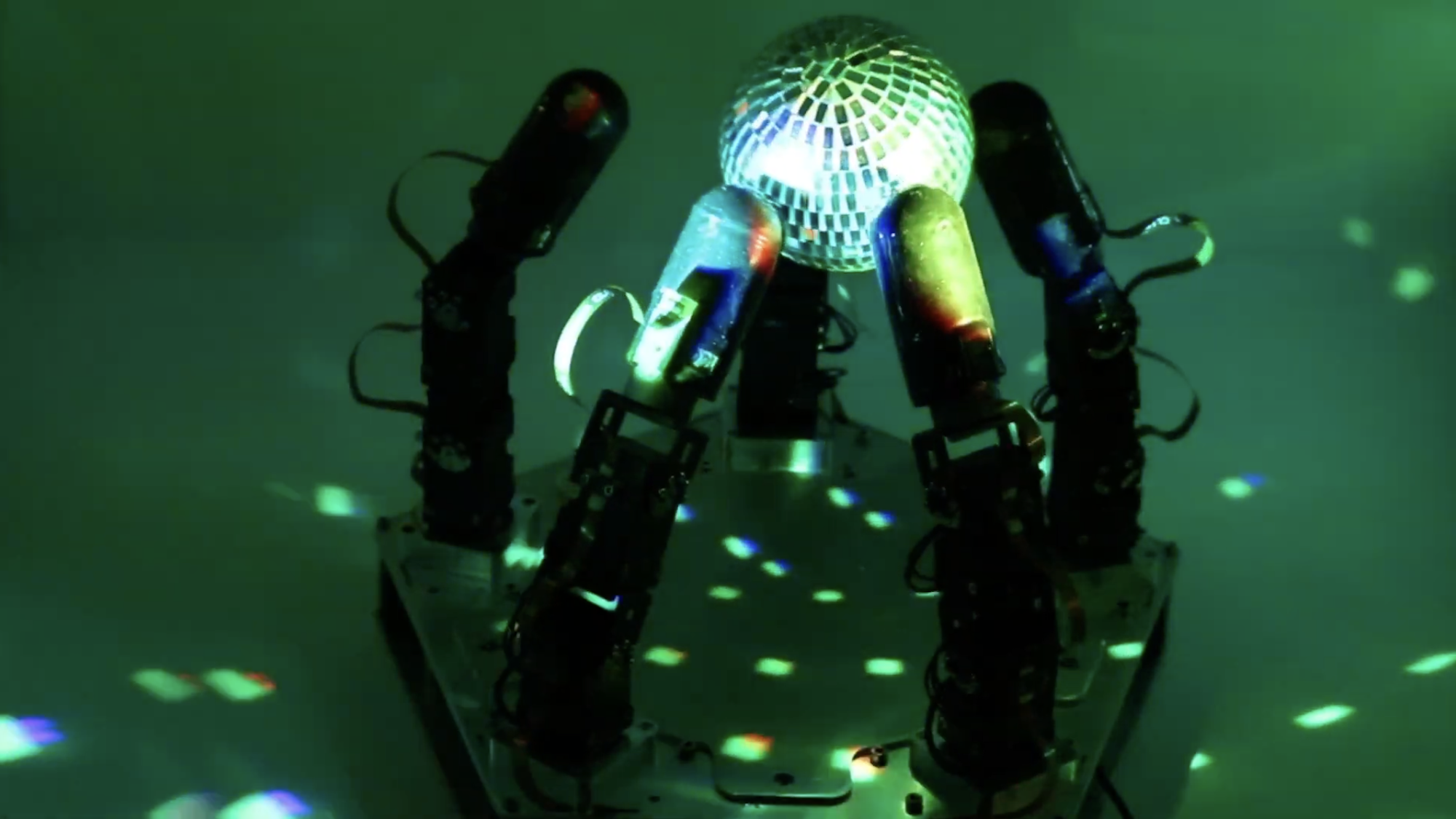

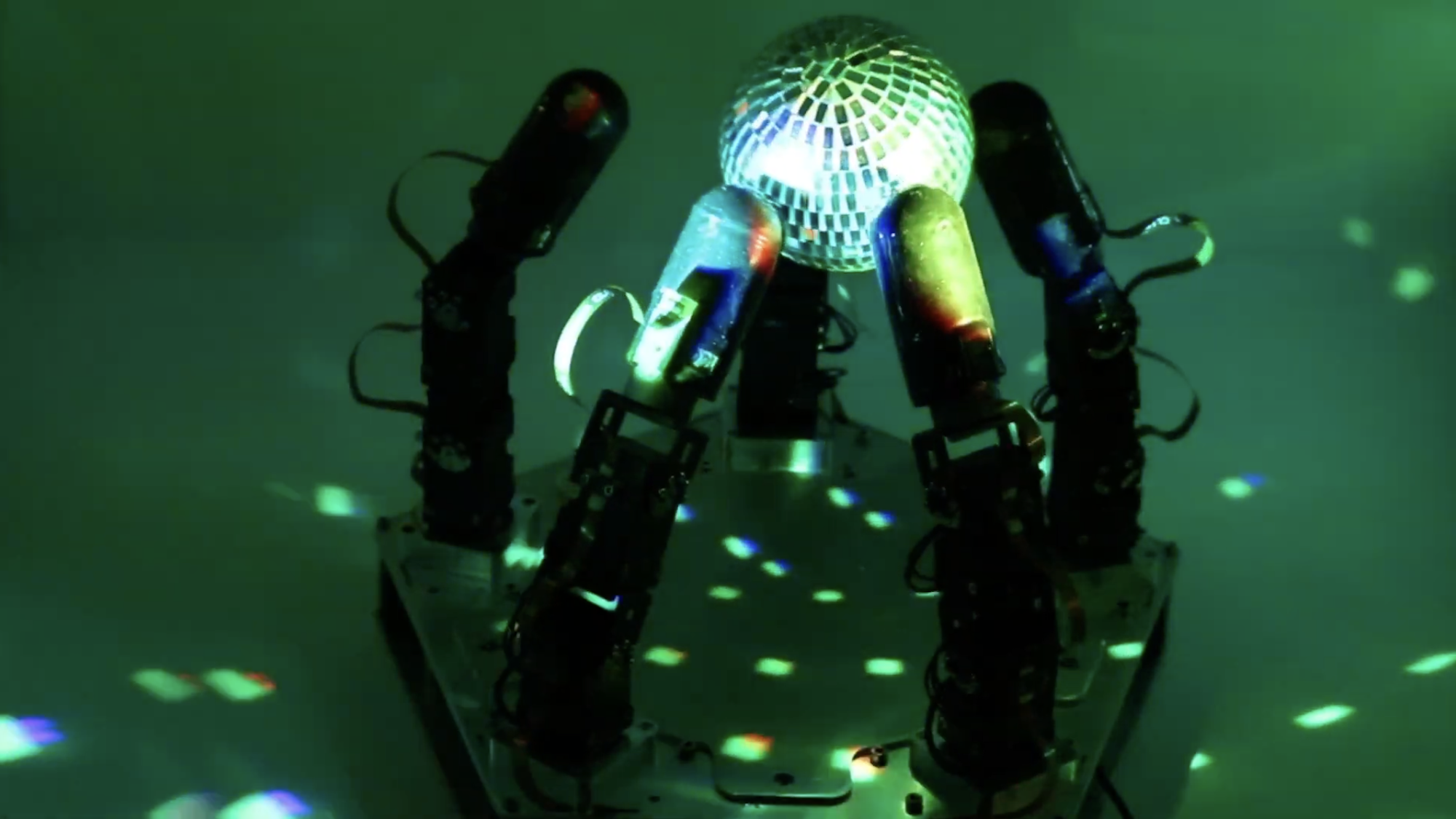

Each of the new robot’s digits is equipped with highly sensitive touch sensors, and the hand as a whole has 15 independently actuating joints. Irregularly shaped objects, such as a miniature disco ball, were then placed into the hand for the robot to rotate and maneuver without dropping them. Alongside “submillimeter” tactile data, the robot relied on what’s known as “proprioception.” Often referred to as the “sixth sense,” proprioception is the awareness of one’s own body position, force, and movement. These data points were then fed into a deep reinforcement learning program, which was able to simulate roughly one year of practice time in only a few hours via “modern physics simulators and highly parallel processors,” according to a statement from Columbia Engineering.
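
To see how a year of practice can fit into a few hours, it helps to run the arithmetic on parallel simulation. The sketch below is only a back-of-the-envelope illustration: the three-hour training window, the real-time simulation speed, and the function names are all assumptions made for this example, not figures or code from the Columbia team.

```python
import math

HOURS_PER_YEAR = 365 * 24  # 8,760 hours in a non-leap year


def simulated_hours(wall_clock_hours, num_parallel_envs, realtime_factor=1.0):
    """Total simulated practice gathered across all parallel environments.

    realtime_factor is how fast one simulated hand runs relative to
    real time (1.0 means one simulated hour per wall-clock hour).
    """
    return wall_clock_hours * num_parallel_envs * realtime_factor


def envs_needed(target_sim_hours, wall_clock_hours, realtime_factor=1.0):
    """Smallest number of parallel environments that reaches the target."""
    return math.ceil(target_sim_hours / (wall_clock_hours * realtime_factor))


# Hypothetical scenario: one year of practice in three wall-clock hours,
# with each simulated hand running at real-time speed.
print(envs_needed(HOURS_PER_YEAR, wall_clock_hours=3))   # 2920
print(simulated_hours(3, num_parallel_envs=2920))        # 8760.0
```

Under these assumed numbers, a few thousand simulated hands practicing side by side would be enough. A simulator that runs faster than real time would need proportionally fewer copies.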

In the announcement, Matei Ciocarlie, an associate professor in the departments of mechanical engineering and computer science, explained that “the directional goal for the field remains assistive robotics in the home, the ultimate proving ground for real dexterity.” While Ciocarlie’s team showed how this was possible without any visual data, they plan to eventually incorporate that information into their systems. “Once we also add visual feedback into the mix along with touch, we hope to be able to achieve even more dexterity, and one day start approaching the replication of the human hand,” Ciocarlie added.

Ultimately, the team hopes to combine two kinds of intelligence: the abstract, semantic kind and the embodied kind. According to Columbia Engineering researchers, their new robotic hand represents the latter capability, while recent advances in large language models such as OpenAI’s GPT-4 and Google Bard could one day supply the former.