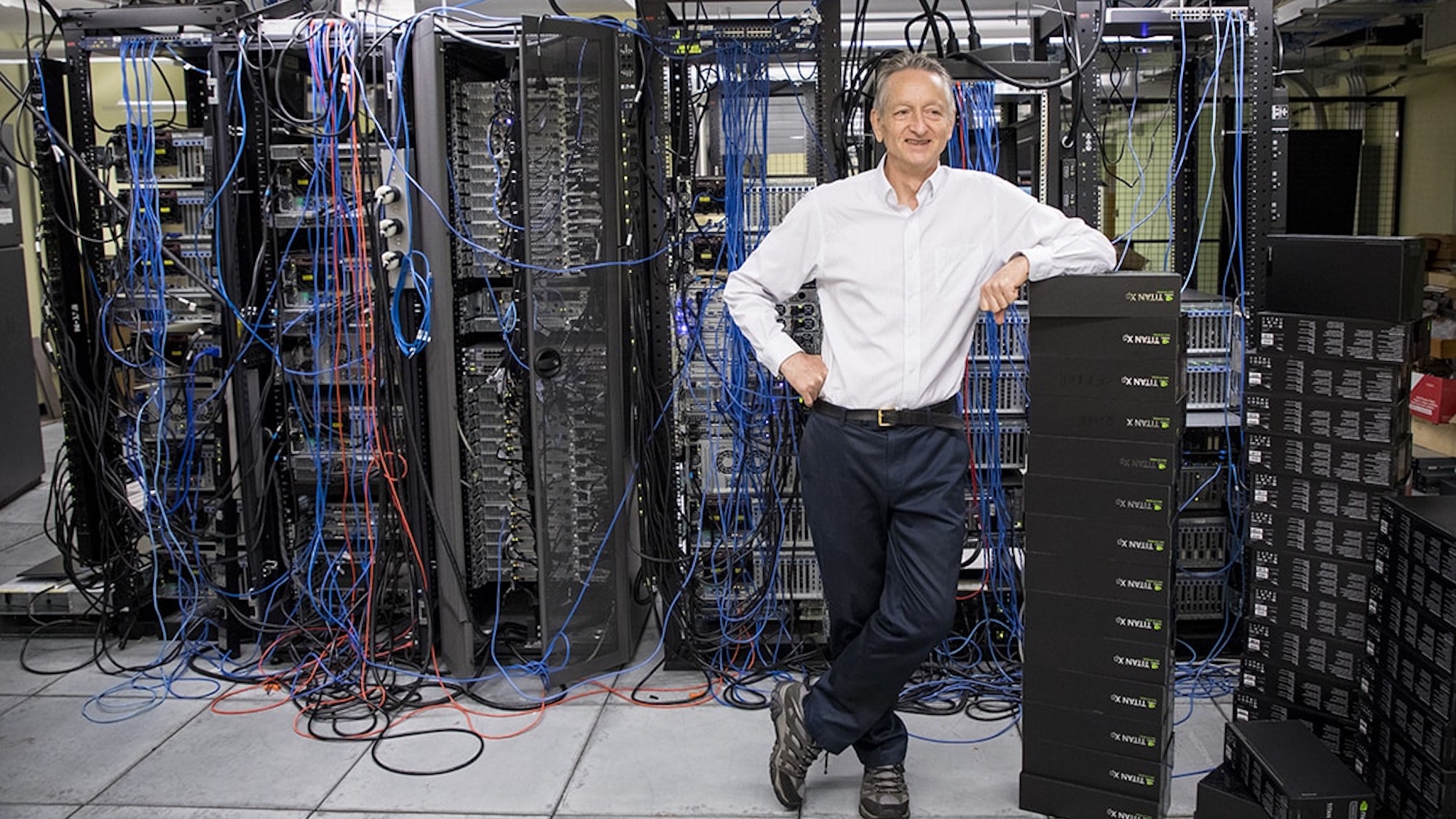

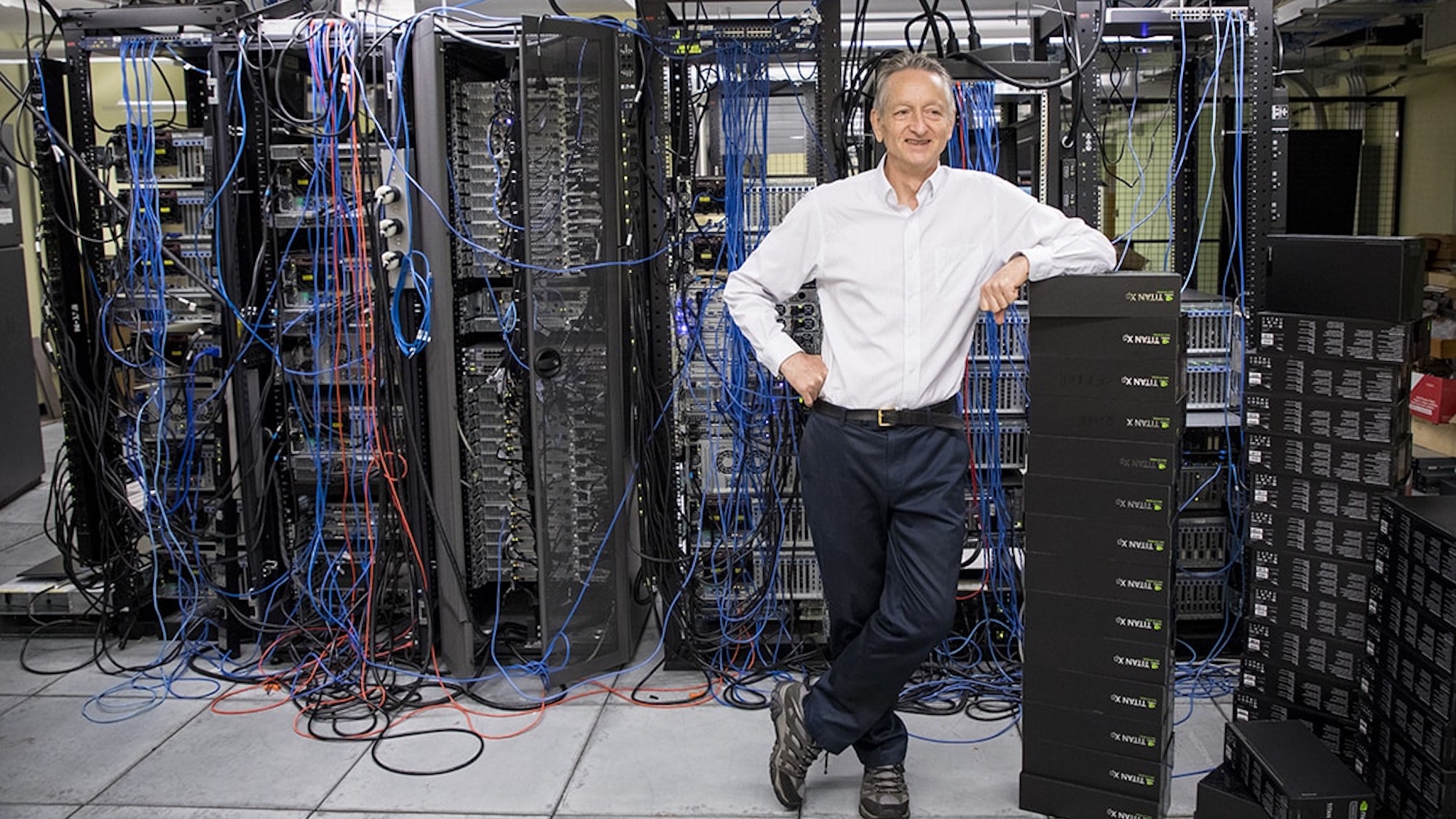

Geoffrey Hinton, known to some as the “Godfather of AI,” pioneered the technology behind today’s most impactful and controversial artificial intelligence systems. He also just quit his position at Google to more freely criticize the industry he helped create. In an interview with The New York Times published on Monday, Hinton confirmed he told his employer of his decision in March and spoke with Google CEO Sundar Pichai last Thursday.

In 2012, Hinton, a computer science researcher at the University of Toronto, and his colleagues achieved a breakthrough in neural network programming. They were soon approached by Google to work alongside the company in developing the technology. Although once viewed with skepticism among researchers, neural networks’ mathematical ability to parse immense data troves has since gone on to form the underlying basis of industry-shaking text- and image-generating AI tech such as Google Bard and OpenAI’s GPT-4. In 2018, Hinton and two longtime co-researchers received the Turing Award for their neural network contributions to the field of AI.

But in light of AI’s more recent, controversial advances, Hinton expressed to The New York Times that he has since grown incredibly troubled by the technological arms race brewing between companies. He said he is very wary of the industry’s trajectory with little-to-no regulation or oversight, and is described as partially “regret[ting] his life’s work.” “Look at how it was five years ago and how it is now. Take the difference and propagate it forwards,” Hinton added. “That’s scary.”

Advancements in AI have shown immense promise in traditionally complicated areas such as climate modeling and detecting medical issues like cancer. “The idea that this stuff could actually get smarter than people—a few people believed that,” Hinton said during the interview. “But most people thought it was way off. And I thought it was way off. I thought it was 30 to 50 years or even longer away. Obviously, I no longer think that.”

The 75-year-old researcher initially believed, according to The New York Times, that the progress seen by companies like Google, Microsoft, and OpenAI would offer new, powerful ways to generate language, albeit still “inferior” to human capabilities. Last year, however, private companies’ technological strides began to worry him. He still contends (as do most experts) that these neural network systems remain inferior to human intelligence, but argues that for some tasks and responsibilities AI may be “actually a lot better.”

Since The New York Times’ piece was published, Hinton has taken to Twitter to clarify his position, stating he “left so that I could talk about the dangers of AI without considering how this impacts Google.” Hinton added he believes “Google has acted very responsibly.” Last month, a report from Bloomberg featuring interviews with employees indicated many at the company believe there have been “ethical lapses” throughout Google’s AI development.

“I console myself with the normal excuse: If I hadn’t done it, somebody else would have,” Hinton said of his contributions to AI.