Ian Burkhart drapes his hand over a beige tube, squeezes his fingers, and lifts it into the air. For most, this movement would be no big deal: You think “grab that tube,” and your spinal cord whisks the command down to your hand, which does the deed before you even realize it. But for Ian, who lost the use of his arms below the elbow eight years ago, the process plays out differently.

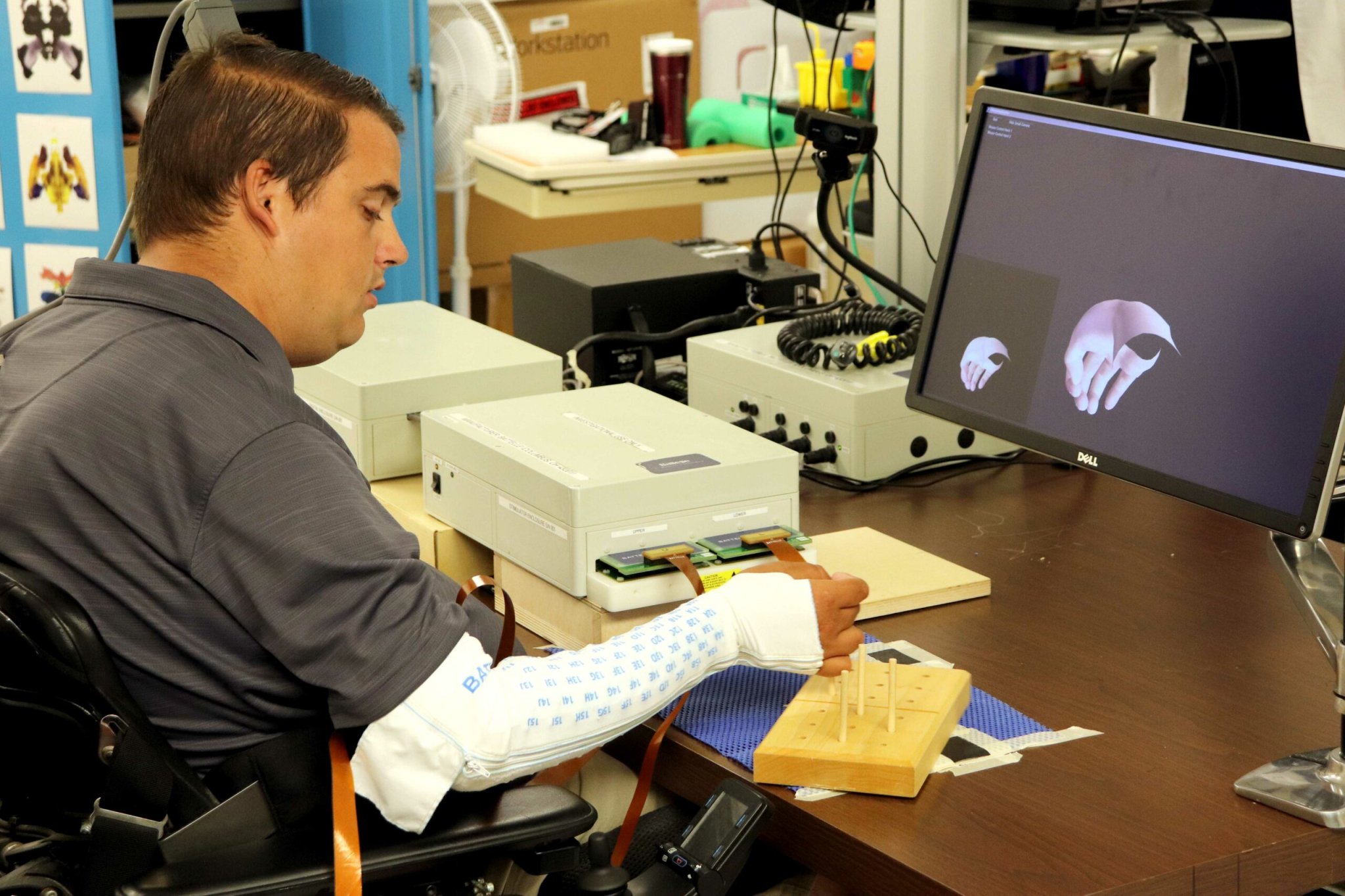

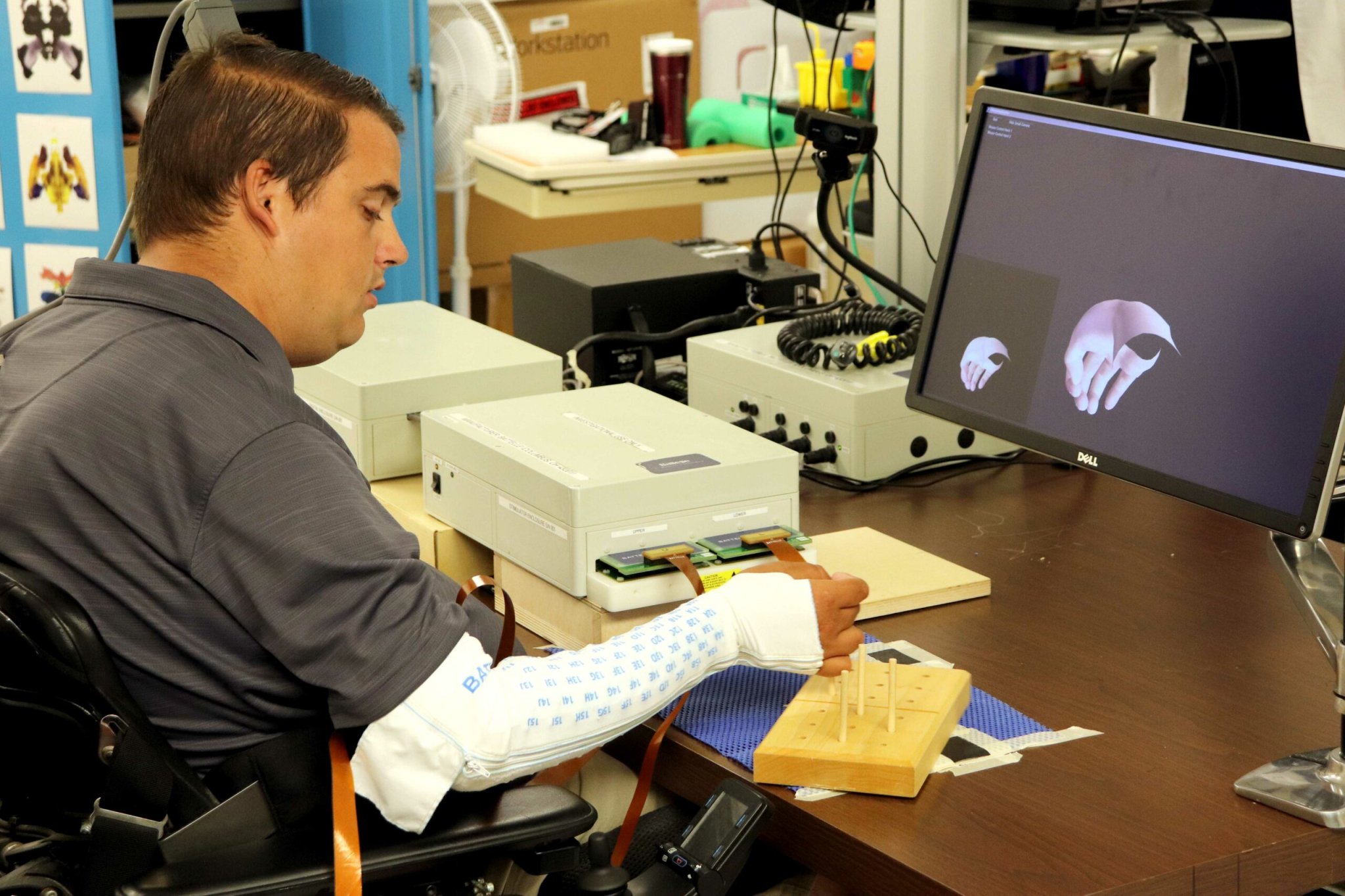

When he thinks “grab,” a bead-sized device in his brain picks up the resulting electric blips, a desktop computer interprets them, and a zippered sleeve jolts his forearm with electric current, moving his hand. Over the last five years Ian, who never expected to control his hand again, has learned to pour from a bottle, stir with a straw, and even play Guitar Hero. He regains the use of his right arm only in the lab, however, where engineers can plug him into a computer and run through the calibration session required to remind the machine how to interpret his thoughts.

This week the doctors and neuroscientists developing the interface described a new version that, for the first time in humans, harnesses a novel pairing of machine learning strategies to skip the tedious and repetitive training sessions. Eliminating the need to spend hours each day fiddling with the machine opens the door to Ian, and others like him, someday taking the device home. “Just being able to pick up a bottle and take a drink,” Ian says. “That’s something that would really change my life.”

Ian’s life hasn’t been the same since 2010, when he dove into the surf off the coast of North Carolina while on spring break with his college friends. He’d jumped into the ocean hundreds of times before, but that day a wave pushed his head into an invisible sandbar, breaking his neck. By the time his friends pulled him onto shore, he could feel the warmth of the sun only on his face.

Four years later, after a physical therapist referred him to an experimental collaboration between Ohio State University and private applied science company Battelle, surgeons implanted a tiny array of electrodes into the part of Ian’s brain that controls his right hand. They connected the sensor, which records electrical bursts of brain cell activity, to a small port in the back of his head that lets him jack directly into a computer, Matrix style. That’s where the real work begins: transforming millions of data points per second into a meaningful motion.

“When you do any movement with your hand, your motor cortex lights up in the hand area,” says David Friedenberg, a machine learning researcher at Battelle, “so the real trick is trying to figure out the difference between different hand movements, which is much more subtle.”

To tease out the nearly imperceptible differences between related brain signals, the team turned to neural networks—computer programs that find ways to separate similar-looking datasets by guessing and checking a bunch of different rules until they hit on one that works. During a typical four-hour lab session, Ian spends half the time thinking on cue about various hand movements so the neural network can learn which brain signal matches which motion, a technique known as “supervised” learning, and half the time running experiments. He found the exercises tough at first, but gradually gained control.

“I would leave sessions mentally drained,” Ian says. “But now it’s gotten to the point where [moving my arm] is something I have to focus on, but I can focus on that and still do other things at the same time.”

While Ian got better at commanding the machine, the machine hit a limit on how well it could read Ian. The baseline firing of your brain cells shifts from day to day depending on how much sleep you got, what medication you’re taking, and whether you indulged in that second cup of coffee, among other things, which means the patterns a brain interface learns on Monday may be useless by Tuesday. Without constant retraining based on a known sequence of movements, such systems fall apart in a matter of hours—a dealbreaker for those who would see these machines in homes, since users can’t spend hours each day setting up their devices.

One solution, which the team outlined Monday in a paper in Nature Medicine, is to incorporate a second neural network that can learn without requiring researchers to feed it named examples of movements—an example of “unsupervised” learning.

First the team trained a network in the traditional “supervised” way, with Ian demonstrating each motion command in turn by thinking it. Then, the unsupervised network took over. It monitored Ian’s thoughts as he used the device freely, but no one told it explicitly what he was doing. Rather, the machine used its setup training to guess what motions Ian was executing, and re-trained itself on the fly with those guesses. Imagine taking one Spanish course, and then trying to maintain those basic language skills on your own by watching a lot of Mexican soap operas. Having a teacher on hand to answer all your grammar questions would be more efficient, but with sufficient initial knowledge, the telenovela marathon may be enough to stem the decline.
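For readers who want to see the guess-and-retrain idea in miniature, here is a toy sketch in Python. It is not the team's system: it swaps the neural networks for a much simpler nearest-centroid classifier, and the data, the four "motions," the noise level, and the drift rate are all invented for illustration. A decoder is calibrated once with labeled examples, the baseline then shifts a little each simulated day, and the adaptive copy re-centers itself using only its own guesses.

```python
import numpy as np


def nearest_centroid(features, centroids):
    """Return the index of the closest centroid for each row of features."""
    dists = np.linalg.norm(features[:, None, :] - centroids[None, :, :], axis=2)
    return dists.argmin(axis=1)


def simulate(n_days=10, n_per_class=50, drift_per_day=0.5, seed=0):
    """Compare a fixed decoder with one that retrains on its own guesses.

    All numbers here are made up for illustration (assumption, not from
    the study): four motions, two signal features, slow baseline drift.
    """
    rng = np.random.default_rng(seed)
    true_centers = np.array([[0.0, 0.0], [4.0, 0.0], [0.0, 4.0], [4.0, 4.0]])
    labels = np.repeat(np.arange(4), n_per_class)

    # Supervised calibration: the user thinks each motion on cue, so the
    # decoder sees labeled examples and stores one centroid per motion.
    calib = true_centers[labels] + rng.normal(0, 0.5, size=(labels.size, 2))
    static = np.array([calib[labels == k].mean(axis=0) for k in range(4)])
    adaptive = static.copy()

    static_acc, adaptive_acc = [], []
    for day in range(1, n_days + 1):
        # The baseline shifts a bit further every day.
        baseline = drift_per_day * day
        x = true_centers[labels] + baseline + rng.normal(0, 0.5, size=(labels.size, 2))

        # The fixed decoder keeps using its day-0 centroids.
        static_acc.append(float((nearest_centroid(x, static) == labels).mean()))

        # The adaptive decoder guesses first (true labels are used only
        # for scoring), then re-centers each motion on its own guesses.
        guesses = nearest_centroid(x, adaptive)
        adaptive_acc.append(float((guesses == labels).mean()))
        for k in range(4):
            if np.any(guesses == k):
                adaptive[k] = x[guesses == k].mean(axis=0)

    return static_acc, adaptive_acc


if __name__ == "__main__":
    static_acc, adaptive_acc = simulate()
    print("fixed decoder, final day:   ", static_acc[-1])
    print("adaptive decoder, final day:", adaptive_acc[-1])
```

In this toy setup the fixed decoder should slide toward chance as the baseline wanders, while the self-retraining copy keeps pace. The trick only works here because each day's shift is small compared with the gap between motions. If the guesses go badly wrong, the decoder retrains on its own mistakes.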

In the end, the hybrid system interpreted Ian’s motion signals correctly more than 90 percent of the time for an entire year with no retraining. It also outperformed the previous system by nearly 10 percent. Other groups have done related work in monkeys, but Friedenberg says this is the first time such a device has worked so consistently in humans.

The system proved a quick study for new motions too. While it started out with a vocabulary of just four rough hand motions, such as flexing the wrist, the device was able to learn to open Ian’s hand with nearly 90 percent accuracy after just seven minutes of input from Ian. “You only need a couple of examples to say, ‘this is the basic pattern I’m looking for,’ and then the rest of the data is helping to counteract those baselines that might be shifting around,” Friedenberg explains.

Everyone has high hopes for the project’s next steps. “I’d just like to get it outside the lab and put it through its paces,” Ian says.

To do that, they’ll need to make it a lot smaller. The current interface, which consists of seven big boxes sprawling across a table, is hardly portable. The team aims to shrink that machinery down into one device, about the size of a hardback book, that will fit on Ian’s wheelchair.

Still, the new self-training system represents a step toward the ultimate goal. With eight hours of data from daily use, Friedenberg expects the system will barely need future users to train it at all. Ian is especially looking forward to the day when he’ll be able to operate this device, or a similar one, without an engineer on hand. While he says he doesn’t expect to play the piano, just being able to get a good grip on packages and kitchen utensils would let him cook, do chores, and generally become more independent.

“It’s really restored a lot of the hope that I have for the future to know that a device like this will be possible to use in everyday life,” he says, “for me and for many other people.”