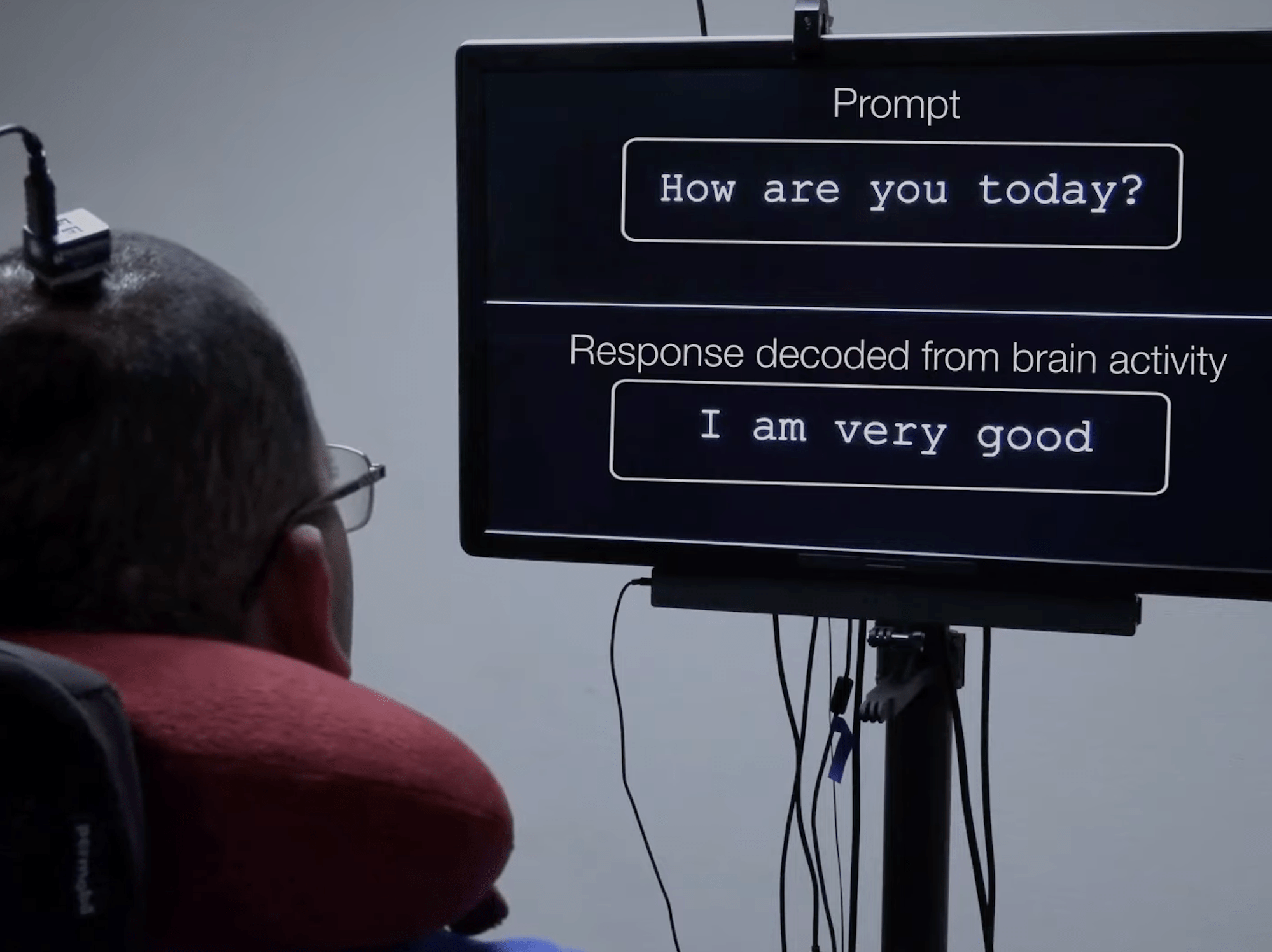

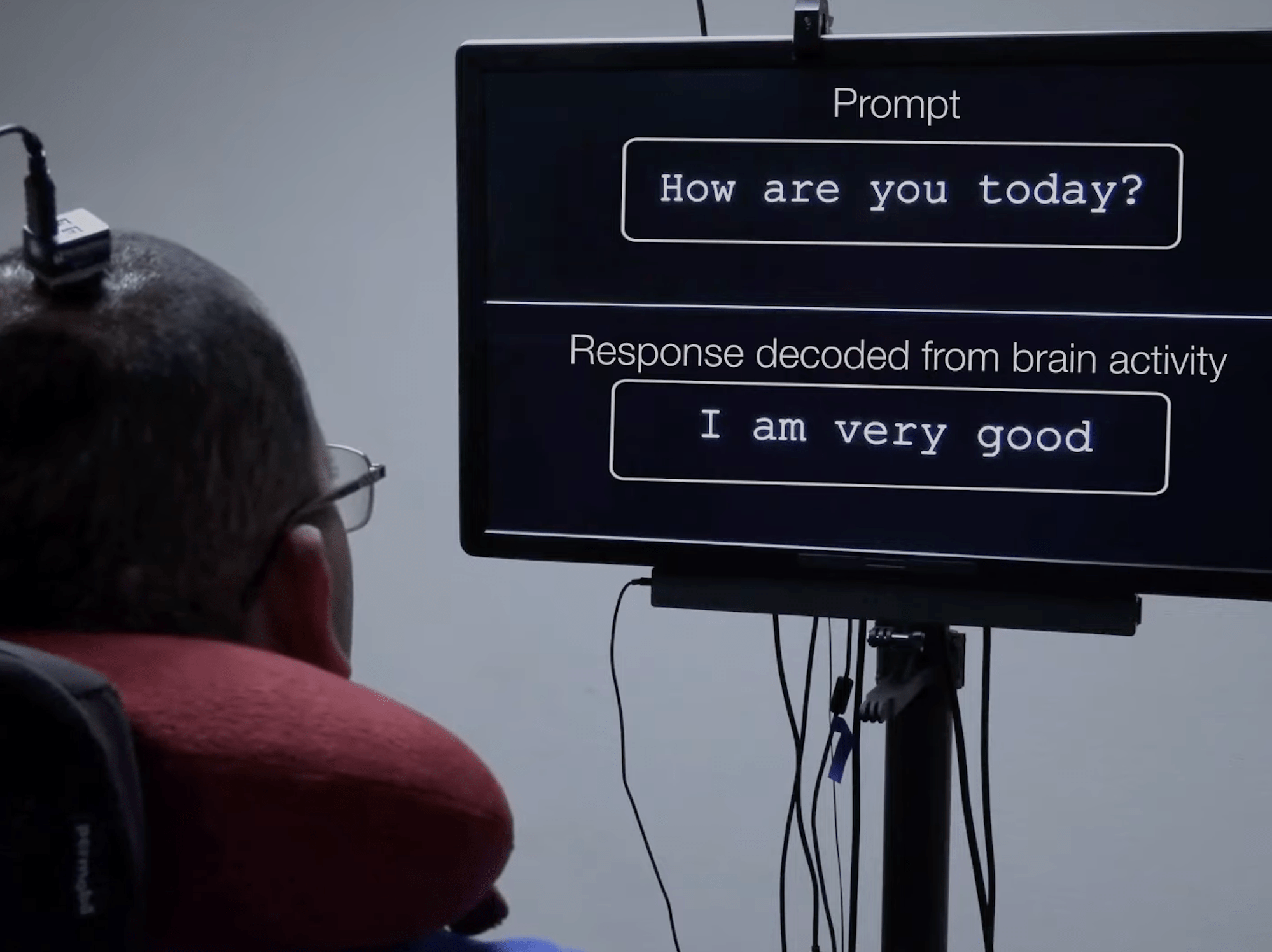

Improvements to a brain implant developed by researchers at the University of California Berkeley are giving a paralyzed man who cannot speak the ability to communicate by translating his brain signals directly into text. The research, published this week in the journal Nature Communications, demonstrates how the patient can now “type” from a vocabulary of roughly 1,150 words at a speed of about 29 characters per minute (roughly seven words per minute), with 94 percent accuracy, simply by silently attempting to spell.

Although a version of the device was first described last year in The New England Journal of Medicine, its capabilities were then far more restricted than those of the current iteration. It relied on the patient attempting to speak words aloud, which a computer system then translated into text.

“Neuroprostheses have the potential to restore communication to people who cannot speak or type due to paralysis. However, it is unclear if silent attempts to speak can be used to control a communication neuroprosthesis,” reads the research group’s paper abstract.

To tackle this issue, the team used deep learning and language modeling techniques to decode letter sequences as the test patient silently spelled words using the NATO phonetic alphabet (“alpha” for “a,” “bravo” for “b,” and so on).

“The NATO phonetic alphabet was developed for communication over noisy channels,” one of the study’s co-authors told Live Science this week. “That’s kind of the situation we’re in, where we’re in this noisy environment of neural recordings.” When the patient silently attempts to say the code words, algorithms decode the brain activity recorded by a network of 128 electrodes previously laid across his brain’s surface, particularly atop a region controlling vocal tract muscles and an area involved in hand movements. To end a sentence, the trial participant attempted to squeeze his right hand, which the system interpreted as the sentence’s endpoint.
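For a rough sense of the spelling scheme, here is a toy Python sketch that turns a stream of already-recognized code words into text and stops at the end-of-sentence signal. It is only an illustration and not the study's decoder: it assumes the hard part (recognizing code words from neural activity) has already been done, and the names `CODE_TO_LETTER`, `END_OF_SENTENCE`, and `spell` are hypothetical.

```python
import string

# The 26 code words, in order of the letters they stand for.
# Spellings follow the article ("alpha"); "xray" is written without a hyphen here.
NATO_WORDS = [
    "alpha", "bravo", "charlie", "delta", "echo", "foxtrot", "golf",
    "hotel", "india", "juliett", "kilo", "lima", "mike", "november",
    "oscar", "papa", "quebec", "romeo", "sierra", "tango", "uniform",
    "victor", "whiskey", "xray", "yankee", "zulu",
]
CODE_TO_LETTER = dict(zip(NATO_WORDS, string.ascii_lowercase))

# Hypothetical token standing in for the attempted right-hand squeeze.
END_OF_SENTENCE = "<squeeze>"


def spell(tokens):
    """Map a sequence of code words to letters, stopping at the end signal."""
    letters = []
    for token in tokens:
        if token == END_OF_SENTENCE:
            break  # the squeeze marks the end of the sentence
        letter = CODE_TO_LETTER.get(token.lower())
        if letter is not None:  # ignore anything that is not a code word
            letters.append(letter)
    return "".join(letters)


print(spell(["hotel", "echo", "lima", "lima", "oscar", END_OF_SENTENCE]))
```

In the actual system, each code word has to be inferred from noisy neural recordings, which is where the deep learning and language modeling described above come in; the lookup table here covers only the final letter-mapping step.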

For now, the device requires a wired connection to operate, but the researchers hope to one day transition to a completely wireless interface. They also believe the abilities developed in the earlier experiment and in the most recent one could be combined into a system that works for both vocal and silent speaking attempts.