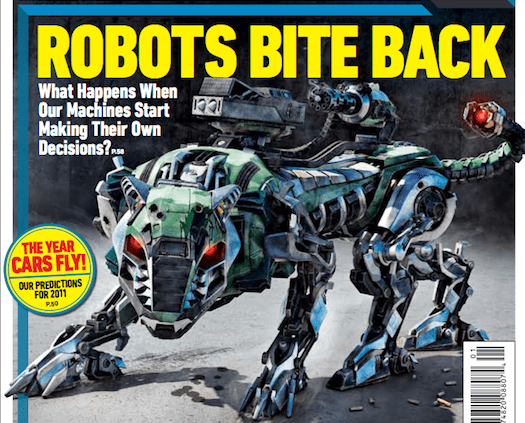

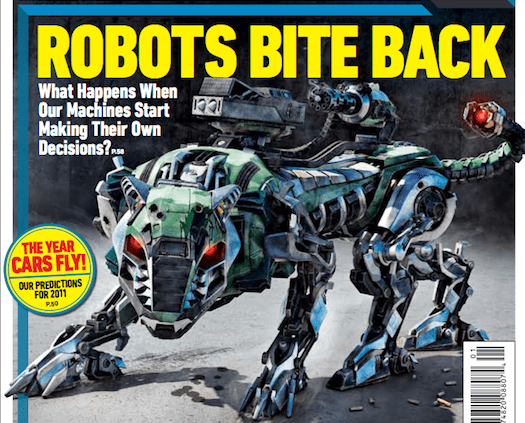

When writing a truly grabby headline about robots, you generally have two options.

First, there’s the robot uprising reference. Whether direct (see Gizmodo’s “ATLAS: Probably the Most Advanced Humanoid Yet, Definitely Terrifying,” about, get this, an unarmed robot designed for use in a competition to develop life-saving first-responder bots) or simply name-dropping (the Telegraph’s “Terminator-style self-assembling robots unveiled by scientists,” about tiny cube-shaped bots that can, wait for it, awkwardly wobble towards one another), the result is the same. It’s a reference, and often a zany one, to the notion of machines rebelling against their creators and committing genocide. Do the writers and editors involved actually buy into the SF prophecy they’re tapping into? Do they honestly think that James Cameron has seen our future, and that it’s littered with human skulls, crushed underfoot by autonomous laser tanks and skeletal, crimson-eyed bogeymen? Well, who knows? It’s just a joke, right? A total knee-slapper, like most hilarious predictions of our extinction by mass, systematic slaughter.

That’s one method of getting you (and me) to look at robot news. Another is to ditch all pretense and get right to the fear-mongering: Killer robots are coming to get you.

Take, for example, the story by Joshua Foust on Defense One that ran earlier this month, entitled “Why America Wants Drones That Can Kill Without Humans.”

But when it was cross-posted to sister site Quartz, the title had morphed into something even more confident: “The most secure drones will be able to kill without human controllers.”

Pause for a moment. Drink it in. Feel the certainty emanating from the syntax in both headlines. There’s no question mark, no caveats or reservations. The government wants these systems. Secure drones will kill without human control. As surely as drones will continue to be built and deployed, and as surely as each of us will eventually die, drones will kill without human controllers.

What follows, though, is a story that appears to prove the exact opposite. It initially raises the specter of the lethal autonomous robot, or LAR, as a topic of heated debate in academic and military circles. Quotes from experts help to define the possible benefits of such a hands-off killbot—it would be less prone to hacking, and better at aiming, than today’s remote-controlled systems—and then proceed to trash the entire concept.

“The idea that you could solve that crisis with a robotic weapon is naïve and dangerous,” says one professor, talking about Syria. “Ultimately, the national security staff…does not want to give up control of the conflict,” says another expert, a fellow at the Brookings Saban Center, adding, “With an autonomous system, the consequences of failure are worse in the public’s mind. There’s something about human error that makes people more comfortable with collateral damage if a person does it.”

The story ends with a final expert quote, from a naval postgrad professor: “I don’t think any actor, human or not, is capable of carrying out the refined, precise ROEs [rules of engagement] that would enable an armed intervention to be helpful in Syria.”

This seems to be an extremely responsible story about all the reasons LARs are a bad idea. What it’s missing, though, and what every story about this looming threat also leaves out, is anyone on the record talking about wanting to unleash robots into a war zone. Every military and robotics expert I’ve ever spoken to repeats the same sentiment, as drilled and rehearsed as any proper talking point—there has to be a “human in the loop.” Someone who either guides the drone’s reticle over a target and pulls the trigger, or, in theory, tells the robot, “Go ahead, kill that guy.”

So here’s my question: Where are these unnamed, off-the-record maniacs, the ones just itching to send a drone into some designated kill-box, where it will use its own algorithmic judgment to decide who to ignore, and who to pulp? Are these military personnel, the kinds of people who know (some of them from firsthand experience) just how insidious friendly fire is, or how often any piece of technology can and will backfire? Are they DoD-funded roboticists, the same people whose own bots routinely grind to a halt in the lab, for whom failure is an expectation, and the best hope is to achieve a less embarrassing margin of success?

My intention isn’t to pick on Foust; he’s an excellent writer and reporter. And whoever wrote the various headlines attached to his piece, as well as the outlets that co-opted the dirge-like tone of that display copy in their own stories and posts about the Defense One piece, with titles such as “Coming Soon, Lethal Autonomous Robots that Can Kill on Their Own Volition” and “Unmanned Drones to Make Strike Decisions,” are just swimming with the tide. This is how the extremely serious, extremely sobering business of covering the future of drones is handled: by implying and suggesting into existence a horde of inevitable, untethered death machines. And who wants these doomsday devices? Why, hordes of bloodthirsty straw men, of course.

For the record, I’ve contributed to this ugly tradition myself, both unintentionally and by not properly vetting or framing rumors and product hype. I accidentally started a false urban legend about armed ground bots aiming at military personnel. I rounded up scary foreign robots that might never go into development, including an early blurb about South Korea’s robot sentry tower, an oft-cited example of killbots being a reality: an armed, self-described autonomous system training its sinister guns and sensors across the DMZ. That blurb is now listed as an external source in the system’s Wikipedia entry. That the glitchy ground robot story was scrambled in a game of internet telephone, or that the Korean kill tower would only theoretically open fire if it were ordered to, negating its status as an automatic death machine, is all but irrelevant. The examples, however unsupported or incomplete, speak for themselves. The autonomous death bots are already here, proof that more are on the way. Never mind that the only confirmed example is an experimental sentry tower that’s never fired on a person, and whose partial autonomy is at the beck and call of a human operator.

At the heart of this ongoing discussion of the inevitability of self-guided murderers is the notion that the tech exists, and it’s just a matter of deploying it. A robotic anti-aircraft gun, the kind that can acquire and fire on incoming missiles and aircraft without operator intervention, malfunctioned in 2007, killing nine soldiers. Couldn’t that happen again, on purpose? Sure. It’s technically possible. But it’s also about as unlikely as North Korea dropping a nuke on U.S. soil and ensuring its own annihilation. No offense to the vast missile defense industry that’s used North Korea to justify its post-Cold War funding, but capability doesn’t equal reality. If we don’t apply logic to the world around us, then we’re either buying into someone else’s hype, or simply terrorizing and distracting ourselves with phantom threats. The prerequisite for LARs to be used would be the combined lunacy of hundreds, if not thousands, of politicians, military personnel, and researchers. Government shutdown jokes notwithstanding, the halls of the Pentagon aren’t a crossfire of Three Stooges-style pie-throwing idiocy, nor are they stalked by ghouls desperate to find new ways to rack up collateral damage. Even if the most cartoonish version of the DoD is real, and it’s dumb enough and/or evil enough to pursue its reported quest for LARs, shouldn’t we wait for the first actual announcement or field test of such a system, instead of preemptively shrieking at Terminator-sized shadows?

There’s a very real possibility that I’m wrong about all of this. Maybe I just want to rain on everyone’s killbot parade, because that’s what you do on the internet: tell those who are having fun to knock it off. So I’m opening up the comments. If there are real examples of real people requesting a fully autonomous armed drone, one that would be cleared to select and attack its targets without human authorization, let us know. And maybe the editors and writers I’m talking about think their headlines and stories aren’t misleading and sensationalistic. Maybe the robots are coming for us, after all.

If so, stop trading in what-ifs, unsourced chatter, and thought experiments disguised as journalism. There’s enough to cover related to actual remote-piloted drones that fire actual missiles at actual human beings to occupy us for the next 20 years. By then, perhaps there’ll be a reason to set robots to kill at will.

How about UFOs? I’ve seen a lot of hard-hitting news stories about those, too.