Think of the most fussy science teacher you ever had. The one who docked your grade if the sixth decimal place in your answer was rounded incorrectly. Now imagine work that even that teacher would hate for being too anal-retentive. That’s the kind of work that goes into defining the meter, the second, and other international standard units of measure.

Here’s a look at some ridiculously precise standards and the role that elements have played in defining, redefining, and re-redefining them over the ages.

There are seven base units in the international metric system, and over the past century, metrologists (people for whom measurement isn’t the start of science—it is science) have gotten increasingly picky about defining these seven quantities. And it turns out that some of the best tools metrologists have to make measurements are elements on the periodic table. Unlike even the top measuring instruments, elements are exactly the same everywhere, allowing for perfectly reproducible results. And the sheer variety of the table ensures that, no matter what obscure task you have in mind, there’s probably an element for that.

The Meter

The first definition of the meter wasn’t bad, for the 1790s: Exactly one ten-millionth of the distance between the Equator and the North Pole, as measured through Paris. Unfortunately, scientists botched the measurement, and the length of the meter that came into common use was later found to be 0.2 mm off the supposed definition, an intolerable gap.

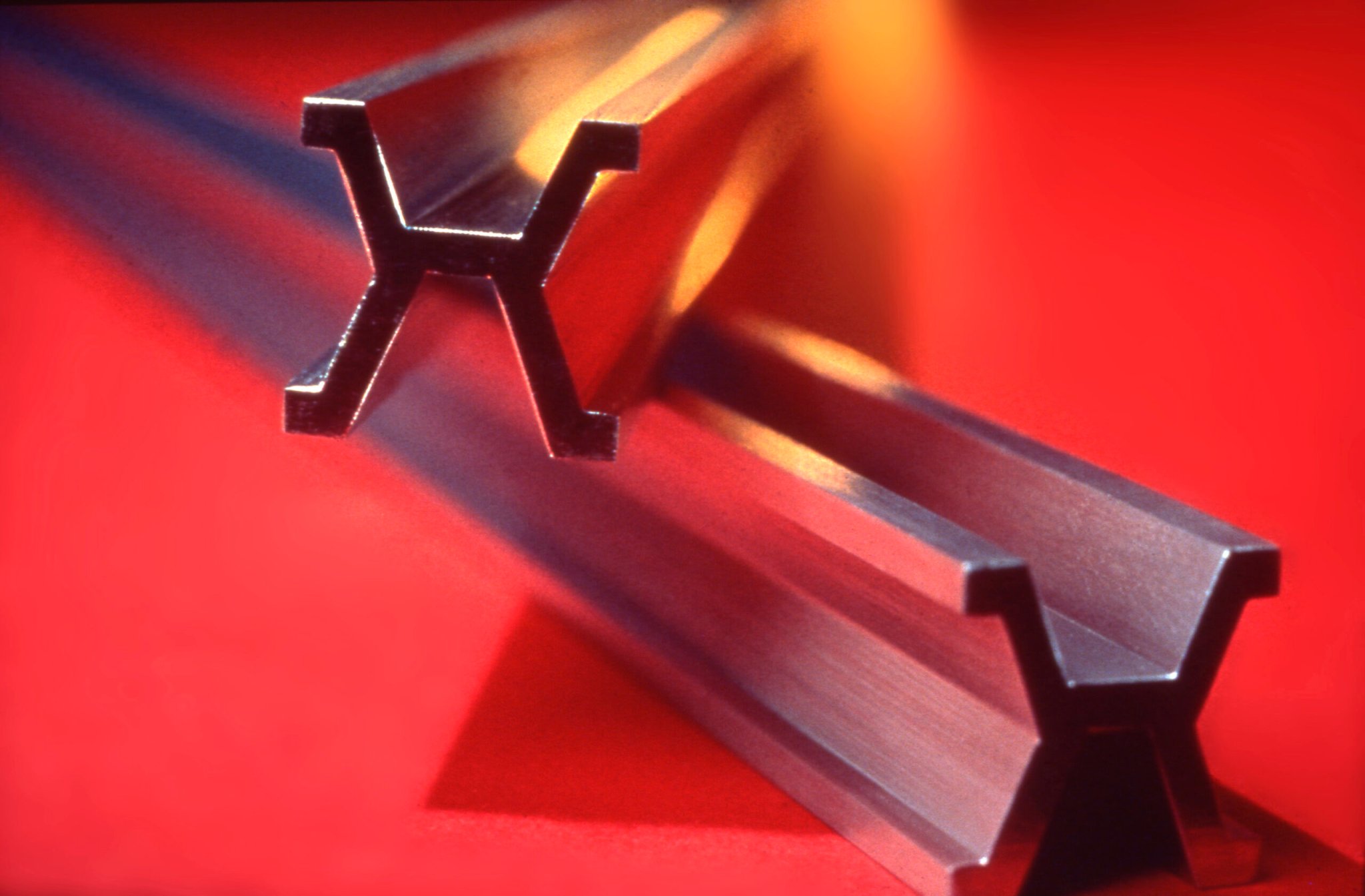

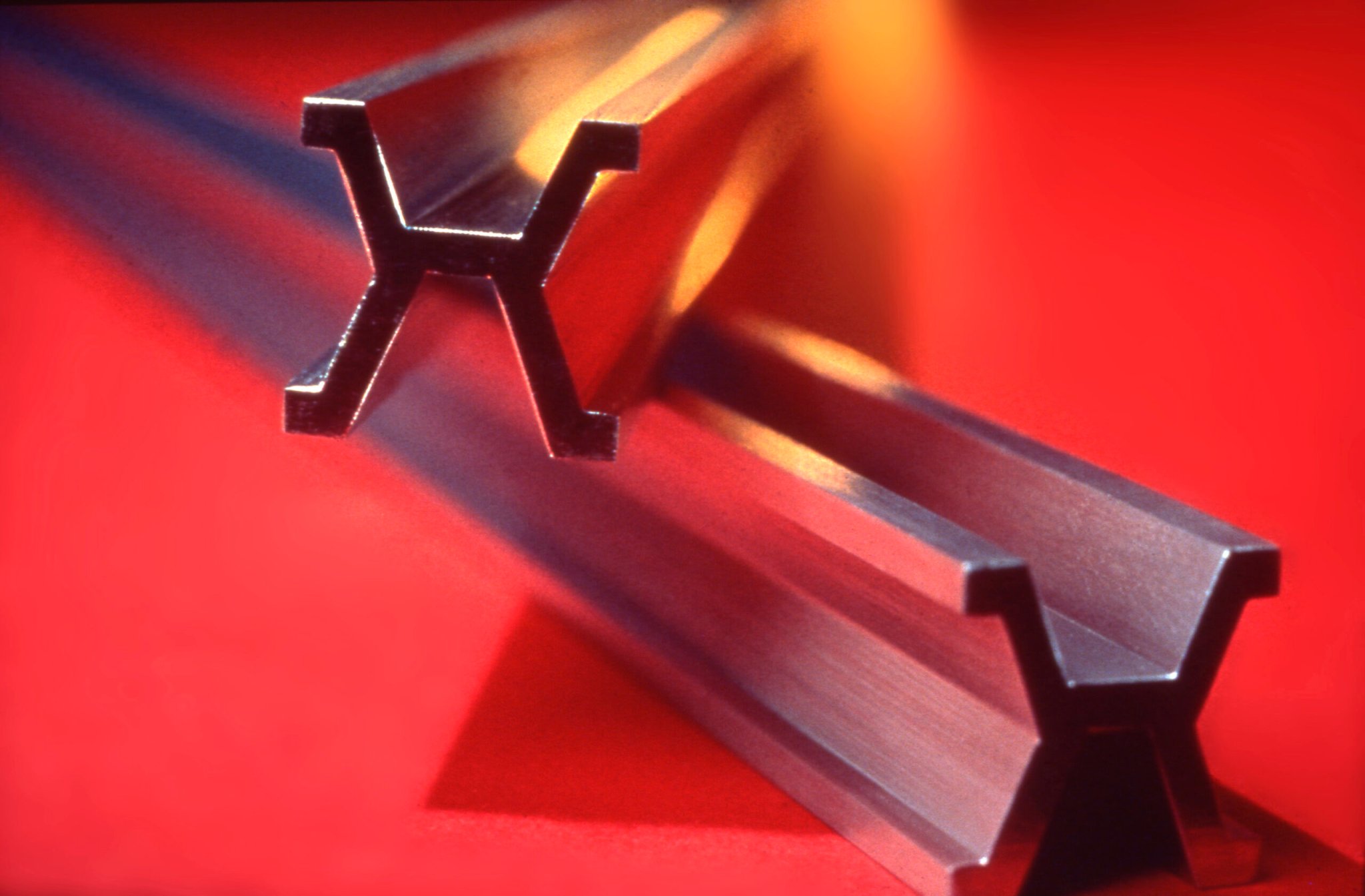

So in 1889 scientists replaced the meridian definition with a long bar made of the elements platinum and iridium. Someone made a scratch near one end of the bar, then made a scratch near the other, and the distance between the scratches became, from then on, 1.000000… meter, to as many decimal places as you like.

But defining a meter this way only raised more questions. Like what temperature are we talking about? Things expand when they heat up, after all. And what’s the geometry here? A rod that length will droop if not supported properly, and will droop differently depending on where it’s supported. To head off any ambiguities, scientists decided the rod had to be measured at 0°C and standard atmospheric pressure, and supported on two cylinders one centimeter in diameter, with each of the cylinders in the same horizontal plane and 571 millimeters apart.

Naturally, this definition was no good, either. For one, it’s questionable to use centimeters and millimeters to define a meter. For another, on a microscopic scale the scratches have their own width—where does the measurement start? Even worse, metrologists hated that the definition relied on an artifact, a man-made object, since this was supposed to be a universal unit, not the property of one country. (Indeed, the fact that scientists from other countries sometimes had to hike it to Paris and cool things down to 0°C and make their own scratches on an identical rod and bring it back home was a hindrance to spreading the standard.)

What metrologists coveted was an “operational” definition—they wanted to discover a physical process that would produce something with a magnitude of exactly one meter every time. To put it more colloquially, and anachronistically, scientists were after an “e-mailable” definition—a purely verbal set of instructions that could be sent around the world, and that would allow scientists anywhere to perform an experiment and reproduce the same meter.

Scientists finally achieved this goal in the 1960s, with the noble gas krypton. All noble gases (think of “neon” lights) emit strong, colored light when excited, and krypton happens to emit a real beauty, a sharp beacon of orange light that’s easy to measure. So a meter became 1,650,763.73 wavelengths of this orange light from a krypton-86 atom. That’s an e-mailable definition, since all krypton atoms are identical, and any scientist who needed one could just pick up a krypton discharge tube. Scientists had finally relegated the platinum-iridium rod to the velvet casket of a museum.
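For the curious, the krypton definition can be turned around to ask how long one of those orange wavelengths is. Here is a minimal sketch in Python; the constant and function names are made up for illustration, and the only input is the wavelength count quoted above.

```python
from fractions import Fraction

# Wavelengths of krypton-86's orange light per meter, as quoted in the text.
# (The variable and function names here are illustrative, not official.)
WAVELENGTHS_PER_METER = Fraction("1650763.73")

def krypton_wavelength_m():
    """Length of one wavelength in meters, as an exact fraction."""
    return 1 / WAVELENGTHS_PER_METER

# Convert to nanometers for a more readable number.
wavelength_nm = float(krypton_wavelength_m() * 10**9)
print(f"One krypton-86 wavelength is about {wavelength_nm:.2f} nm")
```

That works out to roughly 605.78 nanometers, which sits in the orange part of the visible spectrum, as you’d hope.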

Never satisfied, though, metrologists redefined the meter again in 1983, getting rid of even the krypton atom. A meter is now the distance light travels in a vacuum in 1/299,792,458th of a second.
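The arithmetic behind that definition is easy to check. The sketch below, again in Python with hypothetical names, treats the speed of light as exactly 299,792,458 meters per second (which is what the definition implies) and uses exact fractions so no rounding creeps in.

```python
from fractions import Fraction

# Implied by the 1983 definition: light covers exactly this many meters
# in one second. (Names are illustrative only.)
SPEED_OF_LIGHT_M_PER_S = 299_792_458

def light_distance_m(seconds):
    """Distance in meters that light travels in a vacuum in `seconds`."""
    return SPEED_OF_LIGHT_M_PER_S * Fraction(seconds)

# The interval named in the definition gives back exactly one meter.
one_meter = light_distance_m(Fraction(1, 299_792_458))

# The same interval expressed in nanoseconds.
time_ns = float(Fraction(1, SPEED_OF_LIGHT_M_PER_S) * 10**9)
print(f"{one_meter} m in about {time_ns:.4f} ns")
```

In other words, light crosses a meter in a little over 3.3 billionths of a second.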

Of course, that definition assumes you know how long a second really is…

Tune in tomorrow for the next installment of our exploration of the standards that make science tick. The series is written by Sam Kean, author of The Disappearing Spoon—a collection of funny and peculiar stories hidden throughout the periodic table.