A smartphone app may provide a new answer to an old problem for the visually impaired: tree branches at head height.

While guide dogs and walking canes can prevent a person from tripping over objects on the ground, they’re little help for spotting branches hanging over a sidewalk or a partially open garage door.

Computer scientists in Spain have developed an app, called Aerial Object Detection, that recognizes and monitors head-level obstacles as a blind person approaches them, the team reports this month in the IEEE Journal of Biomedical and Health Informatics.

“It’s a fascinating app, and it tackles a real problem that is not addressed well by existing technologies,” says James Coughlan of the Smith-Kettlewell Eye Research Institute in San Francisco, who wasn’t involved with the work.

Here’s the setup. Users wear the smartphone like a necklace, with the camera facing out. A horizontal swipe across the screen selects the navigation mode: telemeter or obstacles. The first works indoors, making the phone beep or vibrate as a person approaches an obstacle like a wall or an open cabinet.

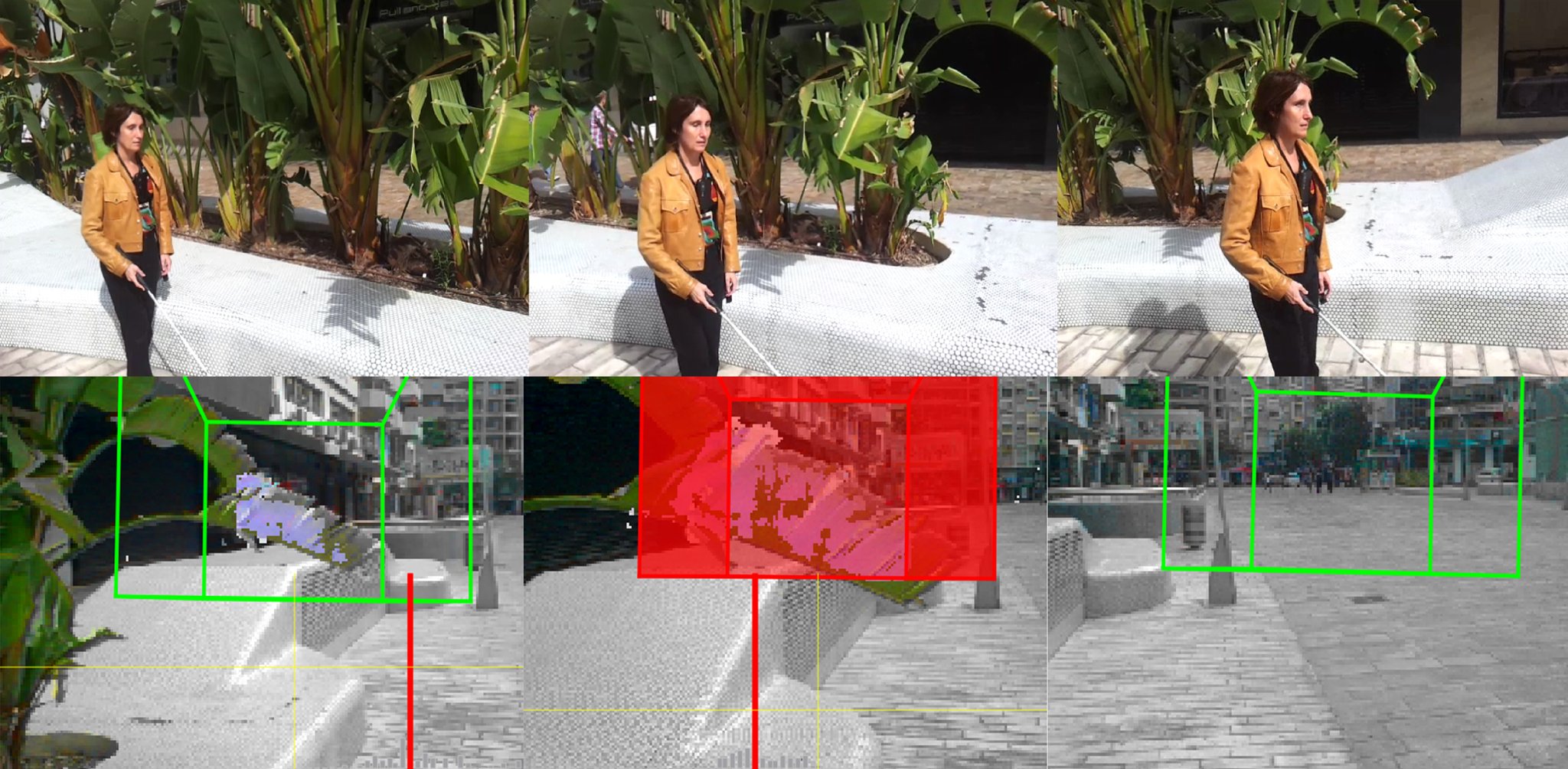

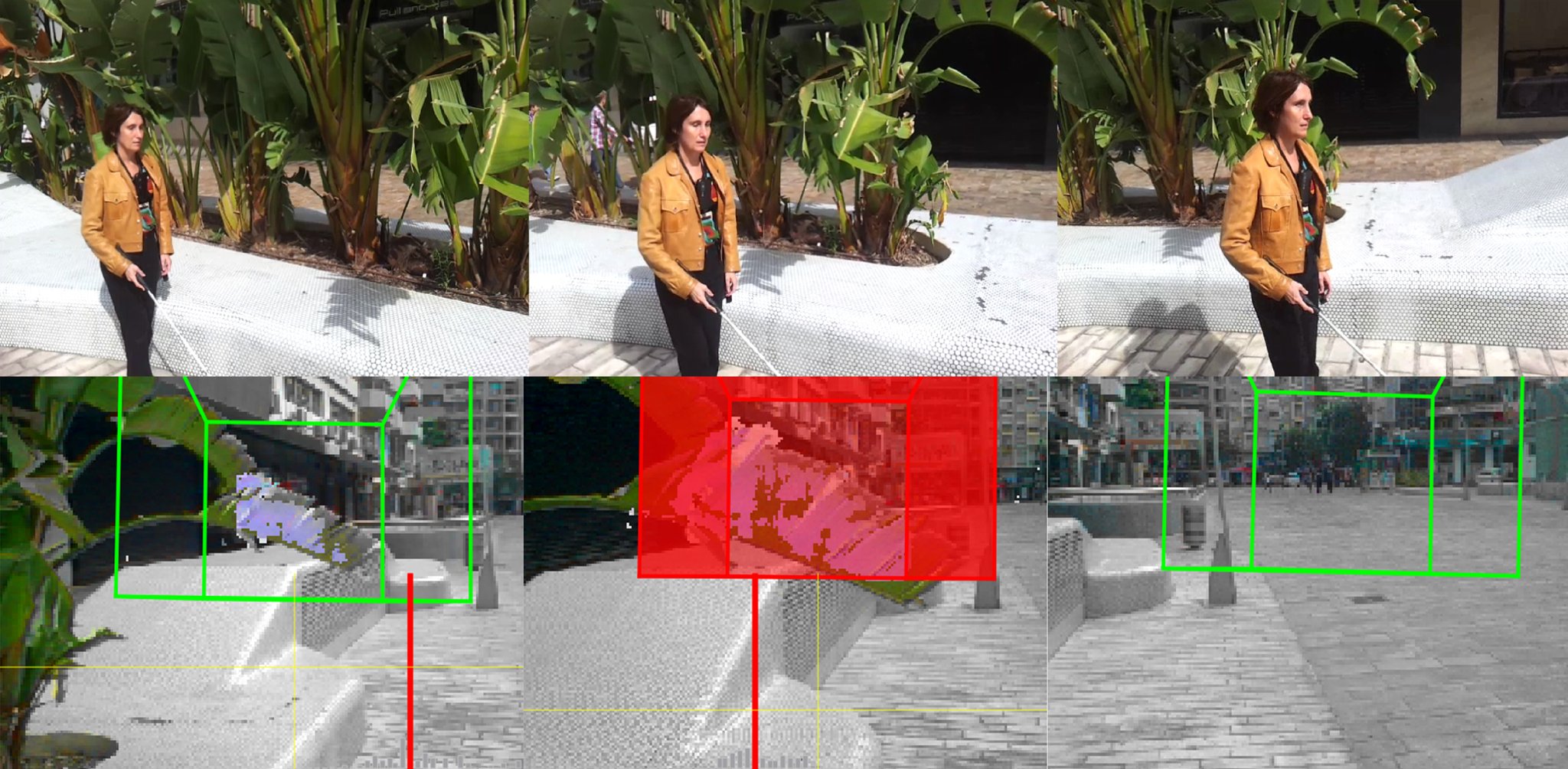

The obstacles mode detects danger outdoors. The app uses the phone’s 3-D camera to scan the oncoming landscape. A tree branch becomes a cloud of points, and since the camera perceives depth, the app can figure out the absolute distance between a walker and the object. At the same time, motion sensors calculate the person’s heading and speed, so the app can time and tailor the alert.
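To make the timing idea concrete, here is a minimal Python sketch of how a depth-based alert could be scheduled. It is not the team's code: the coordinate convention (x to the right, y up, z forward, in meters from the camera), the head-level band, the corridor width, and the three-second warning window are all assumptions chosen for illustration, as are the function names.

```python
# Illustrative sketch only; thresholds and names are assumptions, not the app's.

def nearest_head_level_obstacle(points, head_band=(0.2, 0.7), half_width=0.4):
    """Return the forward distance (m) to the closest point in the walker's
    head-level corridor, or None if the corridor is clear.

    points: iterable of (x, y, z) tuples in meters, relative to the camera.
    head_band: assumed vertical range above the chest-worn camera.
    half_width: assumed half-width of the walking corridor.
    """
    nearest = None
    for x, y, z in points:
        if z <= 0:
            continue  # behind the camera
        in_corridor = abs(x) <= half_width and head_band[0] <= y <= head_band[1]
        if in_corridor and (nearest is None or z < nearest):
            nearest = z
    return nearest


def seconds_to_contact(distance_m, speed_mps):
    """Time until the walker reaches the obstacle at the current speed."""
    if distance_m is None or speed_mps <= 0:
        return None  # nothing ahead, or the walker is standing still
    return distance_m / speed_mps


def should_alert(points, speed_mps, warn_seconds=3.0):
    """Alert when the estimated time to contact drops below the warning window."""
    t = seconds_to_contact(nearest_head_level_obstacle(points), speed_mps)
    return t is not None and t <= warn_seconds


# A branch 3 m ahead at head height, a post off to the side, a curb below.
cloud = [(0.0, 0.4, 3.0), (2.0, 0.4, 1.0), (0.0, -1.0, 0.5)]
print(should_alert(cloud, speed_mps=1.5))  # brisk walk
print(should_alert(cloud, speed_mps=0.5))  # slow shuffle
```

Dividing distance by walking speed is the simplest possible estimate; a real system would also have to account for the walker's heading and for noise in the depth data.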

Other apps for the visually impaired can read the colors of signs or find crosswalks, but Aerial Object Detection is the first smartphone-based solution that uses 3-D vision. For the app to help the world’s 39 million blind people and 246 million people with low vision, though, it’ll need a platform.

“The future really depends on the existence of 3-D mobiles,” says designer Francisco Escolano of the University of Alicante in Spain. Only two brands have reached the global market, and even though Amazon introduced a new model in June, some wonder whether 3-D phones will gain mainstream traction. The team expects patent approval for the app within the next year, at which point it’ll hit Android stores (iPhones don’t sport 3-D cameras).

In the meantime, Escolano and his colleagues are developing apps that work on Google Glass or regular smartphones, which have monocular (single-camera) vision. The biggest hurdle is that monocular vision can only determine relative distances, meaning it needs a reference object, like a park bench, in the background to judge a scene. Over the next year, as part of a national project, the team is building a library of common outdoor objects, such as traffic signs or bus stops, that can serve as beacons for a monocular app.
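Why a reference object helps can be shown with a little geometry. The Python sketch below uses the standard pinhole-camera relation (distance = focal length × real size ÷ apparent size) to place a known object, then uses that one absolute distance to rescale the relative depths of everything else. The focal length, bench height, and depth values are made-up numbers, and the function names are hypothetical; the article does not describe how the team's monocular app will actually work.

```python
# Illustrative sketch only; all numbers and names are assumptions.

def distance_from_reference(focal_length_px, real_height_m, pixel_height):
    """Pinhole-camera estimate of the distance (m) to an object of known size."""
    if pixel_height <= 0:
        raise ValueError("pixel_height must be positive")
    return focal_length_px * real_height_m / pixel_height


def to_absolute_depths(relative_depths, reference_name, reference_distance_m):
    """Rescale unitless relative depths so the reference object sits at its
    known distance; every other object inherits the same scale."""
    scale = reference_distance_m / relative_depths[reference_name]
    return {name: depth * scale for name, depth in relative_depths.items()}


# A park bench assumed to be 0.9 m tall appears 150 pixels tall in the image,
# seen through a camera with an assumed focal length of 1000 pixels.
bench_m = distance_from_reference(1000, 0.9, 150)

# Monocular vision only knows the branch is one-third as far away as the bench.
relative = {"bench": 1.5, "branch": 0.5}
absolute = to_absolute_depths(relative, "bench", bench_m)
print(absolute)
```

This is the role a library of common objects could play: each recognized sign or bus stop of known size supplies the scale that a single camera cannot measure on its own.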