Artificial intelligence lets us offload tasks onto machines: they’re beginning to tag our photos, drive our cars, and fly our drones. These A.I. systems occasionally make wrong decisions, as has been speculated in the recent Tesla Autopilot crash or as happens when a voice command is misheard, but new research suggests that hackers with experience in A.I. could force these algorithms to make wrong and potentially harmful decisions.

Researchers took the first step in validating these real-world attacks when they forced an A.I. algorithm to misidentify images in pictures taken with a smartphone, up to 97 percent of the time. The changes to the images, which were printed on paper and photographed with a smartphone in the experiment, are invisible to the human eye, but outside the lab the difference could be between a self-driving car seeing a stop sign and seeing nothing at all.

“You could imagine someone signing a check that looks like it was for $100, and the ATM reads it as $1000,” says Ian Goodfellow, a researcher at OpenAI and co-author of the paper. He also says these attacks could be used for search engine optimization, by tricking a machine learning algorithm into thinking an image was more appealing, or by a financial institution to trick a competitor’s A.I. stock trader into making bad decisions.

Goodfellow isn’t aware of this kind of attack ever being used in the wild, but says it’s something artificial intelligence researchers should definitely start to consider.

Research on deceiving A.I. has existed for years, but previous work couldn’t prove this kind of attack was plausible with photos taken of real items by an average camera. The paper, written by researchers at Elon Musk-backed OpenAI and Google, shows that attacks can still succeed even with changes in lighting, perspective, camera optics, and digital processing.

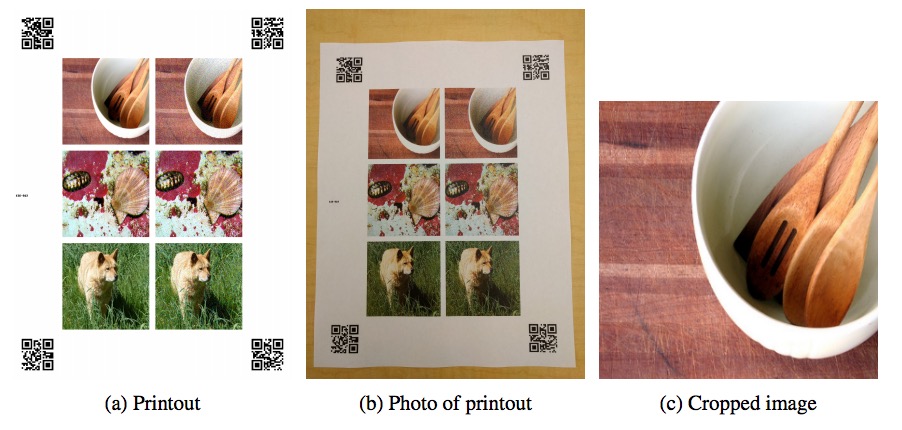

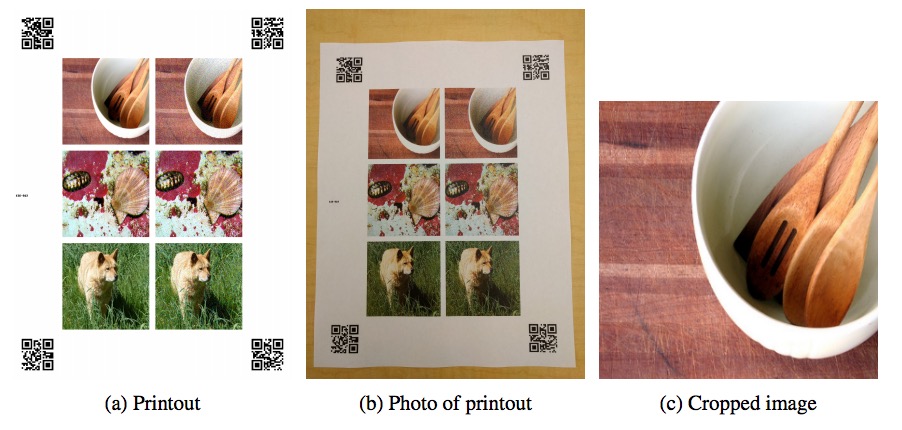

To test the vulnerability, the team printed slightly altered images, already known to deceive A.I. if fed directly to the algorithm, on paper next to unaltered images. Then, using a Nexus 5X smartphone, they took pictures of the paper.
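To give a feel for how a slight alteration can flip a classifier's answer, here is a minimal sketch on a toy two-class linear model. It nudges each pixel by a small, fixed amount in whichever direction favors the wrong class, a sign-of-gradient style of perturbation. The article does not say which method the researchers used, and the weights, the four-pixel "image," and names such as `perturb` and `epsilon` are all invented for illustration.

```python
# Toy illustration only: a two-class linear "classifier" over a 4-pixel image.
# The weights, pixel values, and function names are made up for this example.

def scores(weights, pixels):
    """One score per class: the dot product of that class's weights with the pixels."""
    return [sum(w * p for w, p in zip(row, pixels)) for row in weights]

def predict(weights, pixels):
    """Index of the highest-scoring class."""
    s = scores(weights, pixels)
    return s.index(max(s))

def perturb(weights, pixels, true_class, other_class, epsilon):
    """Shift every pixel by at most epsilon in the direction that favors other_class."""
    adversarial = []
    for i, p in enumerate(pixels):
        # Gradient of (other-class score minus true-class score) for pixel i.
        grad = weights[other_class][i] - weights[true_class][i]
        step = epsilon if grad > 0 else -epsilon if grad < 0 else 0.0
        # Keep the result inside the valid pixel range [0, 1].
        adversarial.append(min(1.0, max(0.0, p + step)))
    return adversarial

WEIGHTS = [
    [1.0, -1.0, 1.0, -1.0],   # class 0
    [-1.0, 1.0, -1.0, 1.0],   # class 1
]
IMAGE = [0.55, 0.45, 0.55, 0.45]

ADVERSARIAL = perturb(WEIGHTS, IMAGE, true_class=0, other_class=1, epsilon=0.1)
print(predict(WEIGHTS, IMAGE), predict(WEIGHTS, ADVERSARIAL))
```

In this toy case no pixel moves by more than 0.1, yet the predicted class flips from 0 to 1. Real image classifiers are far larger and nonlinear, so this only hints at the idea.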

The researchers cropped the printed images, then ran them through a standard image recognition algorithm. The algorithm not only failed to identify what was pictured, but 97 percent of the time, it wasn’t even able to get the correct result within its top 5 guesses. Success rates did vary, however, with how heavily the images were altered: changes that were more drastic, and more perceptible to humans, typically had a higher rate of success.
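The "top 5 guesses" measure mentioned above can be sketched in a few lines. This is a simplified illustration, not the researchers' evaluation code: the labels and confidence scores are invented, and `top_k_labels`, `is_top_k_correct`, and `top_k_error_rate` are hypothetical helper names.

```python
# Simplified sketch of a top-5 check. All labels and scores are invented.

def top_k_labels(scores, k=5):
    """Return the k labels with the highest scores, best first."""
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    return [label for label, _ in ranked[:k]]

def is_top_k_correct(scores, true_label, k=5):
    """True if the correct label is among the classifier's k best guesses."""
    return true_label in top_k_labels(scores, k)

def top_k_error_rate(examples, k=5):
    """Fraction of (scores, true_label) pairs whose true label misses the top k."""
    misses = sum(1 for scores, label in examples
                 if not is_top_k_correct(scores, label, k))
    return misses / len(examples)

# Made-up classifier outputs for an unaltered and an altered photo of a stop sign.
CLEAN = {"stop sign": 0.90, "street sign": 0.05, "traffic light": 0.02,
         "pole": 0.01, "mailbox": 0.01, "kite": 0.005, "bucket": 0.005}
ALTERED = {"kite": 0.40, "bucket": 0.30, "mailbox": 0.15, "pole": 0.08,
           "traffic light": 0.04, "street sign": 0.02, "stop sign": 0.01}

EXAMPLES = [(CLEAN, "stop sign"), (ALTERED, "stop sign")]
print(top_k_error_rate(EXAMPLES))
```

Here the unaltered photo counts as a hit because "stop sign" is among the five best guesses, while the altered one counts as a miss because it falls to seventh place.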

Their aim was merely to trick the A.I. into not seeing what it normally would, although in previous research, altered images like these could be perceived as specific other objects. The researchers didn’t account for the kind of camera used, but expect that adjusting for a camera’s optics would improve their results.

Other research has used the same technique—but with audio—to trick Android phones into visiting websites and changing settings through malicious voice commands.

That group, based out of Georgetown University and UC Berkeley, is able to modify recorded voice commands, like “OK Google,” and distort them to be nearly unintelligible to humans.

“Hidden voice commands may also be broadcast from a loud-speaker at an event or embedded in a trending YouTube video, compounding the reach of a single attack,” researchers write in the Georgetown and Berkeley paper, which will be presented at the USENIX Security Symposium in August.

A public attack like this could trigger smartphones or computers (newly imbued with virtual personal assistants) to pull up malicious websites or post a user’s location to Twitter.

These attacks might be innocuous for some uses of A.I., resulting in a mislabeled photo, but in robotics, avionics, autonomous vehicles, and even cybersecurity, the impact is real.