Even aside from the fear that evil A.I.s will take over the world, the field of artificial intelligence can be daunting to outsiders. Facebook’s director of artificial intelligence, Yann LeCun, uses the analogy that A.I. is a black box with a million knobs; the inner workings are a mystery to most. But now, we have a peek inside.

Masters candidate at Ryerson University Adam Harley has built an interactive visualization that helps explain how a convolutional neural net, a type of artificial intelligence program used for analyzing images, works internally.

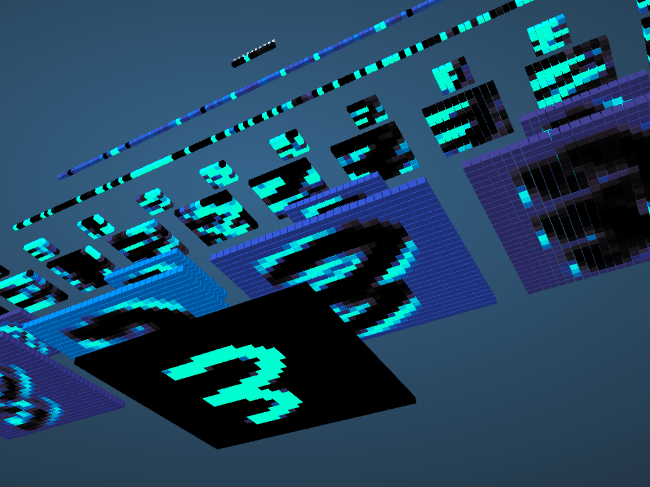

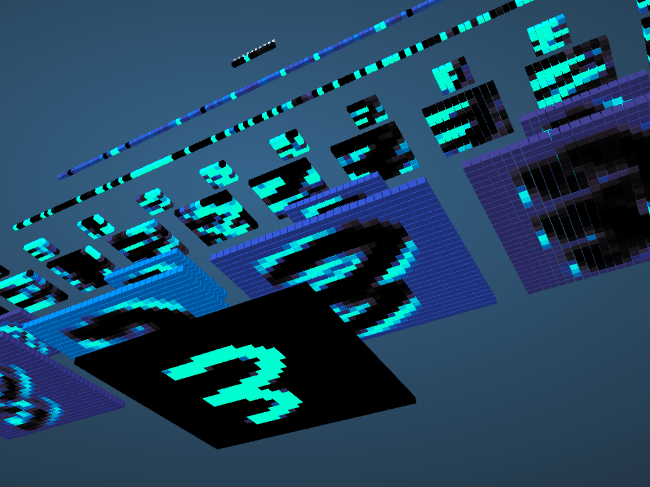

As seen in the interactive visualization, neural networks work in sequential layers. At the bottom is the input, the original idea that the computer is trying to make sense of — in this case a numeral that you drew — and at the top is the output, the computer’s final conclusion. In between are layers of mathematical functions, each layer condensing the most important distinguishing information and passing it to the next.

The green pixels in the input (bottom row) correspond to what you drew, while the black is background the number must be distinguished from. If this were trying to detect a face, the 3 would be the face, and the black would be the background of the photo. In each stage, we’re seeing what the image looks like after each step, not the step itself.

In the neural network, the first few layers are mainly concerned with things like edges and shapes, pulling out the general visual idea, looking for different distinguishing features that can be pulled out to emphasize what makes the shape different than its surroundings.

Each of these layers has been previously tuned to recognize this data, in a process called training. Training usually means running hundreds of thousands, if not millions, of examples through the machine to demonstrate what different types of “3” look like. The same process is used with different source material for all kinds of machine learning and artificial intelligence. Google trains its voice recognition software on randomized voice samples from people who use their service, and Facebook trains its facial recognition algorithm on images of people at different angles.

Training entails running millions of examples through the machine.

The data passed along by the first layer is simplified by the second layer (called a downsampling layer because it reduces the complexity of the data). It’s then analyzed for shapes again by the third layer, which is a convolutional layer like the first. This neural network has two convolutional layers, while more complicated networks can have more than 10.

This set of shapes and edges is then processed and matched against a set of predetermined outputs, to ultimately conclude with a strong probability that the user drew a 3 (or perhaps an 8). You can see this in the color of the the data as it proceeds through layer by layer. That green number that you drew eventually is the green bit of information that designates the (hopefully) correct output.

In Harley’s model, the computer can simply distinguish a number, much like the original convolutional neural nets used to read check deposits in ATMs. Cutting-edge AI is far more complex, able to recognize faces with 97 percent accuracy.

But seeing is believing. Try out artificial intelligence for yourself!

[via Samim Winiger]