At its big CES press conference, Intel set an ambitious goal: make computer sensors more like human senses. I don’t know if the product it announced, the RealSense 3-D camera, is quite as amazing as the human eye, but it’s pretty darn rad.

Using the embedded camera, laptops and tablets can scan depth when taking pictures, through infrared and color sensors. Once the camera’s determined that depth, you can do some pretty crazy stuff, like immediately crop out a particular object by selecting its depth, or even, through a new partnership with 3D Systems, create a shareable scan and 3-D print what your camera sees.
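To make the crop-by-depth idea concrete, here is a minimal sketch in Python. It is not Intel's software or the RealSense API. It assumes the camera has already produced a color image and a matching per-pixel depth map in millimeters, and the name `crop_by_depth` and its parameters are invented purely for illustration.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (red, green, blue)


def crop_by_depth(
    color: List[List[Pixel]],
    depth_mm: List[List[int]],
    near_mm: int,
    far_mm: int,
    fill: Pixel = (0, 0, 0),
) -> List[List[Pixel]]:
    """Keep pixels whose depth lies in [near_mm, far_mm]; replace the rest with `fill`.

    Hypothetical helper: assumes `color` and `depth_mm` have identical
    dimensions and are already aligned pixel for pixel.
    """
    if len(color) != len(depth_mm):
        raise ValueError("color and depth maps must have the same number of rows")
    result = []
    for color_row, depth_row in zip(color, depth_mm):
        if len(color_row) != len(depth_row):
            raise ValueError("color and depth rows must have the same length")
        result.append([
            pixel if near_mm <= depth <= far_mm else fill
            for pixel, depth in zip(color_row, depth_row)
        ])
    return result


# Tiny made-up scene: a red object about 0.6 m away, clutter and a wall farther back.
color = [
    [(200, 50, 50), (200, 50, 50), (10, 10, 10)],
    [(200, 50, 50), (90, 90, 90), (10, 10, 10)],
]
depth_mm = [
    [600, 620, 2500],
    [610, 1400, 2600],
]

# "Select" the object by its depth range; everything else is blanked out.
subject = crop_by_depth(color, depth_mm, near_mm=500, far_mm=800)
```

Here `subject` keeps only the three red pixels and fills the rest with black. A real depth map is noisy around edges, so a hard threshold like this one would likely leave a ragged outline.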

It’s not perfect. The camera can detect a person and place a new background behind them, but that still looked a little rough in the demo: the person wasn’t cleanly cut out of the frame.

It’s part of a push by Intel to create a series of more intuitive sensors under its RealSense banner, and the tech is coming to gadgets from a bunch of manufacturers, like Dell, Lenovo, and HP. The company also showed off some Leap Motion-style gesture recognition tech for tablets that could be used with the camera. One demo showed someone browsing Google Earth, turning her head to tilt the view. (Less cool: new voice-listening tech, also under that RealSense banner.)

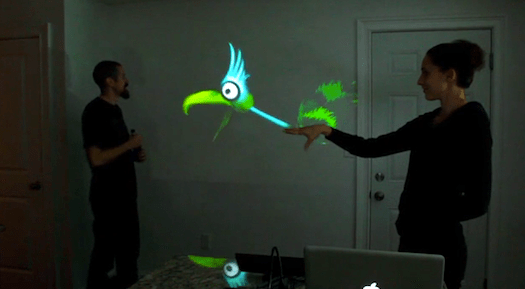

There were also some gaming-related uses for the cam: it tracks gestures finger by finger, letting people move their hands or a flat plane to manipulate on-screen objects. It looks a lot like the Xbox Kinect, but it could fit in a tiny laptop. And it looks like a lot of fun. The same idea could be applied to augmented reality games, too.

This feels like the future, something that disparate devices, like the Kinect and 3-D scanners, have been leading up to. We were waiting for the tech to get small enough to be viable for a laptop. Now, here it is.
