This week I had the honor of crowning the winner of National Instruments’ student design competition, in which students show off the various inventive ways they use NI’s LabView software. For those who don’t know, NI builds the software and systems by which an engineer can test and prototype pretty much anything, from an irrigation system to a rocket. LabView is a software environment in which you can put together your parts ahead of time to test how much voltage goes here, how much interference results over there. NI’s other products, from data-acquisition modules to processors, can then be the backbone of your first build. LabView helps run the CERN Large Hadron Collider, mission control for SpaceX’s Falcon 9 rocket, and the kits that make up the robots duking it out in the FIRST competitions each year.

College students have a special aptitude for bending LabView to their will, and the finalists on display in Austin were very hard to choose between.

Rice University built a sensor-filled baseball that precisely transmits the mechanics of a throw to better teach pitching. UC San Diego created a trumpet that not only detects the exact pitch being played, but can bend that pitch in real time to hit the proper note, a sort of auto-tune for the brass section. Konkuk University, South Korea, created an autonomous flying drone out of remarkably few parts. And the University of Leeds built an astounding haptic feedback system for simulating the feel of tumors under the hands of doctors in training.

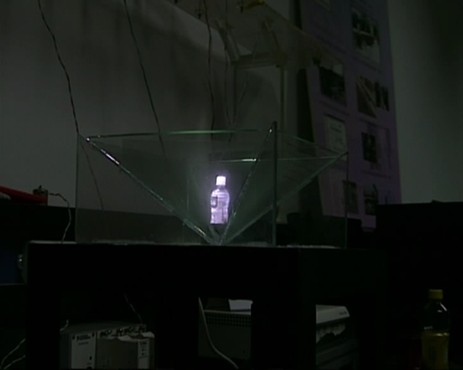

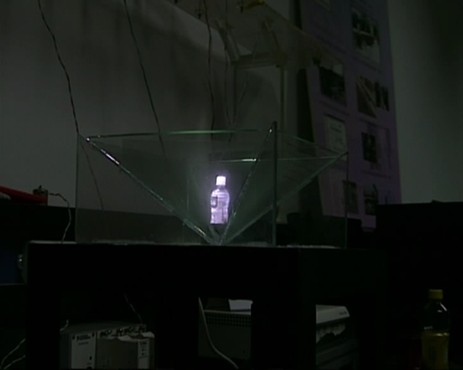

In the end, however, we chose the entry from Tsinghua University, China. Five students there built an entirely new 3-D imaging system. They conquered the classic glasses-or-no-glasses problem by simply stepping around it: instead of a conventional flat screen, they built a four-sided glass enclosure which displays the four sides of a simulated object. The system scans an object on a turntable, acquires the image data, and reproduces it by projecting the image with four projectors onto four panes of glass. Walk around the simulated object on display, and it’s like walking around it in real life. In addition, the system recognizes gestures, allowing you to rotate and zoom in on an object with your hands. You can imagine the implications for medical analysis, enhanced teaching, point-of-sale displays, and telecommunication.

The thing that blew my mind, however, was the sheer discipline of these kids in dealing with costs. They had developed several alternative systems, they told me, including one that used a rotating mirror and a high-speed projector. But they had given themselves the goal of keeping the thing cheap, and this was the cheapest workable solution.

The adoption of prototyping software like LabView seems to be collapsing the time and costs involved in building new devices. “We’re still far away from a product,” Gao Yongfeng, a member of the 3-D team, told me. But the kid’s still in college. By the time he’s headed to grad school, this thing could be on store shelves.