Technology

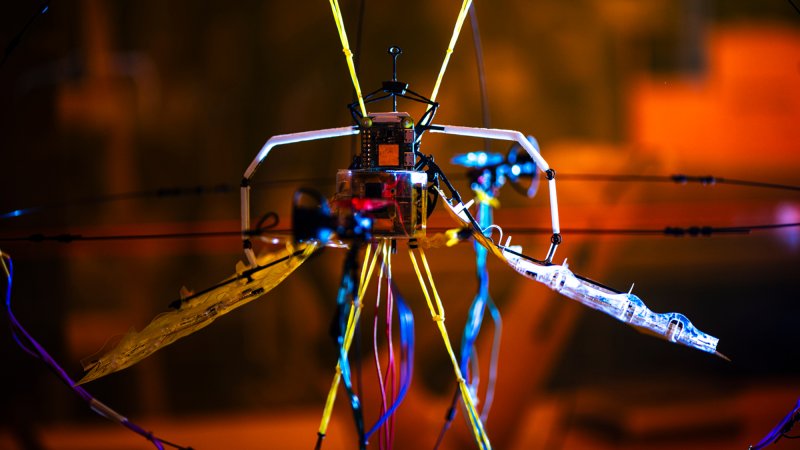

Robots

The latest creations and innovations in automated machines.

Latest in Robots

Watch a robot operate on a pork loin
