Global Warming Could Be Linked to the Number of Exploding Stars in the Sky

As we enter the high season of electoral politics, you’re going to hear things about global warming that may seem a bit dubious–that it doesn’t exist, that it exists and George W. Bush invented it, that cataclysmic climate change has already occurred and we are all doomed, that climate change is the result of the failed stimulus, etc. But an astrophysicist working on one of the cosmos’s greatest mysteries has another theory that might sound equally implausible on its face, but actually makes some sense: that we can predict future global warming based on the number of exploding stars we see in the sky.

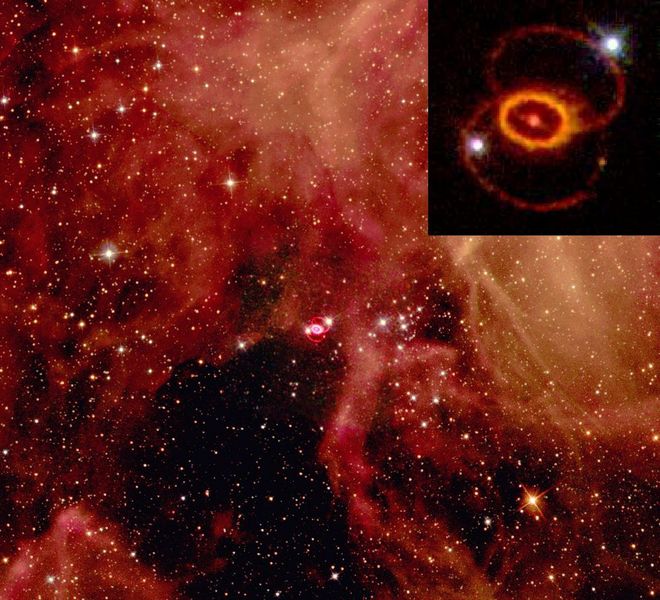

Dr. Charles Wang of the University of Aberdeen has put forth a new theory concerning supernovae that involves a Higgs boson-like mystery particle and is scheduled to be tested at CERN. That’s interesting, but perhaps more intriguing is the idea that his theory could aid in our understanding of where global warming originates and where it is going.

It turns out exploding stars elsewhere in the universe have an effect on the temperature of Earth’s atmosphere. When a star explodes, the massive amount of cosmic rays it creates affects space weather in that corner of the cosmos, making it cloudier. That cloudiness shades Earth from other cosmic waves that are likely impacting the atmosphere here. The cloudier it is out there, the cooler Earth’s atmosphere is. So, the theory goes, fewer star explosions mean a warmer atmosphere. And a warmer climate.

That doesn’t help us much from a policy perspective. We don’t yet fully understand the mechanisms by which individual stars go supernova, and we certainly don’t have the means to control star explosions. But since we do record these explosions–roughly one per year–we could use that data to help predict future changes in climate.