Video: Monkeys Demonstrate Brain-Controlled Arm With a Sense of Touch

The holy grail of prosthetics research is and has been a kind of “Luke Skywalker hand” interface–prosthetics that respond to stimulus from the brain and function just as the original appendage it is replacing. But ideally the prosthetic wouldn’t just respond to stimulus from the brain–it would also provide sensory stimulus to the brain. It would have a sense of touch. And in a paper published today in Nature, we see the groundwork for just such a breed of prostheses.

A study at Duke University Medical Center trained monkeys to operate a virtual-reality hand that gives them tactile feedback through microwire implants inserted into the somatosensory cortex, the part of the brain responsible for processing touch. That kind of two-way communication demonstrates the feasibility of prosthetics that can both carry out motor commands and sense what they touch.

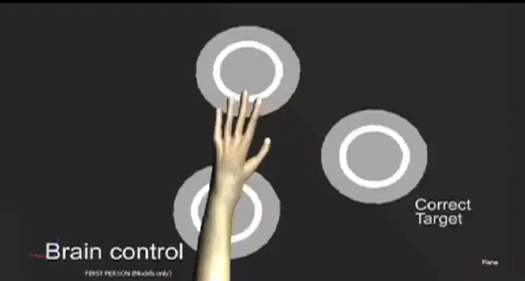

The study involved two experiments. In the first, monkeys used a joystick to control a virtual hand on a computer screen in a game that required them to tell objects on the screen apart. Although the objects looked identical, they had different “textures,” at least according to the implants in the monkeys’ brains that supplied the tactile information. Of three objects, two produced the sensation of the same texture and the third felt different. Motivated by a food reward, the monkeys quickly learned to distinguish the objects based on this virtual sensory input.

Next, the joystick was taken away and the monkeys controlled the avatar hand with their thoughts alone. They were less accurate this time, but their performance improved with practice. The key point is that the monkeys, with their real arms resting at their sides, were able both to control the movement of the avatar arm and to receive and process tactile feedback in the brain to complete a task.

It’s a first step, but a notable one. The next step is to take this off the computer screen and build it into a real prosthetic hand. Might we suggest including artificial fingerprints?