A prominent scientific journal has officially retracted an article featuring an AI-generated image of a rat with large genitals alongside strings of nonsensical gibberish words. The embarrassing reversal highlights the risk of using the increasingly popular AI tools in scientific literature. Though some major journals are already reining in the practice, researchers worry unchecked use of the AI tools could promote inaccurate findings and potentially deal reputational damage to institutions and researchers.

How did an AI-generated rat pass peer review?

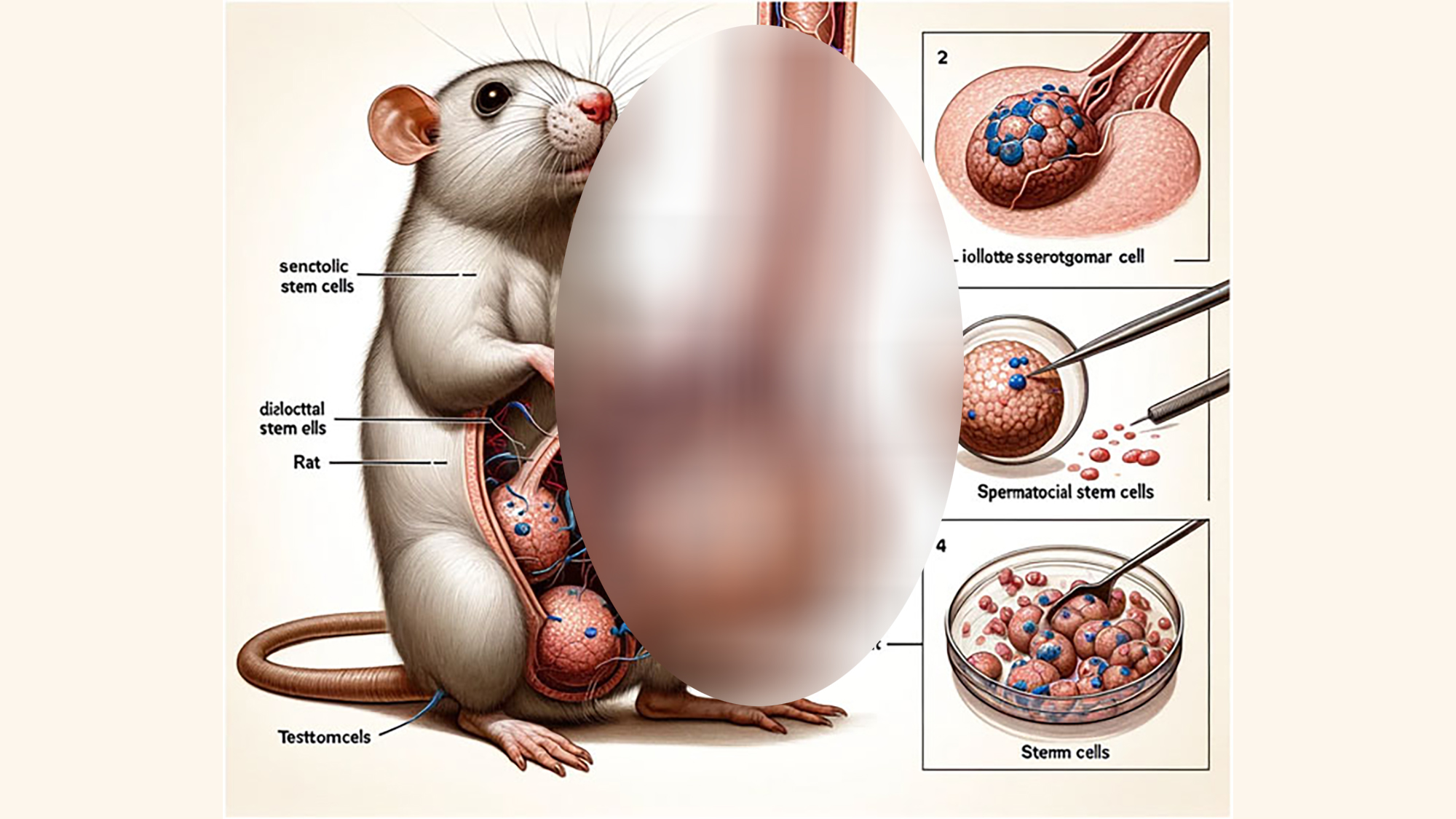

The AI-generated images appeared in a paper published earlier this week in the journal Frontiers in Cell and Developmental Biology. The three researchers, who are from Xi’an Honghui Hospital and Xi’an Jiaotong University, were investigating current research related to sperm stem cells of small mammals. As part of the paper, the researchers included an illustration of a cartoon rat with a phallus towering over its own body. Labels appeared beside the rat with incoherent words like “testtomcels,” “Dissisilcied” and “dck.” The researchers openly acknowledged they used Midjourney’s AI-image generator to produce the image in text accompanying the figure.

The AI-generated rat was followed up by three more figures purportedly depicting complex signaling pathways. Though these initially appeared less visually jarring than the animated rat, they were similarly surrounded by nonsensical AI-generated gibberish. Combined, the odd figures quickly garnered attention amongst academics on social media, with some questioning how the clearly inaccurate figures managed to slip through Frontiers’ review process.

Though many researchers have cautioned against using AI-generated material in academic literature, Frontiers’ policies don’t prohibit authors from using AI tools, so long as they include a proper disclosure. In this case, the authors clearly stated they used Midjourney’s AI image generator to produce the diagrams. Still, the journal’s author guidelines note figures produced using these tools must be checked for accuracy, which clearly doesn’t seem to have happened in this case.

The journal has since issued a full retraction, saying the article “does not meet [Frontiers’] standards of editorial and scientific rigor.” In an accompanying blog post, Frontiers said it had removed the article, and the AI-generated figures within it, from its databases “to protect the integrity of the scientific record.”

The journal claims the paper’s authors failed to respond to a reviewer’s requests calling on them to revise the figures. Now, Frontiers says it is investigating how all of this was able to happen in the first place. One of the US reviewers reportedly told Motherboard they reviewed the article only on its scientific merit. The decision to include the AI-generated figure, they claimed, was ultimately left up to Frontiers.

“We sincerely apologize to the scientific community for this mistake and thank our readers who quickly brought this to our attention,” Frontiers wrote.

Frontiers did not immediately respond to PopSci’s request for comment.

Science integrity expert Elisabeth Bik, who spends much of her time spotting manipulated images in academic journals, described the figure as a “sad example” of how generative AI images can slip through the cracks. Even though the phallic figure in particular was easy to spot, Bik warned its publication could foreshadow more harmful entries in the future.

“These figures are clearly not scientifically correct, but if such botched illustrations can pass peer review so easily, more realistic-looking AI-generated figures have likely already infiltrated the scientific literature,” Bik wrote on her blog Science Integrity Digest. “Generative AI will do serious harm to the quality, trustworthiness, and value of scientific papers.”

Should academic journals allow AI-generated material?

With over five million academic articles published online every year, it’s almost impossible to gauge how frequently researchers are turning to AI-generated images. PopSci was able to spot at least one other obvious example of what appeared to be an AI-generated image depicting two African elephants fighting, which appeared in a press kit for a recently published Nature Metabolism paper. The AI-generated image was not included in the published paper.

But even if AI-generated images aren’t flooding articles at this moment, there’s still an incentive for time-strapped researchers to turn to the increasingly convincing tools to produce published works at a faster clip. Some prominent journals are already taking precautions to prevent the content from being published.

Last year, Nature released a strongly worded statement saying it would not allow any AI-generated images or videos to appear in its journals. The family of journals published by Science prohibits authors from using text, images, or figures generated by AI without first getting an editor’s permission. Nature’s editorial board said its decision, made after months of deliberation, stemmed primarily from difficulties related to verifying data used to generate AI content. The board also expressed concern over image generators’ inability to properly credit artists for their work.

AI firms responsible for these tools are currently fighting off a spate of lawsuits from artists and authors who say these tools are illegally spitting out images trained on copyright-protected materials. In addition to questions of attribution, generative AI tools have a tendency to spew out text and produce images that aren’t factually or conceptually accurate, a phenomenon researchers refer to as “hallucinations.” Elsewhere, researchers warn AI-generated images could be used to create realistic fake images, also known as deepfakes, which they say could be used to give credence to faulty data or inaccurate conclusions. All of these factors present challenges to researchers or journals looking to publish AI-generated material.

“The world is on the brink of an AI revolution,” Nature’s editorial board wrote last year. “This revolution holds great promise, but AI—and particularly generative AI—is also rapidly upending long-established conventions in science, art, publishing and more. These conventions have, in some cases, taken centuries to develop, but the result is a system that protects integrity in science and protects content creators from exploitation. If we’re not careful in our handling of AI, all of these gains are at risk of unraveling.”