New Type of Brain-Computer Interface Lets You Control Robots With Your Eyes

With this headgear, you could make robots go grab you a beer simply by glancing at the refrigerator.

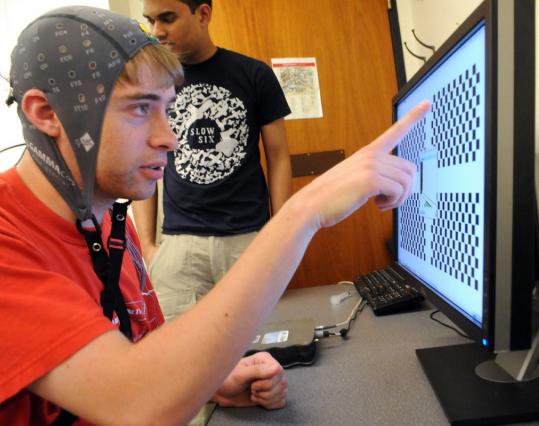

A team of researchers at Northeastern University in Boston is working on a brain-robot interface that lets you command a robot by looking at specific regions on a computer screen. The system detects brain signals from the user’s visual cortex, and commands a robot to move left, right and forward, the Boston Globe reports.

Each quadrant of the computer screen corresponds to a different command, and each flashes at a different frequency. If you stare at one quadrant, your visual cortex produces electrical activity at the matching frequency, which is picked up by an electrode cap worn on your head.
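To make the idea concrete, here is a minimal sketch of how a program might work out which quadrant someone is staring at: measure how much signal power sits at each flicker frequency and pick the strongest. This is not the Northeastern team's code. The flicker rates, the sample rate, and the function names are all assumptions made for illustration, and the "brain signal" is a synthetic sine wave with noise.

```python
import math
import random

# Hypothetical flicker rates in Hz, one per screen quadrant.
# The article does not give the real values.
QUADRANT_FREQS_HZ = [8.0, 10.0, 12.0, 15.0]


def band_power(samples, sample_rate_hz, freq_hz):
    """Power of the signal at one frequency (a single-bin Fourier transform)."""
    re = 0.0
    im = 0.0
    for n, x in enumerate(samples):
        angle = 2.0 * math.pi * freq_hz * n / sample_rate_hz
        re += x * math.cos(angle)
        im -= x * math.sin(angle)
    return (re * re + im * im) / len(samples)


def detect_flicker(samples, sample_rate_hz, candidates=QUADRANT_FREQS_HZ):
    """Return the candidate frequency that carries the most power."""
    return max(candidates, key=lambda f: band_power(samples, sample_rate_hz, f))


def synthetic_signal(freq_hz, sample_rate_hz=256, seconds=2.0, noise=0.5, seed=0):
    """Stand-in for an electrode reading: a sine wave plus Gaussian noise."""
    rng = random.Random(seed)
    count = int(sample_rate_hz * seconds)
    return [
        math.sin(2.0 * math.pi * freq_hz * n / sample_rate_hz) + rng.gauss(0.0, noise)
        for n in range(count)
    ]


if __name__ == "__main__":
    signal = synthetic_signal(12.0)
    print(detect_flicker(signal, 256))
```

Real electrode data is far noisier than this toy signal, so a working system would likely need filtering and longer or repeated measurements before trusting a result.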

A computer translates that frequency into a directional command, then wirelessly transmits the command to a small laptop attached to a robot. You can even track the robot through a Skype video connection, the Globe reports.
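The translation step could be as simple as a lookup table. The sketch below maps a detected frequency to one of the three commands the Globe mentions and packs it into a small message for the robot's laptop. The frequency values, the tolerance, the JSON message format, and the function names are all hypothetical; the article does not say how the real system encodes or transmits its commands.

```python
import json

# Hypothetical pairing of flicker rates (Hz) with the commands named in the article.
COMMAND_BY_FREQ_HZ = {8.0: "left", 10.0: "right", 12.0: "forward"}


def to_command(freq_hz, tolerance_hz=0.25):
    """Return the command for a detected frequency, or None if nothing matches."""
    for target_hz, command in COMMAND_BY_FREQ_HZ.items():
        if abs(freq_hz - target_hz) <= tolerance_hz:
            return command
    return None


def encode_command(command):
    """Pack a command into bytes for sending over a network link (assumed format)."""
    return json.dumps({"command": command}).encode("utf-8")


if __name__ == "__main__":
    command = to_command(10.1)
    if command is not None:
        print(encode_command(command))
```

Returning `None` for an unrecognized frequency means the robot simply stays put when the reading is ambiguous, which seems a safer default than guessing a direction.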

It’s a simpler and smaller system than other mind-controlled robot interfaces we’ve seen. The new brain-computer interface could be used to control household appliances, according to Northeastern University professor Deniz Erdogmus. Starting this school year, students will rig a wheelchair to accept commands from the interface, allowing people with physical impairments to move around simply by looking in the direction they want to go.

Students in Erdogmus’ Cognitive Systems Laboratory work on several brain-sensing devices that enable people to communicate with computers and robots. People with various physical impairments would be able to use the technology to control a mechanical avatar, for instance.