Software Learns To Crack CAPTCHAs

But does CAPTCHA-cracking bring us closer to creating a machine brain?

Think of it as graduating kindergarten. After working for three years to build its machine-learning software, today a company is announcing that the software passes its first test—CAPTCHAs.

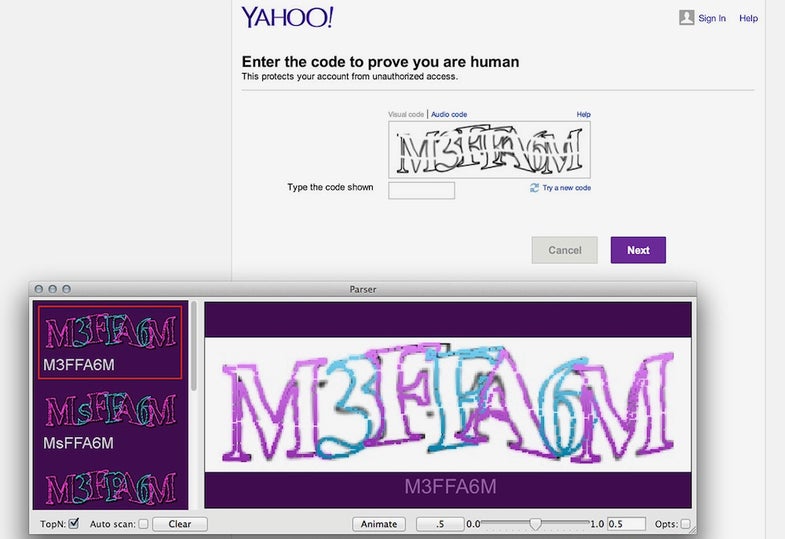

Vicarious, a San Francisco-based company, is working on teaching software to develop a sense of vision. Ultimately, the vision system should be able to recognize letters wherever they appear, identify objects in photographs, and generally do all the stuff any kid with healthy vision can do. Breaking CAPTCHAs, those distorted letter patterns that websites often use to tell human users from spambots, is Vicarious’ first progress report. Vicarious’ founders say their software solves CAPTCHAs, on average, 90 percent of the time. (However, it is stumped by the Google Street View numerals in Google’s latest reCAPTCHA software, unveiled October 25.)

“CAPTCHA is a good test because it is representative of many of the problems you see in general perception,” Dileep George, one of Vicarious’ founders, tells _Popular Science_. “For example, variation: the amount of variation in the letter. The difficulty of recognizing the letters from clutter and overlap. These are all problems that you have to solve in general vision also.”

The software uses machine learning, a technique pioneered in the 1980s in which programmers “teach” their programs concepts such as the shape of the letter A by feeding the program thousands of examples of the letter A. The idea is that it may be too hard to explain to a computer what an A is in a few lines of code, but the computer could nevertheless figure things out for itself with enough training data. George and his colleagues say they’ve advanced the field by building a program that learns by example, but instead of requiring thousands of examples, it needs just 10 per letter. That makes the program a more human-like learner, says Scott Phoenix, George’s co-founder: “Humans don’t get 10,000 examples of a deer or a snake in order to know what those are.” In addition, the software runs on a laptop, which means it doesn’t require extraordinary computing power and could be sold commercially.
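For readers who want to see what “learning by example” looks like in practice, here is a minimal sketch in Python. It is not Vicarious’ method, which the company has not published. It uses a textbook technique called a nearest-centroid classifier, and everything in it is an assumption made for illustration: the 5x5 letter bitmaps, the helper names, and the choice of 10 noisy examples per letter (borrowed from the figure quoted above). Real CAPTCHAs are far harder than this toy.

```python
import random

# Hypothetical 5x5 bitmaps standing in for letters (toy data, not real CAPTCHAs).
TEMPLATES = {
    "A": ["01110", "10001", "11111", "10001", "10001"],
    "L": ["10000", "10000", "10000", "10000", "11111"],
    "T": ["11111", "00100", "00100", "00100", "00100"],
}

def to_vector(rows):
    """Flatten a bitmap (list of '0'/'1' strings) into a list of ints."""
    return [int(ch) for row in rows for ch in row]

def noisy_copy(vector, flips, rng):
    """Return a copy of the vector with `flips` randomly chosen pixels inverted."""
    out = list(vector)
    for i in rng.sample(range(len(out)), flips):
        out[i] = 1 - out[i]
    return out

def train(examples_by_label):
    """'Learn' each letter by averaging its examples pixel by pixel."""
    centroids = {}
    for label, examples in examples_by_label.items():
        n = len(examples)
        centroids[label] = [sum(column) / n for column in zip(*examples)]
    return centroids

def classify(centroids, vector):
    """Pick the letter whose averaged picture is closest to the input."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(centroid, vector))
    return min(centroids, key=lambda label: squared_distance(centroids[label]))

# Build 10 slightly corrupted examples per letter, then train on them.
rng = random.Random(0)
training = {
    label: [noisy_copy(to_vector(rows), 2, rng) for _ in range(10)]
    for label, rows in TEMPLATES.items()
}
model = train(training)

# Ask the trained model to name each clean letter.
for label, rows in TEMPLATES.items():
    print(label, "->", classify(model, to_vector(rows)))
```

Nobody tells the program what an A looks like. It only averages the examples it is shown and compares new images against those averages. That works here because the toy letters are cleanly separated and always the same size and position, which is exactly what CAPTCHA designers try to prevent with distortion, clutter and overlap.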


“I think what they’ve done is pretty impressive,” says Chris Eliasmith, a researcher at the University of Waterloo in Canada who works on a brain model named Spaun and who isn’t a part of Vicarious. “I believe they have made progress in basic machine learning kind of problems, problems that have been sort of staples of the field for a while.”

For engineers outside of the company, however, it’s difficult to say how far along Vicarious truly is in building its artificial intelligence. Eliasmith points out that while learning letters from only 10 examples sounds great, it depends on whether the program requires just 10 examples of any type, or whether it may need more to recognize different fonts. George and Phoenix didn’t answer an email asking for clarification in time for publication.

In addition, in spite of CAPTCHA’s tantalizing full name, Completely Automated Public Turing test to tell Computers and Humans Apart, breaking one is not really an indication that a program is close to human intelligence. After all, you can’t have a conversation with a CAPTCHA-breaker.

“The fact that it can break a CAPTCHA does not imply the thing thinks like a human,” Luis von Ahn, CAPTCHA’s original creator, says. “I don’t mean to say that they’re not on the right track. They may well be on the right track. I just don’t know.”

Other groups have come up with programs to break CAPTCHAs, so this feat isn’t new. “I think this is a little on the higher end of accuracy in the breakers,” von Ahn says. An advance like this isn’t the end of CAPTCHA, although in time, CAPTCHA-breaking is likely to evolve to the point where companies will need to rely on another spambot gatekeeper. The next step is asking people to identify objects in photographs, von Ahn says.

Vicarious claims its program works like a human brain, but both von Ahn and Eliasmith say it is difficult to tell whether the software is truly more brain-like than other machine learning software. Part of the problem may be that Vicarious does not fully publish the math behind its program, which is understandable for a commercial company. Instead, the outside researchers Popular Science talked with had to rely on a short video the company made.

There was one thing von Ahn says would impress him in a piece of artificial intelligence vision software: “If they were able to present it an arbitrary picture and tell me what is in the picture, that would be amazing. That still wouldn’t be able to say if it thinks like a human, but at least I have never seen a computer do that before.”

Vicarious is working on getting its software to recognize animals in photographs, but the company isn’t ready to announce its accuracy rate yet, Phoenix says. There’s no guarantee Vicarious will do it first or best, as many groups are working on this problem, Eliasmith says. We’ll have to wait for Vicarious to move a little further ahead in its development before it can prove its mettle.