What Might A Killerbot Arms Race Look Like?

The coming swarm

When they appear on the horizon, the robots coming to kill you won’t necessarily look like warplanes. That’s limited, human-centric thinking, says Stuart Russell, a computer scientist at the University of California at Berkeley, and it only applies to today’s unmanned weapons. Predator and Reaper drones were built with remote pilots and traditional flight mechanics in mind, and armed with the typical weapons of air war: powerful missiles, as useful for destroying buildings and vehicles as personnel. Tomorrow’s nimbler, self-piloted armed bots won’t simply be updated tools for old-fashioned air strikes. They’ll be vectors for slaughter.

More likely, the lethal autonomous weapons systems (LAWS) to come will show up in a cloud of thousands or more. Each robot will be small, cheap, and lightly armed, packing the bare minimum to end a single life at a time. Predicting the exact nature of these weapons is as macabre as it is speculative, but to illustrate how we should adjust our thinking on the subject of deploying autonomous robots on the battlefield, Russell offers two hypotheticals. “It would perhaps be able to fire miniature projectiles, just powerful enough to shoot someone through their eyeball,” he says. “It would be pretty easy to do that from 30 or 40 meters away. Or it could put a shaped charge directly on a person’s cranium. One gram of explosives is enough to blow a hole in sheet metal. That would probably be more than enough.”

Russell’s prediction is one of focused, efficient lethality. But to anthropomorphize this assault cloud, imagining it as a swarm of tiny, flying snipers or grenadiers, is another mistake. Russell estimates that, with enough iteration and innovation, the systems developed in a LAWS arms race could eventually be as cheap as $10 apiece. They would be closer to a plague of guided munitions than an automated fighting force, leaving a locust-like trail of inert, disposable components alongside their victims. Unleashing such a weapon on a city, with orders to kill anyone holding a weapon-like object, or simply every male within a given age group, would be too cheap and too effective to resist. “No matter where this sort of arms race ends up, it becomes clear that humans don’t stand a chance.”

Russell’s worry is not that the future of warfare will be merely unsettling, or unfair. In a commentary published today in Nature, Russell reiterates many of the concerns he presented this past April in Geneva, at a United Nations meeting on the topic of banning LAWS. Among those concerns are the prospects of autonomous weapons being misused—used to commit war crimes, for example—as well as overused. The clear appeal of sending robots into traditionally casualty-heavy situations, such as urban combat, combined with their potential lethality, could transform armed conflicts into a series of one-sided massacres. “The stakes are high,” he writes. “LAWS have been described as the third revolution in warfare, after gunpowder and nuclear arms.”


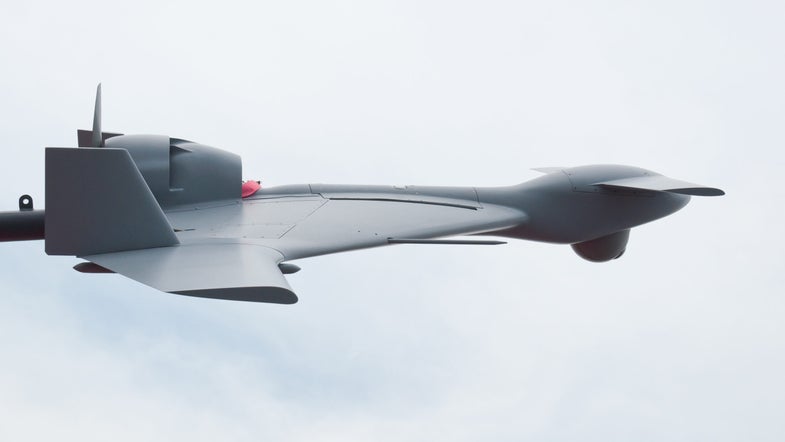

The debate over autonomous weapons has typically centered on who, or what, is making the decision to kill. Human Rights Watch and other opponents of the technology have often warned that the benefits of allowing robots to choose their own targets—such as when distance or terrain interrupts communication links between operators and drones—make the fielding of such systems inevitable. Military personnel, meanwhile, insist that there’s no will for these machines, and that commanders will always want a human “in the loop,” giving the final order to attack a given target. But the defense industry is nonetheless moving towards this capability. The marketing language used by defense contractor BAE Systems to describe its in-development Taranis stealth drone includes the phrase, “full autonomy.”

And in his Nature article, Russell cites two DARPA projects whose aim is to significantly increase drone autonomy. The Fast Lightweight Autonomy (FLA) project is developing methods for small unmanned systems to navigate themselves through cluttered environments, and the Collaborative Operations in Denied Environments (CODE) program is explicit about its interest in autonomous combat. “Just as wolves hunt in coordinated packs with minimal communication, multiple CODE-enabled unmanned aircraft would collaborate to find, track, identify and engage targets, all under the command of a single human mission supervisor,” said DARPA program manager Jean-Charles Lede in an agency press release earlier this year.

Despite the mention of a human supervisor, engaging targets is part of CODE, a program intended to deal with situations where unmanned vehicles may be forced to act autonomously. “They tend to put in a couple of fig leaf phrases, here and there, related to the man in the loop,” says Russell, “but I think they are interested. And I think without a ban, there will be an arms race, and they will be used.” In fact, he believes that LAWS have already been deployed. Israel’s Harop drone is designed to loiter over an area, searching for enemy radar sites. If it detects a given source of radiation, the system automatically crashes into it, detonating its warhead. “That seems to me to cross the line,” says Russell. “What it’s doing is possibly indiscriminate. And you could imagine Palestinians putting a radar system in a crowded school, to trick the Israelis into committing a war crime.”

But even if LAWS were to function perfectly, and could somehow cut through the fog of war to avoid large-scale friendly fire incidents, or full-blown, automated atrocities, they may share an important similarity with nukes. There’s no viable defense against the overwhelming destructive force of a nuclear attack. Russell argues that, once autonomous weapons have matured, they will be just as unstoppable, resurrecting the tenuous doomsday geopolitics of the Cold War’s nuclear standoff. If nations don’t start the complex process of defining and banning these systems, and preventing an escalation before it begins, he fears it’s only a matter of time before the clouds roll towards one city or another. “I imagine that people will come up with countermeasures,” says Russell. “Some kind of electromagnetic weapon, or they might send up their own cloud of counter-drones.” But if his analysis is right, and a LAWS arms race peaks at swarms that number in the millions targeting large populations, would anything be effective against what amounts to an autonomous weapon of mass destruction?

Russell laughs, a little too knowingly. “I feel like we don’t want to find out.”