Neural Networks Designed to ‘See’ Are Quite Good at ‘Hearing’ As Well

Neural networks — collections of artificial neurons or nodes set up to behave like the neurons in the brain — can be trained to carry out a variety of tasks, often having something to do with pattern or sequence recognition. As such, they have shown great promise in image recognition systems. Now, research coming out of the University of Hong Kong has shown that neural networks can hear as well as see. A neural network there has learned the features of sound, classifying songs into specific genres with 87 percent accuracy.

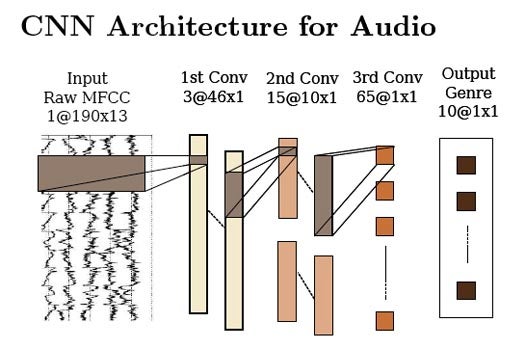

The network is composed of three “thinking” layers stacked one atop the other, with the first taking in the raw data and the third outputting a genre. Drawing from a database that spanned 10 musical genres, the machine went to work. Within each layer, each neuron hears only a snippet of the song about 23 milliseconds long. But each node overlaps the one next to it by half, so taken together the nodes get to hear about two seconds’ worth of audio.
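To put rough numbers on that, the overlapping snippets can be laid out with a few lines of Python. This is only a back-of-the-envelope sketch, not the researchers' code: the 23-millisecond snippet length, the half overlap, and the two-second total come from the description above, while the function names `frame_starts` and `covered_span_ms` are made up for illustration.

```python
def frame_starts(total_ms, frame_ms=23.0, overlap=0.5):
    """Start times (in ms) of overlapping snippets that fit inside total_ms.

    frame_ms and overlap default to the figures quoted in the article;
    everything else here is an illustrative assumption.
    """
    hop_ms = frame_ms * (1.0 - overlap)  # distance between snippet starts
    if total_ms < frame_ms:
        return []
    count = int((total_ms - frame_ms) // hop_ms) + 1
    return [i * hop_ms for i in range(count)]


def covered_span_ms(n_frames, frame_ms=23.0, overlap=0.5):
    """Total stretch of audio (in ms) covered by n_frames overlapping snippets."""
    if n_frames <= 0:
        return 0.0
    hop_ms = frame_ms * (1.0 - overlap)
    # The first snippet contributes its full length; each later one adds one hop.
    return frame_ms + (n_frames - 1) * hop_ms


if __name__ == "__main__":
    starts = frame_starts(2000.0)
    print(len(starts), "snippets cover", covered_span_ms(len(starts)), "ms")
```

Under these assumptions, each new snippet starts 11.5 milliseconds after the previous one, so it takes on the order of 170 snippets to span two seconds of a song.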

The algorithms employed by the network needed only that amount of time to process and identify the genres of songs from the database. However, when turned loose on songs not included in the library it learned on, it didn’t perform well at all. That tells us a few things.

For one, to work on music in general the network needs to be trained on a more representative library, as there are more than 10 genres in the entire universe of music. More importantly, as Technology Review points out, this neural network shows that a device designed for one function can be rewired to do something different: this particular network was inspired by the visual cortex of a cat, yet here it has been put to work hearing.

Similar networks based on auditory cortexes have been rewired for vision, so it would appear these kinds of neural networks are quite flexible in their functions. As such, it seems they could potentially be applied to all sorts of perceptual tasks in artificial intelligence systems, the possibilities of which have only begun to be explored.