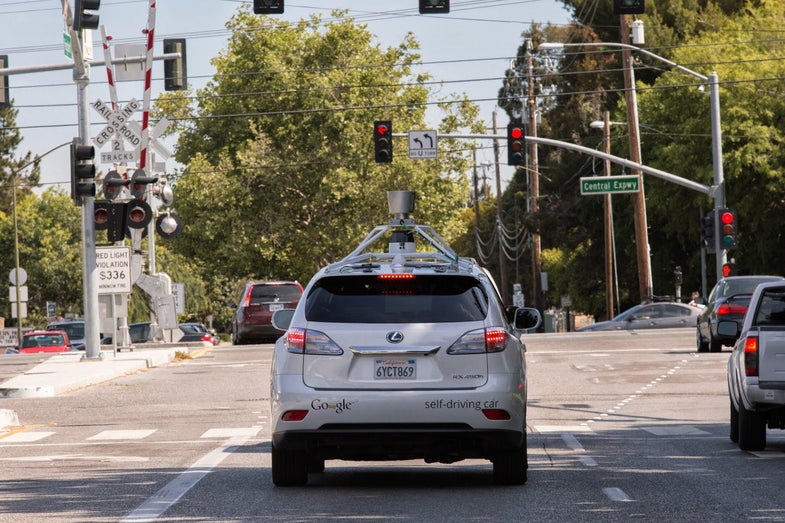

People Keep Crashing Into Google’s Self-Driving Cars

Robots, however, follow the rules of the road

You want to know why we can’t have nice things? People, that’s why. Self-driving cars are just trundling along, minding their own business, when people decide to do something crazy. That’s what Chris Urmson, the director of Google’s self-driving car program, writes in a recent post at Backchannel.

In response to an Associated Press article disclosing that self-driving cars have been involved in four accidents in the state of California, Urmson revealed that over the 1.7 million miles logged by Google's self-driving cars, they had been involved in a total of 11 accidents. All were minor, he hastened to add, with only light damage, and in none of them was Google's robot car at fault.

Those accidents aren't a total loss, either. The data gleaned from them helps Google refine the autonomous car's algorithms and build driving patterns that let it anticipate when an accident might occur. As one example, Urmson says the car hesitates briefly when a light turns green, because that's a moment when human drivers are likely to barrel through the intersection anyway.

Of course, there are still questions. Urmson says the 1.7-million-mile figure includes both autonomous and manual driving, with autonomous driving accounting for about a million of those miles. It isn't stated in which of the accidents people were behind the wheel or, more to the point, whether human drivers ever prevented accidents by taking control from the autonomous car.

Either way, Urmson points out that accidents are impossible to avoid completely, whether you’re a human or a robot. But robots don’t tire, have much better awareness than people, and tend to be overcautious rather than reckless. So maybe the old adage is true: the perfect system is one with no users. Only when people have been eliminated can robots finally drive in safety.