MIT’s Wearable Sensor Pack Turns First Responders Into Digital Mapmakers

The first human moving through a dangerous area can generate annotated digital maps in real time, imparting critical information to the next wave of responders.

Robots have seemingly unlimited potential when it comes to search and rescue operations: they can enter hazardous environments, quickly map dangerous areas for first responders, and help establish communication links and a game plan for larger recovery and triage efforts. But in these scenarios, humans aren’t going anywhere. We still need breathing, thinking bodies on the ground. So a team at MIT has built a wearable sensor pack that can “roboticize” human first responders, allowing the first person into a dangerous environment to digitally map it in real time, just like a robot.

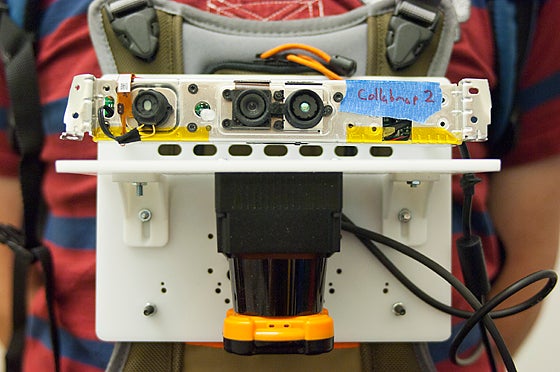

The prototype platform consists of a variety of sensors, including accelerometers, gyroscopes, a camera, and a LiDAR (light detection and ranging) rangefinder, affixed to a sheet of plastic roughly the size of a tablet computer, which is in turn strapped to the user’s chest. These sensors wirelessly beam data to a laptop, allowing others to remotely view the user’s progress through an environment. The same data lets the platform build a digital map of the area as the user moves through it, giving the responders who follow far more situational awareness than they would otherwise have.

Adapting this kind of sensor platform for human use was trickier than it may seem (robots, after all, have been doing this kind of mapping for some time now). Rolling robots tend to keep their instruments fairly level, whereas a human jostles them as he or she moves, bends, stoops, climbs, shimmies, or otherwise negotiates obstacles. That is particularly challenging for the LiDAR sensor, which uses a sweeping beam of laser light (and its reflections off surrounding objects) to build a 3-D digital map of the environment.

To overcome this, the MIT team developed a means of meshing the data from the various inertial sensors to compensate for human movement and correct errors in the LiDAR scans. They even used a barometer in one experiment and found it quite effective at distinguishing between different floors in a building.
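For readers curious what this kind of correction might look like, here is a minimal Python sketch. It is not the MIT team’s method, which the article does not detail. It assumes a planar LiDAR whose tilt (pitch and roll) is already known from the inertial sensors, and it uses the standard-atmosphere barometric formula to turn a pressure change into a floor count. The function names, the axis and sign conventions, and the 3-meter floor height are all hypothetical choices made for illustration.

```python
import math


def level_lidar_point(range_m, bearing_rad, pitch_rad, roll_rad):
    """Rotate one planar LiDAR return from the tilted sensor frame into a level frame.

    Assumed convention (illustrative only): x forward, y left, z up;
    roll is a rotation about x, applied first, then pitch about y.
    Returns (x, y, z) in meters.
    """
    # Point in the sensor's own scan plane (z is 0 for a planar scanner).
    x = range_m * math.cos(bearing_rad)
    y = range_m * math.sin(bearing_rad)

    # Apply roll (rotation about the x axis).
    y_rolled = y * math.cos(roll_rad)
    z_rolled = y * math.sin(roll_rad)

    # Apply pitch (rotation about the y axis).
    x_level = x * math.cos(pitch_rad) + z_rolled * math.sin(pitch_rad)
    z_level = -x * math.sin(pitch_rad) + z_rolled * math.cos(pitch_rad)
    return x_level, y_rolled, z_level


def pressure_to_height_m(pressure_hpa, reference_hpa):
    """Height above the reference pressure level, standard-atmosphere approximation."""
    return 44330.0 * (1.0 - (pressure_hpa / reference_hpa) ** (1.0 / 5.255))


def estimate_floor(pressure_hpa, entry_pressure_hpa, floor_height_m=3.0):
    """Floors above (+) or below (-) the entry level.

    floor_height_m is an assumed typical storey height, not a measured value.
    """
    rise_m = pressure_to_height_m(pressure_hpa, entry_pressure_hpa)
    return round(rise_m / floor_height_m)
```

Under these assumptions, a 10-meter return straight ahead from a sensor pitched 60 degrees lands only 5 meters ahead once leveled, which shows how an uncorrected scan could misplace walls. A pressure drop of about 0.7 hPa from the entry reading corresponds to roughly 6 meters of climb, or two floors at the assumed storey height.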

The result is a platform that creates detailed digital maps on the fly, complete with annotations from the user (using a handheld device, the mapmaker can push a button to designate certain points on the map as areas of interest). The researchers plan to add voice annotations to the system as well, so the user can record spoken notes about particular areas or leave cautionary audio messages at points around the map.