Internet Giants Could Slash Energy Costs 40 Percent With Smart Rerouting Algorithm

A routing algorithm can channel Internet data to locations where electricity prices are cheapest

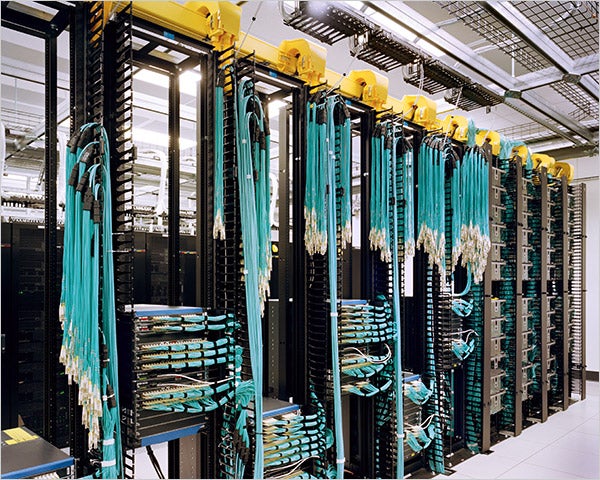

Moving computing from the desktop to the 24/7 data centers of the “cloud” may be the way forward (just ask Google), but it will come with a hefty energy price. Teams at MIT and Carnegie Mellon University, however, are developing a smart algorithm that could reroute Internet traffic to wherever electricity is cheapest at any given moment, potentially saving millions of dollars in energy costs.

The teams from MIT and Carnegie Mellon worked together with Internet company Akamai to see how the algorithm could take advantage of daily and even hourly fluctuations in electricity prices across the nation. Technology Review reports that the algorithm weighs the expense of rerouting Internet data over greater distances against the cost savings from cheaper energy prices, and finds the happy medium for maximum savings.
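The trade-off described above can be sketched in a few lines of Python. This is only an illustration, not the researchers' actual algorithm: the `DataCenter` class, the `choose_data_center` function, the linear per-kilometer rerouting cost, and every price and distance are hypothetical values chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class DataCenter:
    name: str
    price_per_mwh: float  # hypothetical electricity price, dollars per MWh
    distance_km: float    # hypothetical distance from the user


def choose_data_center(centers, energy_mwh_per_request, reroute_cost_per_km):
    """Pick the site with the lowest combined energy and rerouting cost."""
    def total_cost(center):
        energy_cost = center.price_per_mwh * energy_mwh_per_request
        # Assumption: rerouting cost grows linearly with distance.
        reroute_cost = center.distance_km * reroute_cost_per_km
        return energy_cost + reroute_cost

    return min(centers, key=total_cost)


# Made-up sites: one close but expensive, one distant but cheap.
centers = [
    DataCenter("nearby", price_per_mwh=80.0, distance_km=50.0),
    DataCenter("faraway", price_per_mwh=40.0, distance_km=2000.0),
]

# Cheap rerouting favors the distant, low-price site.
print(choose_data_center(centers, 1e-6, 1e-9).name)
# Expensive rerouting keeps the traffic close to home.
print(choose_data_center(centers, 1e-6, 1e-7).name)
```

In this toy model the choice flips as soon as the distance penalty outweighs the price gap, which is the "happy medium" the algorithm is said to look for.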

To test the routing scheme, the researchers looked at 39 months' worth of electricity prices for 29 major U.S. cities and tracked 24 days of activity from nine Akamai Internet servers, which host images and other bandwidth-intensive content for the Web’s biggest sites. In a best-case scenario, where a server's energy usage was directly proportional to its computing load, a company could theoretically cut energy costs by 40 percent.
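Why the best case depends on energy use tracking computing load can be shown with a simple, commonly used power model in which a server draws a fixed idle share of its peak power plus a part that scales with load. The sketch below is an assumption-laden illustration, not the study's model: the function names, the two-site setup, and the prices are invented, and the resulting percentages are not the study's figures.

```python
def site_cost(load_share, price, idle_fraction):
    """Hourly cost of one site, in arbitrary units.

    load_share: fraction of peak load the site carries (0 to 1).
    idle_fraction: share of peak power drawn at zero load
                   (0 means energy use is fully proportional to load).
    """
    power = idle_fraction + (1.0 - idle_fraction) * load_share
    return power * price


def savings_from_shift(idle_fraction, cheap_price=40.0, expensive_price=80.0):
    """Fractional saving from moving all load to the cheaper of two sites."""
    # Baseline: both sites run at half load.
    baseline = (site_cost(0.5, cheap_price, idle_fraction)
                + site_cost(0.5, expensive_price, idle_fraction))
    # Shifted: the cheap site takes everything, the expensive one sits idle.
    shifted = (site_cost(1.0, cheap_price, idle_fraction)
               + site_cost(0.0, expensive_price, idle_fraction))
    return 1.0 - shifted / baseline


# Hypothetical idle shares: fully proportional, mostly idle draw, all idle draw.
for idle in (0.0, 0.6, 1.0):
    print(idle, round(savings_from_shift(idle), 3))
```

With these made-up prices, the saving is largest when idle draw is zero and disappears when servers use the same power regardless of load, since an idle server at the expensive site still runs up a bill.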

This could translate into major savings totaling millions of dollars for companies ranging from Google to Amazon, and could also ease some of the local loads on the nation’s electricity grid from power-hungry server farms and data centers, which require constant cooling to prevent servers from overheating.

Several companies told Technology Review that more hardware and control improvements are required before such a scheme could efficiently juggle data rerouting, and the approach only promises lower energy bills, with no guarantee that it reduces actual energy consumption. But if researchers can move forward on this with industry, it sounds a lot better than just relying on altruistic homeowners or greening initiatives among “World of Warcraft” players.

[via Technology Review]