Glasses Packed With Smartphone Tech Could Help Visually Impaired People ‘See’ Again

Glasses have been passively correcting human vision for centuries, using tricks of light to compensate for various visual impairments. But there are some conditions–like age-related macular degeneration–that simple lenses can’t correct. So Oxford University researchers are getting proactive with a pair of frames packed with technologies usually found in gaming consoles and smartphones to give greater independence and self-sufficiency to those suffering from more serious optical ailments.

Our smartphones contain all kinds of cool tech that is cheap, easy to come by, and usually wasted on snapping and sharing pics of our cats doing cute things. Likewise, peripherals like our gaming controllers and the Kinect pack all kinds of cheap and powerful sensor tech.

The Oxford researchers thought they could get more productive mileage out of implements like tiny cameras, position detectors, depth sensors, and facial recognition and tracking software by packing them into a pair of specs that can help visually impaired people function again.

Many of these vision problems are brought on by age, when degradation of optical tissues renders parts of the eye unresponsive to light. This means that people can see shapes and objects, but might have trouble distinguishing between two different people or objects, or might have trouble with depth perception (or, generally, a combination of these problems).

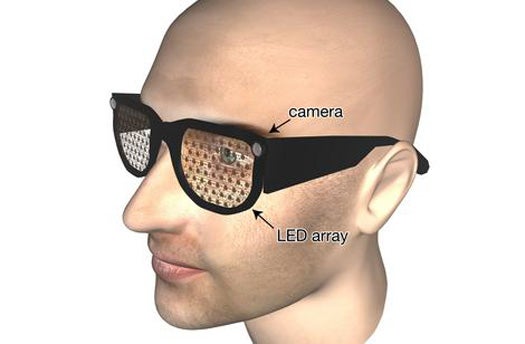

The assistive glasses have video cameras mounted at the corners and tiny LED arrays embedded in the clear lenses. These LEDs can feed extra information to the wearer through visual cues of light and color. Running on a smartphone-like computer carried in the wearer’s pocket, facial recognition software could identify a person walking into the room and light that person with a certain color, revealing that person’s identity to the wearer.

Likewise, certain objects–the TV remote, a coffee mug, a newspaper–could be identified with certain colors in the LED display, turning the blurs associated with deteriorating vision into identifiable objects. The Oxford crew even thinks it could use optical character recognition to read text aloud to wearers via headphones attached to the glasses, allowing them to peruse the morning headlines.

There’s a certain degree of learning involved. People would have to get used to the extra layer of information coming to them, and each person’s glasses would have to be tailored to his or her particular vision needs. But the researchers think they can produce the glasses for the same cost as many high-end smartphones, which is far less expensive than training a helper dog or maintaining around-the-clock care. More importantly, the specs would allow visually impaired people to maintain a degree of independence otherwise not possible.