How Facebook’s New Machine Brain Will Learn All About You From Your Photos

Facebook poaches an NYU machine learning star to start a new AI lab that may very well end up knowing more about your social life than you do.

Facebook users upload 350 million photos to the social network every day, far more than human beings could ever look at, much less analyze. That’s one big reason the company just hired New York University (NYU) machine learning expert Yann LeCun, an eminent practitioner of an artificial intelligence (AI) technique known as “deep learning.” As director of Facebook’s new AI laboratory, LeCun will stay on at NYU part time while working from a new Facebook facility on Astor Place in New York City.

“Yann LeCun’s move will be an exciting step both for machine learning and for Facebook, which has a lot of unique social data,” says Andrew Ng, who directs the Stanford Artificial Intelligence Laboratory and who led a deep-learning project to analyze YouTube video for Google. “Machine learning is already used in hundreds of places throughout Facebook, ranging from photo tagging to ranking articles to your newsfeed. Better machine learning will be able to help improve all of these features, as well as help Facebook create new applications that none of us have dreamed of yet.” What might those futuristic advances be? Facebook did not reply to repeated requests for comment.

Aaron Hertzmann, a research scientist at Adobe whose specialties include computer vision and machine learning, says that Facebook might want to use machine learning to see what content makes users stick around the longest. And he thinks cutting-edge deep learning algorithms could also be useful in gleaning data from Facebook’s massive trove of photos, which numbers roughly 250 billion.

“If you post a picture of yourself skiing, Facebook doesn’t know what’s going on unless you tag it,” Hertzmann says. “The dream of AI is to build full knowledge of the world and know everything that is going on.”

To try to draw intelligent conclusions from the terabytes of data that users freely give to Facebook every day, LeCun will apply his 25 years of experience refining the artificial intelligence technique known as “deep learning,” which loosely simulates the step-by-step, hierarchical learning process of the brain. Applied to the problem of identifying objects in a photo, LeCun’s deep learning approach emulates the visual cortex, the part of the brain to which our retina sends visual data for analysis.

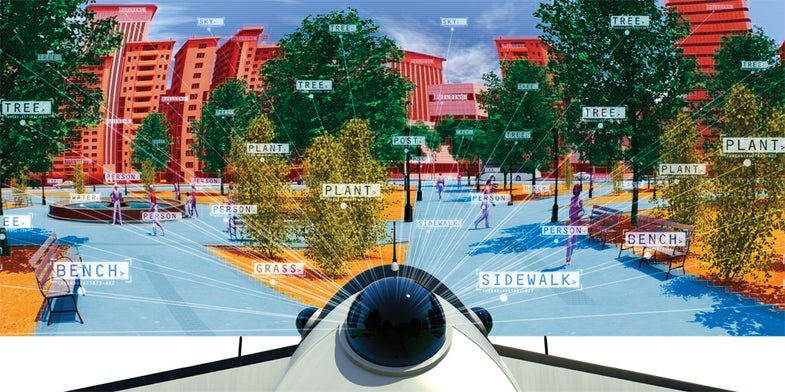

By applying a filter of just a few pixels over a photo, LeCun’s first layer of software processing looks for simple visual elements, like a vertical edge. A second layer of processing deploys a filter that is a few pixels larger, seeking to assemble those edges into parts of an object. A third layer then builds those parts into objects, tested by hundreds of filters for objects like “person” and “truck,” until the final layer has created a rich visual scene in which trees, sky and buildings are clearly delineated. Through advanced training techniques, some “supervised” by humans and others “unsupervised,” the filters, or “cookie cutters,” dynamically improve at correctly identifying objects over time.
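To make the first-layer idea concrete, here is a minimal sketch in Python with NumPy. It is an illustration only, not LeCun’s or Facebook’s code: the toy 6×6 image, the hand-written 3×3 `vertical_edge` filter, and the helper name `slide_filter` are all assumptions made for this example. In a real deep learning system the filter values are learned during training rather than written by hand.

```python
import numpy as np

def slide_filter(image, kernel):
    """Slide a small filter over an image (no padding) and return the response map.

    Strictly speaking this is cross-correlation (the kernel is not flipped),
    which is what most deep learning libraries compute under the name "convolution".
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            # Multiply the filter against the patch under it and sum the result.
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# Hypothetical toy image: dark left half, bright right half (one vertical edge).
image = np.zeros((6, 6))
image[:, 3:] = 1.0

# Hand-written filter that responds to dark-to-bright vertical edges.
vertical_edge = np.array([[-1.0, 0.0, 1.0],
                          [-1.0, 0.0, 1.0],
                          [-1.0, 0.0, 1.0]])

response = slide_filter(image, vertical_edge)

# Keep only positive responses, as a simple stand-in for a layer's nonlinearity.
activated = np.maximum(response, 0.0)
print(activated)
```

The response map is strongest in the columns where the filter straddles the boundary between dark and bright and zero elsewhere. A second layer would slide its own filters over maps like this one, which is how edges get assembled into parts and parts into objects.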

Quickly performing these many layers of repetitive filtering makes massive computational demands. For example, LeCun is the vision expert on an ongoing $7.5 million project funded by the Office of Naval Research to create a small, self-flying drone capable of traveling through an unfamiliar forest at 35 MPH. Unofficially known as “Endor.tech,” and profiled in Popular Science in 2012, the robot will run on customized hardware built around a field-programmable gate array (FPGA), capable of roughly 1 trillion operations per second.

“I’ll take as many [operations per second] as I can get,” LeCun said at the time.

That robot will analyze 30 frames per second of video images in order to make real-time decisions about how to fly itself through a forest at 35 MPH. It’s not hard to imagine similar algorithms used to “read” the videos that you upload to Facebook, by examining who and what is present in the scene. Instead of targeting ads to users based on keywords written in Facebook posts, the algorithms would analyze a video of, say, you at the beach with some friends. The algorithm might then learn what beer you’re drinking lately, what brand of sunscreen you use, who you’re hanging out with, and guess whether you might be on vacation.
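The drone figures quoted above imply a rough per-frame budget, which the back-of-the-envelope Python sketch below works out. The three inputs (1 trillion operations per second, 30 frames per second, 35 MPH) come from the article; the assumption that the entire peak throughput is available for vision is a simplification made here, so treat the result as an upper bound rather than a measured figure.

```python
# Figures quoted in the article.
OPS_PER_SECOND = 1e12      # roughly 1 trillion operations per second
FRAMES_PER_SECOND = 30     # video frames analyzed per second
SPEED_MPH = 35             # flight speed through the forest

METERS_PER_MILE = 1609.344
SECONDS_PER_HOUR = 3600

# Upper bound on operations available per frame (assumes all ops go to vision).
ops_per_frame = OPS_PER_SECOND / FRAMES_PER_SECOND

# Distance the drone covers between two consecutive frames.
speed_m_per_s = SPEED_MPH * METERS_PER_MILE / SECONDS_PER_HOUR
meters_per_frame = speed_m_per_s / FRAMES_PER_SECOND

print(f"{ops_per_frame:.2e} operations per frame")
print(f"{meters_per_frame:.2f} meters traveled per frame")
```

Under these assumptions the drone has about 33 billion operations to spend on each frame and moves roughly half a meter between frames.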